| code | repo_path |
|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # 09 Strain Gage

#

# This is one of the most commonly used sensors, and it is found in many transducers. Its fundamental operating principle is fairly easy to understand, and explaining it is the purpose of this lecture.

#

# A strain gage is essentially a thin wire that is wrapped on a film of plastic.

# <img src="img/StrainGage.png" width="200">

# The strain gage is then mounted (glued) on the part for which the strain must be measured.

# <img src="img/Strain_gauge_2.jpg" width="200">

#

# ## Stress, Strain

# When a beam is under axial load, the axial stress, $\sigma_a$, is defined as:

# \begin{align*}

# \sigma_a = \frac{F}{A}

# \end{align*}

# with $F$ the axial load, and $A$ the cross sectional area of the beam under axial load.

#

# <img src="img/BeamUnderStrain.png" width="200">

#

# Under the load, the beam of length $L$ will extend by $dL$, giving rise to the definition of strain, $\epsilon_a$:

# \begin{align*}

# \epsilon_a = \frac{dL}{L}

# \end{align*}

# The beam will also contract laterally: the cross sectional area is reduced by $dA$. This results in a transversal strain $\epsilon_t$. The transversal and axial strains are related by Poisson's ratio:

# \begin{align*}

# \nu = - \frac{\epsilon_t }{\epsilon_a}

# \end{align*}

# For a metal, Poisson's ratio is typically $\nu = 0.3$; for an incompressible material, such as rubber (or water), $\nu = 0.5$.

#

# Within the elastic limit, the axial stress and axial strain are related through Hooke's law by the Young's modulus, $E$:

# \begin{align*}

# \sigma_a = E \epsilon_a

# \end{align*}

#

# <img src="img/ElasticRegime.png" width="200">
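#
# As a quick numerical illustration of the definitions above, here is a minimal sketch for a bar in tension. The load, area, and modulus are illustrative assumptions (typical orders of magnitude for a small steel bar), not values from the lecture.

```python
# Illustrative values (assumptions, not from the lecture)
F = 10e3      # axial load, N
A = 1.0e-4    # cross sectional area, m^2 (1 cm^2)
E = 200e9     # Young's modulus, Pa (typical order for steel)
nu = 0.3      # Poisson's ratio (typical for metals)

sigma_a = F / A        # axial stress, Pa
eps_a = sigma_a / E    # axial strain, from Hooke's law
eps_t = -nu * eps_a    # transversal strain, from Poisson's ratio

print('sigma_a =', sigma_a / 1e6, 'MPa')
print('eps_a   =', eps_a * 1e6, 'microstrain')
print('eps_t   =', eps_t * 1e6, 'microstrain')
```

# The negative sign of `eps_t` reflects the lateral contraction that accompanies axial extension.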

# ## Resistance of a wire

#

# The electrical resistance of a wire $R$ is related to its physical properties (the electrical resistivity, $\rho$ in $\Omega\cdot$m) and its geometry: length $L$ and cross sectional area $A$.

#

# \begin{align*}

# R = \frac{\rho L}{A}

# \end{align*}

#

# Mathematically, a change in the wire dimensions will result in a change in its electrical resistance. This can be derived from first principles by taking the logarithmic differential of $R = \rho L / A$:

# \begin{align}

# \frac{dR}{R} = \frac{d\rho}{\rho} + \frac{dL}{L} - \frac{dA}{A}

# \end{align}

# If the wire has a square cross section, then:

# \begin{align*}

# A & = L'^2 \\

# \frac{dA}{A} & = \frac{d(L'^2)}{L'^2} = \frac{2L'dL'}{L'^2} = 2 \frac{dL'}{L'}

# \end{align*}

# We have related the change in cross sectional area to the transversal strain.

# \begin{align*}

# \epsilon_t = \frac{dL'}{L'}

# \end{align*}

# Using Poisson's ratio, we can then relate the change in cross-sectional area ($dA/A$) to the axial strain $\epsilon_a = dL/L$.

# \begin{align*}

# \epsilon_t &= - \nu \epsilon_a \\

# \frac{dL'}{L'} &= - \nu \frac{dL}{L} \; \text{or}\\

# \frac{dA}{A} & = 2\frac{dL'}{L'} = -2 \nu \frac{dL}{L}

# \end{align*}

# Finally, we can substitute the expression for $dA/A$ in the equation for $dR/R$ and relate the change in resistance to the change of wire geometry, remembering that for a metal $\nu = 0.3$, so that $1 + 2\nu = 1.6$:

# \begin{align}

# \frac{dR}{R} & = \frac{d\rho}{\rho} + \frac{dL}{L} - \frac{dA}{A} \\

# & = \frac{d\rho}{\rho} + \frac{dL}{L} - (-2\nu \frac{dL}{L}) \\

# & = \frac{d\rho}{\rho} + 1.6 \frac{dL}{L} = \frac{d\rho}{\rho} + 1.6 \epsilon_a

# \end{align}

# It also happens that for most metals, the resistivity increases with axial strain. In general, one can then relate the change in resistance to axial strain by defining the strain gage factor:

# \begin{align}

# S = 1.6 + \frac{d\rho}{\rho}\cdot \frac{1}{\epsilon_a}

# \end{align}

# and finally, we have:

# \begin{align*}

# \frac{dR}{R} = S \epsilon_a

# \end{align*}

# $S$ is material dependent and is typically equal to 2.0 for most commercially available strain gages. It is dimensionless.
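#
# To get a feel for the magnitudes involved, the short sketch below evaluates the geometric part of the gage factor and the resistance change $dR = S \epsilon_a R$ for one strain value. The strain level and the nominal resistance are illustrative assumptions.

```python
nu = 0.3                    # Poisson's ratio assumed in the derivation above
S_geometric = 1 + 2 * nu    # contribution of the geometry change alone

S = 2.0                     # typical commercial gage factor
R_ini = 120.0               # nominal gage resistance, Ohm (assumed)
eps_a = 500e-6              # axial strain of 500 microstrain (assumed)

dR = S * eps_a * R_ini      # absolute resistance change, Ohm

print('geometric part of S =', S_geometric)
print('dR =', dR, 'Ohm')
```

# The difference between $S = 2.0$ and the geometric value of 1.6 is the resistivity term $\frac{d\rho}{\rho}\cdot\frac{1}{\epsilon_a}$.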

#

# Strain gages are made of thin wire that is wrapped in several loops, effectively increasing the length of the wire and therefore the sensitivity of the sensor.

#

# _Question:

#

# Explain why a longer wire is necessary to increase the sensitivity of the sensor_.

#

# Most commercially available strain gages have a nominal resistance (resistance under no load, $R_{ini}$) of 120 or 350 $\Omega$.

#

# Within the elastic regime, strain is typically within the range $10^{-6} - 10^{-3}$; in fact, strain is usually expressed in units of microstrain, with 1 microstrain = $10^{-6}$. Therefore, relative changes in resistance will be of the same order. If one were to measure the resistance directly, one would need a dynamic range of about 120 dB, which is typically very expensive. Instead, one uses the Wheatstone bridge to transform the change in resistance into a voltage, which is easier to measure and does not require such a large dynamic range.
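#
# The 120 dB figure can be checked with a short calculation. This is a sketch that assumes the usual amplitude convention, $20 \log_{10}$ of the ratio between the full-scale quantity and the smallest change to resolve; the gage values are the typical ones quoted in the text.

```python
import math

R_ini = 120.0    # nominal resistance, Ohm
S = 2.0          # typical gage factor

# Absolute resistance change at the two ends of the elastic strain range
for eps in (1e-6, 1e-3):
    print('strain =', eps, '-> dR =', S * eps * R_ini, 'Ohm')

# Order-of-magnitude estimate used in the text: resolve 1 part in 10^6
dynamic_range_dB = 20 * math.log10(1 / 1e-6)

# Same estimate including the gage factor: resolve dR/R = S * 1e-6
dynamic_range_with_S_dB = 20 * math.log10(1 / (S * 1e-6))

print('dynamic range (order of magnitude) =', dynamic_range_dB, 'dB')
print('dynamic range (with S = 2)         =', round(dynamic_range_with_S_dB, 1), 'dB')
```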

# ## Wheatstone bridge:

# <img src="img/WheatstoneBridge.png" width="200">

#

# The output voltage is related to the difference in resistances in the bridge:

# \begin{align*}

# \frac{V_o}{V_s} = \frac{R_1R_3-R_2R_4}{(R_1+R_4)(R_2+R_3)}

# \end{align*}

#

# If the bridge is balanced, then $V_o = 0$, which implies $R_1/R_2 = R_4/R_3$.

#

# In practice, finding a set of resistors that balances the bridge is challenging, and a potentiometer is used as one of the resistances to make minor adjustments to balance the bridge. If one did not make this adjustment (i.e. if we did not zero the bridge), then all the measurements would have an offset, or bias, that could be removed in a post-processing phase, as long as the bias stayed constant.

#

# Suppose each resistance $R_i$ is made to vary slightly around its initial value, i.e. $R_i = R_{i,ini} + dR_i$. For simplicity, we will assume that the initial values of the four resistances are equal, i.e. $R_{1,ini} = R_{2,ini} = R_{3,ini} = R_{4,ini} = R_{ini}$, which implies that the bridge was initially balanced. To first order in $dR_i/R_{ini}$, the output voltage is then:

#

# \begin{align*}

# \frac{V_o}{V_s} = \frac{1}{4} \left( \frac{dR_1}{R_{ini}} - \frac{dR_2}{R_{ini}} + \frac{dR_3}{R_{ini}} - \frac{dR_4}{R_{ini}} \right)

# \end{align*}

#

# Note here that the changes in $R_1$ and $R_3$ have a positive effect on $V_o$, while the changes in $R_2$ and $R_4$ have a negative effect on $V_o$. In practice, this means that if a beam is in tension, then a strain gage mounted on branch 1 or 3 of the Wheatstone bridge will produce a positive voltage, while a strain gage mounted on branch 2 or 4 will produce a negative voltage. One takes advantage of this to increase the sensitivity of the strain measurement.
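#
# The exact bridge equation and its first-order approximation can be compared numerically. The sketch below implements both formulas as written above; the function names are hypothetical helpers introduced for illustration, and the resistance change corresponds to $S = 2$ at 1000 microstrain on a 120 $\Omega$ gage.

```python
def bridge_ratio(R1, R2, R3, R4):
    """Exact Vo/Vs for the bridge equation given above."""
    return (R1 * R3 - R2 * R4) / ((R1 + R4) * (R2 + R3))


def bridge_ratio_linear(dR1, dR2, dR3, dR4, R_ini):
    """First-order Vo/Vs for four equal nominal resistances R_ini."""
    return 0.25 * (dR1 - dR2 + dR3 - dR4) / R_ini


R_ini = 120.0
dR = 0.24    # Ohm: S = 2 at 1000 microstrain

exact_branch1 = bridge_ratio(R_ini + dR, R_ini, R_ini, R_ini)
linear_branch1 = bridge_ratio_linear(dR, 0.0, 0.0, 0.0, R_ini)
exact_branch2 = bridge_ratio(R_ini, R_ini + dR, R_ini, R_ini)

print('gage on branch 1, exact :', exact_branch1)
print('gage on branch 1, linear:', linear_branch1)
print('gage on branch 2, exact :', exact_branch2)
```

# Even at the upper end of the elastic range, the linearized expression differs from the exact one by only about 0.1 %, and moving the gage from branch 1 to branch 2 flips the sign of the output.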

#

# ### Quarter bridge

# One uses only one quarter of the bridge, ie strain gages are only mounted on one branch of the bridge.

#

# \begin{align*}

# \frac{V_o}{V_s} = \pm \frac{1}{4} \epsilon_a S

# \end{align*}

# Sensitivity, $G$:

# \begin{align*}

# G = \frac{V_o}{\epsilon_a} = \pm \frac{1}{4}S V_s

# \end{align*}

#

#

# ### Half bridge

# One uses half of the bridge, ie strain gages are mounted on two branches of the bridge.

#

# \begin{align*}

# \frac{V_o}{V_s} = \pm \frac{1}{2} \epsilon_a S

# \end{align*}

#

# ### Full bridge

#

# One uses all of the branches of the bridge, i.e. strain gages are mounted on each branch.

#

# \begin{align*}

# \frac{V_o}{V_s} = \pm \epsilon_a S

# \end{align*}

#

# Therefore, as we increase the order of the bridge, the sensitivity of the instrument increases. However, one should be careful about how the strain gages are mounted so as not to cancel out their measurements.
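#
# The three configurations, and the cancellation pitfall, can be illustrated with the linearized bridge equation. This sketch assumes a beam in bending, so that gages on opposite faces see equal and opposite strains; the helper function and the numerical values are illustrative assumptions.

```python
def bridge_ratio_linear(e1, e2, e3, e4, S):
    """First-order Vo/Vs when the gage on branch i sees strain e_i (0 for a fixed resistor)."""
    return 0.25 * S * (e1 - e2 + e3 - e4)


S = 2.0
eps = 500e-6    # magnitude of the strain on each active gage (assumed)

quarter = bridge_ratio_linear(eps, 0.0, 0.0, 0.0, S)      # one active gage
half = bridge_ratio_linear(eps, -eps, 0.0, 0.0, S)        # tension on 1, compression on 2
full = bridge_ratio_linear(eps, -eps, eps, -eps, S)       # all four branches active
cancelled = bridge_ratio_linear(eps, eps, 0.0, 0.0, S)    # same strain on adjacent branches

print('quarter  :', quarter)
print('half     :', half)
print('full     :', full)
print('cancelled:', cancelled)
```

# Two gages that see the same strain on branches 1 and 2 cancel each other, which is the mounting mistake to avoid.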

# _Exercise_

#

# 1- Wheatstone bridge

#

# <img src="img/WheatstoneBridge.png" width="200">

#

# > How important is it to know \& match the resistances of the resistors you employ to create your bridge?

# > How would you do that practically?

# > Assume $R_1=120\,\Omega$, $R_2=120\,\Omega$, $R_3=120\,\Omega$, $R_4=110\,\Omega$, $V_s=5.00\,\text{V}$. What is $V_\circ$?

Vs = 5.00

Vo = (120**2-120*110)/(230*240) * Vs

print('Vo = ',Vo, ' V')

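# Typical range of strain that a strain gage can measure:
# 1 - 1000 microstrain

AxialStrain = 1000*10**(-6) # axial strain

StrainGageFactor = 2

R_ini = 120 # Ohm

# Resistance of the strained gage. In the bridge formula below it sits in
# branch 2 or 4 (the expression is the same for both), hence the negative output.
R_gage = R_ini+R_ini*StrainGageFactor*AxialStrain

print(R_gage)

Vo = (120**2-120*(R_gage))/((120+R_gage)*240) * Vs

print('Vo = ', Vo, ' V')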

# > How important is it to know \& match the resistances of the resistors you employ to create your bridge?

# > How would you do that practically?

# > Assume $R_1= R_2 =R_3=120\,\Omega$, $R_4=120.01\,\Omega$, $V_s=5.00\,\text{V}$. What is $V_\circ$?

Vs = 5.00

Vo = (120**2-120*120.01)/(240.01*240) * Vs

print(Vo)

# 2- Strain gage 1:

#

# One measures the strain on a bridge steel beam. The modulus of elasticity is $E=190$ GPa. Only one strain gage is mounted on the bottom of the beam; the strain gage factor is $S=2.02$.

#

# > a) What kind of electronic circuit will you use? Draw a sketch of it.

#

# > b) Assume all your resistors including the unloaded strain gage are balanced and measure $120\,\Omega$, and that the strain gage is at location $R_2$. The supply voltage is $5.00\,\text{VDC}$. Will $V_\circ$ be positive or negative when a downward load is added?

# In practice, we cannot have all resistances exactly equal to 120 $\Omega$: at zero load, the bridge will be unbalanced ($V_o \neq 0$). How could we balance our bridge?

#

# Use a potentiometer to balance the bridge; for the load cell, we ''zero'' the instrument.

#

# Another option to zero out the instrument: take data at zero load, record the voltage $V_{o,noload}$, and subtract $V_{o,noload}$ from the data.

# > c) For a loading in which $V_\circ = -1.25\,\text{mV}$, calculate the strain $\epsilon_a$ in units of microstrain.

# \begin{align*}

# \frac{V_o}{V_s} & = - \frac{1}{4} \epsilon_a S\\

# \epsilon_a & = -\frac{4}{S} \frac{V_o}{V_s}

# \end{align*}

S = 2.02

Vo = -0.00125

Vs = 5

eps_a = -1*(4/S)*(Vo/Vs)

print(eps_a*1e6, ' microstrain')

# > d) Calculate the axial stress (in MPa) in the beam under this load.

# > e) You now want more sensitivity in your measurement, so you install a second strain gage on top of the beam. Which resistor should you use for this second active strain gage?

#

# > f) With this new setup and the same applied load as previously, what should be the output voltage?
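#
# One possible way to work through parts d) and f) numerically is sketched below. It assumes pure bending with both gages at the same distance from the neutral axis, so that the top gage sees a strain equal and opposite to the bottom gage, and it assumes the second gage is placed on branch 1, next to the first gage on branch 2. Other valid placements exist.

```python
S = 2.02        # gage factor from the problem statement
Vs = 5.00       # supply voltage, V
Vo = -1.25e-3   # measured output with one gage on branch 2, V
E = 190e9       # modulus of elasticity, Pa

# Part c): strain from the quarter-bridge relation with the gage on branch 2
eps_a = -(4 / S) * (Vo / Vs)

# Part d): axial stress from Hooke's law, converted to MPa
sigma_a_MPa = E * eps_a / 1e6

# Part f): half bridge, bottom gage on branch 2 (tension), top gage on branch 1 (compression)
dR_over_R_bottom = S * eps_a
dR_over_R_top = -S * eps_a    # assumed equal and opposite strain
Vo_half = 0.25 * (dR_over_R_top - dR_over_R_bottom) * Vs

print('strain  =', eps_a * 1e6, 'microstrain')
print('stress  =', sigma_a_MPa, 'MPa')
print('Vo half =', Vo_half * 1e3, 'mV')
```

# Under these assumptions the half bridge doubles the magnitude of the output voltage for the same load.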

# 3- Strain Gage with Long Lead Wires

#

# <img src="img/StrainGageLongWires.png" width="360">

#

# A quarter bridge strain gage Wheatstone bridge circuit is constructed with $120\,\Omega$ resistors and a $120\,\Omega$ strain gage. For this practical application, the strain gage is located very far away from the DAQ station: the lead wires to the strain gage are $10\,\text{m}$ long and have a resistance of $0.080\,\Omega/\text{m}$. The lead wire resistance can lead to problems since $R_{lead}$ changes with temperature.

#

# > Design a modified circuit that will cancel out the effect of the lead wires.
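#
# A common remedy is a three-wire connection, and the sketch below compares it with the plain two-wire connection using the bridge equation from this lecture. It is one possible design, not necessarily the one intended by the exercise. It assumes the gage is on branch 1, that in the three-wire case one lead is moved into the neighbouring branch 4 of the same divider, and that the third lead sits in the voltage-sensing line where it carries negligible current.

```python
def bridge_ratio(R1, R2, R3, R4):
    """Exact Vo/Vs for the bridge equation used in this lecture."""
    return (R1 * R3 - R2 * R4) / ((R1 + R4) * (R2 + R3))


R = 120.0                 # bridge resistors and unstrained gage, Ohm
R_lead = 10 * 0.080       # resistance of one 10 m lead wire, Ohm
S = 2.0                   # gage factor (assumed, not given in the exercise)

# Two-wire: both leads add to the gage branch
two_wire = bridge_ratio(R + 2 * R_lead, R, R, R)

# Three-wire: one lead in the gage branch, one in the adjacent branch
three_wire = bridge_ratio(R + R_lead, R, R, R + R_lead)

# Strain that the two-wire offset would be mistaken for (quarter bridge)
apparent_strain = 4 * two_wire / S

print('two-wire offset   Vo/Vs =', two_wire)
print('three-wire offset Vo/Vs =', three_wire)
print('apparent strain (two-wire) =', apparent_strain * 1e6, 'microstrain')
```

# With matched leads, equal resistance is added to both branches of the same divider, so the offset vanishes and a common temperature drift of the leads no longer unbalances the bridge.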

# ## Homework

#

| Lectures/09_StrainGage.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# <table class="ee-notebook-buttons" align="left">

# <td><a target="_blank" href="https://github.com/giswqs/earthengine-py-notebooks/tree/master/Algorithms/landsat_radiance.ipynb"><img width=32px src="https://www.tensorflow.org/images/GitHub-Mark-32px.png" /> View source on GitHub</a></td>

# <td><a target="_blank" href="https://nbviewer.jupyter.org/github/giswqs/earthengine-py-notebooks/blob/master/Algorithms/landsat_radiance.ipynb"><img width=26px src="https://upload.wikimedia.org/wikipedia/commons/thumb/3/38/Jupyter_logo.svg/883px-Jupyter_logo.svg.png" />Notebook Viewer</a></td>

# <td><a target="_blank" href="https://colab.research.google.com/github/giswqs/earthengine-py-notebooks/blob/master/Algorithms/landsat_radiance.ipynb"><img src="https://www.tensorflow.org/images/colab_logo_32px.png" /> Run in Google Colab</a></td>

# </table>

# ## Install Earth Engine API and geemap

# Install the [Earth Engine Python API](https://developers.google.com/earth-engine/python_install) and [geemap](https://geemap.org). The **geemap** Python package is built upon the [ipyleaflet](https://github.com/jupyter-widgets/ipyleaflet) and [folium](https://github.com/python-visualization/folium) packages and implements several methods for interacting with Earth Engine data layers, such as `Map.addLayer()`, `Map.setCenter()`, and `Map.centerObject()`.

# The following script checks if the geemap package has been installed. If not, it will install geemap, which automatically installs its [dependencies](https://github.com/giswqs/geemap#dependencies), including earthengine-api, folium, and ipyleaflet.

# +

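# Installs geemap package
import subprocess
import sys

try:
    import geemap
except ImportError:
    print('Installing geemap ...')
    # Use the running interpreter so the package is installed in the active environment
    subprocess.check_call([sys.executable, '-m', 'pip', 'install', 'geemap'])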

# -

import ee

import geemap

# ## Create an interactive map

# The default basemap is `Google Maps`. [Additional basemaps](https://github.com/giswqs/geemap/blob/master/geemap/basemaps.py) can be added using the `Map.add_basemap()` function.

Map = geemap.Map(center=[40,-100], zoom=4)

Map

# ## Add Earth Engine Python script

# +

# Add Earth Engine dataset

# Load a raw Landsat scene and display it.

raw = ee.Image('LANDSAT/LC08/C01/T1/LC08_044034_20140318')

Map.centerObject(raw, 10)

Map.addLayer(raw, {'bands': ['B4', 'B3', 'B2'], 'min': 6000, 'max': 12000}, 'raw')

# Convert the raw data to radiance.

radiance = ee.Algorithms.Landsat.calibratedRadiance(raw)

Map.addLayer(radiance, {'bands': ['B4', 'B3', 'B2'], 'max': 90}, 'radiance')

# Convert the raw data to top-of-atmosphere reflectance.

toa = ee.Algorithms.Landsat.TOA(raw)

Map.addLayer(toa, {'bands': ['B4', 'B3', 'B2'], 'max': 0.2}, 'toa reflectance')

# -
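# The two conversions above run on the Earth Engine servers. For intuition, the sketch below applies the linear rescaling that the Landsat 8 product documentation describes, using the per-band coefficients found in the scene metadata (`RADIANCE_MULT_BAND_x`, `RADIANCE_ADD_BAND_x`, `REFLECTANCE_MULT_BAND_x`, `REFLECTANCE_ADD_BAND_x`, `SUN_ELEVATION`). The radiance coefficients, pixel value, and sun elevation are illustrative assumptions, and Earth Engine's own implementation may differ in detail.

```python
import math

# Illustrative metadata values (assumptions, not read from the scene above)
radiance_mult = 0.012       # RADIANCE_MULT_BAND_x
radiance_add = -60.0        # RADIANCE_ADD_BAND_x
reflectance_mult = 2.0e-5   # REFLECTANCE_MULT_BAND_x
reflectance_add = -0.1      # REFLECTANCE_ADD_BAND_x
sun_elevation_deg = 45.0    # SUN_ELEVATION

dn = 9000                   # raw quantized pixel value (digital number)

# At-sensor spectral radiance
radiance = radiance_mult * dn + radiance_add

# Top-of-atmosphere reflectance, corrected for the sun angle
toa_uncorrected = reflectance_mult * dn + reflectance_add
toa = toa_uncorrected / math.sin(math.radians(sun_elevation_deg))

print('radiance        =', radiance)
print('TOA reflectance =', toa)
```

# With these assumed numbers the results fall inside the display ranges used above (`max` of 90 for radiance and 0.2 for reflectance).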

# ## Display Earth Engine data layers

Map.addLayerControl() # This line is not needed for ipyleaflet-based Map.

Map

| Algorithms/landsat_radiance.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

# Copyright 2020 NVIDIA Corporation. All Rights Reserved.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

# ==============================================================================

# -

# <img src="http://developer.download.nvidia.com/compute/machine-learning/frameworks/nvidia_logo.png" style="width: 90px; float: right;">

#

# # Object Detection with TRTorch (SSD)

# ---

# ## Overview

#

#

# In PyTorch 1.0, TorchScript was introduced as a method to separate your PyTorch model from Python, make it portable and optimizable.

#

# TRTorch is a compiler that uses TensorRT (NVIDIA's Deep Learning Optimization SDK and Runtime) to optimize TorchScript code. It compiles standard TorchScript modules into ones that internally run with TensorRT optimizations.

#

# TensorRT can take models from any major framework and specifically tune them to perform better on specific target hardware in the NVIDIA family, and TRTorch enables us to continue to remain in the PyTorch ecosystem whilst doing so. This allows us to leverage the great features in PyTorch, including module composability, its flexible tensor implementation, data loaders and more. TRTorch is available to use with both PyTorch and LibTorch.

#

# To get more background information on this, we suggest the **lenet-getting-started** notebook as a primer for getting started with TRTorch.

# ### Learning objectives

#

# This notebook demonstrates the steps for compiling a TorchScript module with TRTorch on a pretrained SSD network, and running it to test the speedup obtained.

#

# ## Contents

# 1. [Requirements](#1)

# 2. [SSD Overview](#2)

# 3. [Creating TorchScript modules](#3)

# 4. [Compiling with TRTorch](#4)

# 5. [Running Inference](#5)

# 6. [Measuring Speedup](#6)

# 7. [Conclusion](#7)

# ---

# <a id="1"></a>

# ## 1. Requirements

#

# Follow the steps in `notebooks/README` to prepare a Docker container, within which you can run this demo notebook.

#

# In addition to that, run the following cell to obtain additional libraries specific to this demo.

# Known working versions

# !pip install numpy==1.21.2 scipy==1.5.2 Pillow==6.2.0 scikit-image==0.17.2 matplotlib==3.3.0

# ---

# <a id="2"></a>

# ## 2. SSD

#

# ### Single Shot MultiBox Detector model for object detection

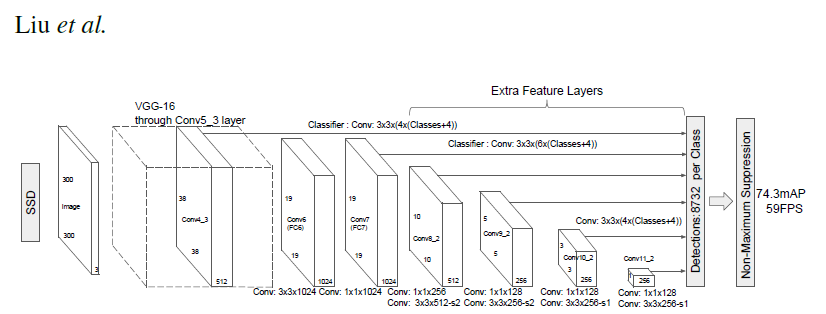

#

# PyTorch has a model repository called the PyTorch Hub, which is a source for high quality implementations of common models. We can get our SSD model pretrained on [COCO](https://cocodataset.org/#home) from there.

#

# ### Model Description

#

# This SSD300 model is based on the

# [SSD: Single Shot MultiBox Detector](https://arxiv.org/abs/1512.02325) paper, which

# describes SSD as "a method for detecting objects in images using a single deep neural network".

# The input size is fixed to 300x300.

#

# The main difference between this model and the one described in the paper is in the backbone.

# Specifically, the VGG model is obsolete and is replaced by the ResNet-50 model.

#

# From the

# [Speed/accuracy trade-offs for modern convolutional object detectors](https://arxiv.org/abs/1611.10012)

# paper, the following enhancements were made to the backbone:

# * The conv5_x, avgpool, fc and softmax layers were removed from the original classification model.

# * All strides in conv4_x are set to 1x1.

#

# The backbone is followed by 5 additional convolutional layers.

# In addition to the convolutional layers, we attached 6 detection heads:

# * The first detection head is attached to the last conv4_x layer.

# * The other five detection heads are attached to the corresponding 5 additional layers.

#

# Detector heads are similar to the ones referenced in the paper, however,

# they are enhanced by additional BatchNorm layers after each convolution.

#

# More information about this SSD model is available at Nvidia's "DeepLearningExamples" Github [here](https://github.com/NVIDIA/DeepLearningExamples/tree/master/PyTorch/Detection/SSD).

import torch

torch.hub._validate_not_a_forked_repo=lambda a,b,c: True

# List of available models in PyTorch Hub from Nvidia/DeepLearningExamples

torch.hub.list('NVIDIA/DeepLearningExamples:torchhub')

# load SSD model pretrained on COCO from Torch Hub

precision = 'fp32'

ssd300 = torch.hub.load('NVIDIA/DeepLearningExamples:torchhub', 'nvidia_ssd', model_math=precision);

# Setting `precision="fp16"` will load a checkpoint trained with mixed precision

# into architecture enabling execution on Tensor Cores. Handling mixed precision data requires the Apex library.

# ### Sample Inference

# We can now run inference on the model. This is demonstrated below using sample images from the COCO 2017 Validation set.

# +

# Sample images from the COCO validation set

uris = [

'http://images.cocodataset.org/val2017/000000397133.jpg',

'http://images.cocodataset.org/val2017/000000037777.jpg',

'http://images.cocodataset.org/val2017/000000252219.jpg'

]

# For convenient and comprehensive formatting of input and output of the model, load a set of utility methods.

utils = torch.hub.load('NVIDIA/DeepLearningExamples:torchhub', 'nvidia_ssd_processing_utils')

# Format images to comply with the network input

inputs = [utils.prepare_input(uri) for uri in uris]

tensor = utils.prepare_tensor(inputs, False)

# The model was trained on COCO dataset, which we need to access in order to

# translate class IDs into object names.

classes_to_labels = utils.get_coco_object_dictionary()

# +

# Next, we run object detection

model = ssd300.eval().to("cuda")

detections_batch = model(tensor)

# By default, raw output from SSD network per input image contains 8732 boxes with

# localization and class probability distribution.

# Let’s filter this output to only get reasonable detections (confidence>40%) in a more comprehensive format.

results_per_input = utils.decode_results(detections_batch)

best_results_per_input = [utils.pick_best(results, 0.40) for results in results_per_input]

# -
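# The figure of 8732 boxes per image can be reproduced with a short calculation. This sketch assumes the standard SSD300 layout from the SSD paper: six detection heads on square feature maps of the sizes listed below, with 4 or 6 default boxes per cell. The model used here reports the same total, but its exact layout is not stated in this notebook.

```python
# Assumed SSD300 layout (from the SSD paper, not read from this model)
feature_map_sizes = [38, 19, 10, 5, 3, 1]   # one entry per detection head
boxes_per_cell = [4, 6, 6, 6, 4, 4]         # default boxes at each cell

boxes_per_head = [size * size * n for size, n in zip(feature_map_sizes, boxes_per_cell)]
total_boxes = sum(boxes_per_head)

print('boxes per head:', boxes_per_head)
print('total boxes   :', total_boxes)
```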

# ### Visualize results

# +

from matplotlib import pyplot as plt

import matplotlib.patches as patches

# The utility plots the images and predicted bounding boxes (with confidence scores).
def plot_results(best_results):
    for image_idx in range(len(best_results)):
        fig, ax = plt.subplots(1)
        # Show original, denormalized image...
        image = inputs[image_idx] / 2 + 0.5
        ax.imshow(image)
        # ...with detections
        bboxes, classes, confidences = best_results[image_idx]
        for idx in range(len(bboxes)):
            left, bot, right, top = bboxes[idx]
            x, y, w, h = [val * 300 for val in [left, bot, right - left, top - bot]]
            rect = patches.Rectangle((x, y), w, h, linewidth=1, edgecolor='r', facecolor='none')
            ax.add_patch(rect)
            ax.text(x, y, "{} {:.0f}%".format(classes_to_labels[classes[idx] - 1], confidences[idx]*100), bbox=dict(facecolor='white', alpha=0.5))
    plt.show()

# -

# Visualize results without TRTorch/TensorRT

plot_results(best_results_per_input)
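# The plotting utility converts each box from normalized corner coordinates to the pixel-space `(x, y, width, height)` form that `matplotlib.patches.Rectangle` expects. The sketch below isolates that step; `to_pixel_xywh` is a hypothetical helper name, and the sample box is made up for illustration.

```python
def to_pixel_xywh(box, image_size=300):
    """Convert a normalized (left, bot, right, top) box to pixel (x, y, w, h)."""
    left, bot, right, top = box
    return tuple(val * image_size for val in (left, bot, right - left, top - bot))


# A made-up box covering the region from (10 %, 20 %) to (50 %, 60 %) of the image
x, y, w, h = to_pixel_xywh((0.1, 0.2, 0.5, 0.6))
print(x, y, w, h)
```

# The factor of 300 matches the fixed 300x300 input size of this model.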

# ### Benchmark utility

# +

import time

import numpy as np

import torch.backends.cudnn as cudnn

cudnn.benchmark = True

# Helper function to benchmark the model
def benchmark(model, input_shape=(1024, 1, 32, 32), dtype='fp32', nwarmup=50, nruns=1000):
    input_data = torch.randn(input_shape)
    input_data = input_data.to("cuda")
    if dtype=='fp16':
        input_data = input_data.half()

    print("Warm up ...")
    with torch.no_grad():
        for _ in range(nwarmup):
            features = model(input_data)
    torch.cuda.synchronize()
    print("Start timing ...")
    timings = []
    with torch.no_grad():
        for i in range(1, nruns+1):
            start_time = time.time()
            pred_loc, pred_label = model(input_data)
            torch.cuda.synchronize()
            end_time = time.time()
            timings.append(end_time - start_time)
            if i%10==0:
                print('Iteration %d/%d, avg batch time %.2f ms'%(i, nruns, np.mean(timings)*1000))

    print("Input shape:", input_data.size())
    print("Output location prediction size:", pred_loc.size())
    print("Output label prediction size:", pred_label.size())
    print('Average batch time: %.2f ms'%(np.mean(timings)*1000))

# -
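# The benchmark helper follows a common pattern: run a few untimed warm-up iterations, then time each run and average. The sketch below shows the same pattern on a plain Python callable so that it runs without a GPU. `time_callable` and `work` are hypothetical names, and `time.perf_counter` is swapped in for `time.time` because it is the clock intended for interval timing.

```python
import statistics
import time


def time_callable(fn, nwarmup=5, nruns=20):
    """Return (mean, standard deviation) of the run time of fn in seconds."""
    for _ in range(nwarmup):    # warm-up runs are not timed
        fn()
    timings = []
    for _ in range(nruns):
        start = time.perf_counter()
        fn()
        timings.append(time.perf_counter() - start)
    return statistics.mean(timings), statistics.stdev(timings)


def work():
    # Stand-in workload; in the notebook this would be a model forward pass
    return sum(i * i for i in range(10_000))


mean_s, std_s = time_callable(work)
print('mean %.3f ms, std %.3f ms' % (mean_s * 1000, std_s * 1000))
```

# On a GPU, the call to `torch.cuda.synchronize()` inside the timed region matters because CUDA kernels are launched asynchronously; without it the timer would stop before the work is finished.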

# We check how well the model performs **before** we use TRTorch/TensorRT

# Model benchmark without TRTorch/TensorRT

model = ssd300.eval().to("cuda")

benchmark(model, input_shape=(128, 3, 300, 300), nruns=100)

# ---

# <a id="3"></a>

# ## 3. Creating TorchScript modules

# To compile with TRTorch, the model must first be in **TorchScript**. TorchScript is a programming language included in PyTorch which removes the Python dependency normal PyTorch models have. This conversion is done via a JIT compiler which given a PyTorch Module will generate an equivalent TorchScript Module. There are two paths that can be used to generate TorchScript: **Tracing** and **Scripting**. <br>

# - Tracing follows execution of PyTorch generating ops in TorchScript corresponding to what it sees. <br>

# - Scripting does an analysis of the Python code and generates TorchScript, this allows the resulting graph to include control flow which tracing cannot do.

#

# Tracing however due to its simplicity is more likely to compile successfully with TRTorch (though both systems are supported).

model = ssd300.eval().to("cuda")

traced_model = torch.jit.trace(model, [torch.randn((1,3,300,300)).to("cuda")])

# If required, we can also save this model and use it independently of Python.

# This is just an example, and not required for the purposes of this demo

torch.jit.save(traced_model, "ssd_300_traced.jit.pt")

# Obtain the average time taken by a batch of input with Torchscript compiled modules

benchmark(traced_model, input_shape=(128, 3, 300, 300), nruns=100)

# ---

# <a id="4"></a>

# ## 4. Compiling with TRTorch

# TorchScript modules behave just like normal PyTorch modules and are intercompatible. From TorchScript we can now compile a TensorRT based module. This module will still be implemented in TorchScript but all the computation will be done in TensorRT.

# +

import trtorch

# The compiled module may use any of the precisions listed in "enabled_precisions".

# Here, FP16 kernels are enabled in addition to FP32.

trt_model = trtorch.compile(traced_model, {

"inputs": [trtorch.Input((3, 3, 300, 300))],

"enabled_precisions": {torch.float, torch.half}, # Run with FP16

"workspace_size": 1 << 20

})

# -

# ---

# <a id="5"></a>

# ## 5. Running Inference

# Next, we run object detection

# +

# using a TRTorch module is exactly the same as how we usually do inference in PyTorch i.e. model(inputs)

detections_batch = trt_model(tensor.to(torch.half)) # convert the input to half precision

# By default, raw output from SSD network per input image contains 8732 boxes with

# localization and class probability distribution.

# Let’s filter this output to only get reasonable detections (confidence>40%) in a more comprehensive format.

results_per_input = utils.decode_results(detections_batch)

best_results_per_input_trt = [utils.pick_best(results, 0.40) for results in results_per_input]

# -

# Now, let's visualize our predictions!

#

# Visualize results with TRTorch/TensorRT

plot_results(best_results_per_input_trt)

# We get similar results as before!

# ---

# <a id="6"></a>

# ## 6. Measuring Speedup

# We can run the benchmark function again to see the speedup gained! Compare this result with the same batch-size of input in the case without TRTorch/TensorRT above.

# +

batch_size = 128

# Recompiling with batch_size we use for evaluating performance

trt_model = trtorch.compile(traced_model, {

"inputs": [trtorch.Input((batch_size, 3, 300, 300))],

"enabled_precisions": {torch.float, torch.half}, # Run with FP16

"workspace_size": 1 << 20

})

benchmark(trt_model, input_shape=(batch_size, 3, 300, 300), nruns=100, dtype="fp16")

# -

# ---

# <a id="7"></a>

# ## 7. Conclusion

#

# In this notebook, we have walked through the complete process of compiling a TorchScript SSD300 model with TRTorch, and tested the performance impact of the optimization. We find that using the TRTorch compiled model, we gain a significant speedup in inference without any noticeable change in the detections on the sample images!

# ### Details

# For detailed information on model input and output,

# training recipes, inference and performance visit:

# [github](https://github.com/NVIDIA/DeepLearningExamples/tree/master/PyTorch/Detection/SSD)

# and/or [NGC](https://ngc.nvidia.com/catalog/model-scripts/nvidia:ssd_for_pytorch)

#

# ### References

#

# - [SSD: Single Shot MultiBox Detector](https://arxiv.org/abs/1512.02325) paper

# - [Speed/accuracy trade-offs for modern convolutional object detectors](https://arxiv.org/abs/1611.10012) paper

# - [SSD on NGC](https://ngc.nvidia.com/catalog/model-scripts/nvidia:ssd_for_pytorch)

# - [SSD on github](https://github.com/NVIDIA/DeepLearningExamples/tree/master/PyTorch/Detection/SSD)

| notebooks/ssd-object-detection-demo.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# **Run the following two cells before you begin.**

# %autosave 10

# ______________________________________________________________________

# **First, import your data set and define the sigmoid function.**

# <details>

# <summary>Hint:</summary>

# The definition of the sigmoid is $f(X) = \frac{1}{1 + e^{-X}}$.

# </details>

# +

# Import the data set

import pandas as pd

import numpy as np

import matplotlib as mpl

import matplotlib.pyplot as plt

from sklearn.model_selection import train_test_split

from sklearn.linear_model import LogisticRegression

import seaborn as sns

df = pd.read_csv('cleaned_data.csv')

# -

# Define the sigmoid function
def sigmoid(X):
    Y = 1 / (1 + np.exp(-X))
    return Y

# **Now, create a train/test split (80/20) with `PAY_1` and `LIMIT_BAL` as features and `default payment next month` as values. Use a random state of 24.**

# Create a train/test split

X_train, X_test, y_train, y_test = train_test_split(df[['PAY_1', 'LIMIT_BAL']].values, df['default payment next month'].values,test_size=0.2, random_state=24)

# ______________________________________________________________________

# **Next, instantiate LogisticRegression with the default options, but set the solver to `'liblinear'`.**

lr_model = LogisticRegression(solver='liblinear')

lr_model

# ______________________________________________________________________

# **Now, train on the training data and obtain predicted classes, as well as class probabilities, using the testing data.**

# Fit the logistic regression model on training data

lr_model.fit(X_train,y_train)

# Make predictions using `.predict()`

y_pred = lr_model.predict(X_test)

# Find class probabilities using `.predict_proba()`

y_pred_proba = lr_model.predict_proba(X_test)

# ______________________________________________________________________

# **Then, pull out the coefficients and intercept from the trained model and manually calculate predicted probabilities. You'll need to add a column of 1s to your features, to multiply by the intercept.**

# Add column of 1s to features

ones_and_features = np.hstack([np.ones((X_test.shape[0],1)), X_test])

print(ones_and_features)

np.ones((X_test.shape[0],1)).shape

# Get coefficients and intercepts from trained model

intercept_and_coefs = np.concatenate([lr_model.intercept_.reshape(1,1), lr_model.coef_], axis=1)

intercept_and_coefs

# Manually calculate predicted probabilities

X_lin_comb = np.dot(intercept_and_coefs, np.transpose(ones_and_features))

y_pred_proba_manual = sigmoid(X_lin_comb)
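# The same calculation can be written out for a few samples without NumPy, which makes each step explicit: form the linear combination of intercept and features, then pass it through the sigmoid. The intercept, coefficients, and feature rows below are made-up illustrative numbers, not values from the fitted model.

```python
import math


def sigmoid_scalar(z):
    """Sigmoid of a single number."""
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical model parameters and samples (two features per sample)
intercept = -1.0
coefs = [0.8, -0.5]
rows = [[0.0, 0.0], [2.0, 1.0], [-1.0, 3.0]]

# Linear combination: intercept * 1 + sum of coefficient * feature
lin_comb = [intercept + sum(c * x for c, x in zip(coefs, row)) for row in rows]
probs = [sigmoid_scalar(z) for z in lin_comb]

# Threshold at 0.5 to obtain predicted classes
classes = [int(p >= 0.5) for p in probs]

print(lin_comb)
print(probs)
print(classes)
```

# Prepending a column of 1s to the features, as done above with `np.hstack`, is simply a way to fold the intercept into a single dot product.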

# ______________________________________________________________________

# **Next, using a threshold of `0.5`, manually calculate predicted classes. Compare this to the class predictions output by scikit-learn.**

# Manually calculate predicted classes

y_pred_manual = y_pred_proba_manual >= 0.5

y_pred_manual.shape

y_pred.shape

# Compare to scikit-learn's predicted classes

np.array_equal(y_pred.reshape(1,-1), y_pred_manual)

y_test.shape

y_pred_proba_manual.shape

# ______________________________________________________________________

# **Finally, calculate ROC AUC using both scikit-learn's predicted probabilities, and your manually predicted probabilities, and compare.**

# + eid="e7697"

# Use scikit-learn's predicted probabilities to calculate ROC AUC

from sklearn.metrics import roc_auc_score

roc_auc_score(y_test, y_pred_proba[:,1])

# -

# Use manually calculated predicted probabilities to calculate ROC AUC

roc_auc_score(y_test, y_pred_proba_manual.reshape(y_pred_proba_manual.shape[1],))
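# The two scores should agree because ROC AUC depends only on how the samples are ranked. One way to see this is the pairwise interpretation: the AUC is the fraction of (positive, negative) pairs in which the positive sample receives the higher score, counting ties as one half. The sketch below implements that definition directly; `roc_auc_pairwise` is a hypothetical helper, it is quadratic in the number of samples, and the labels and scores are made up.

```python
def roc_auc_pairwise(y_true, scores):
    """ROC AUC as the fraction of correctly ranked (positive, negative) pairs."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5    # ties count as half
    return wins / (len(pos) * len(neg))


labels = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
auc = roc_auc_pairwise(labels, scores)
print(auc)
```

# Of the four positive/negative pairs in this toy example, three are ranked correctly.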

| Mini-Project-2/Project 4/Fitting_a_Logistic_Regression_Model.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# name: python2

# ---

# + [markdown] colab_type="text" id="1Pi_B2cvdBiW"

# ##### Copyright 2019 The TF-Agents Authors.

# + [markdown] colab_type="text" id="f5926O3VkG_p"

# ### Get Started

# <table class="tfo-notebook-buttons" align="left">

# <td>

# <a target="_blank" href="https://colab.research.google.com/github/tensorflow/agents/blob/master/tf_agents/colabs/8_networks_tutorial.ipynb"><img src="https://www.tensorflow.org/images/colab_logo_32px.png" />Run in Google Colab</a>

# </td>

# <td>

# <a target="_blank" href="https://github.com/tensorflow/agents/blob/master/tf_agents/colabs/8_networks_tutorial.ipynb"><img src="https://www.tensorflow.org/images/GitHub-Mark-32px.png" />View source on GitHub</a>

# </td>

# </table>

# + colab_type="code" id="xsLTHlVdiZP3" colab={}

# Note: If you haven't installed tf-agents yet, run:

# !pip install tf-nightly

# !pip install tfp-nightly

# !pip install tf-agents-nightly

# + [markdown] colab_type="text" id="lEgSa5qGdItD"

# ### Imports

# + colab_type="code" id="sdvop99JlYSM" colab={}

from __future__ import absolute_import

from __future__ import division

from __future__ import print_function

import abc

import tensorflow as tf

import numpy as np

from tf_agents.environments import random_py_environment

from tf_agents.environments import tf_py_environment

from tf_agents.networks import encoding_network

from tf_agents.networks import network

from tf_agents.networks import utils

from tf_agents.specs import array_spec

from tf_agents.utils import common as common_utils

from tf_agents.utils import nest_utils

tf.compat.v1.enable_v2_behavior()

# + [markdown] colab_type="text" id="31uij8nIo5bG"

# # Introduction

#

# In this colab we will cover how to define custom networks for your agents. The networks help us define the model that is trained by agents. In TF-Agents you will find several different types of networks which are useful across agents:

#

# **Main Networks**

#

# * **QNetwork**: Used in Q-learning for environments with discrete actions, this network maps an observation to value estimates for each possible action.

# * **CriticNetworks**: Also referred to as `ValueNetworks` in literature, learns to estimate some version of a Value function mapping some state into an estimate for the expected return of a policy. These networks estimate how good the state the agent is currently in is.

# * **ActorNetworks**: Learn a mapping from observations to actions. These networks are usually used by our policies to generate actions.

# * **ActorDistributionNetworks**: Similar to `ActorNetworks` but these generate a distribution which a policy can then sample to generate actions.

#

# **Helper Networks**

# * **EncodingNetwork**: Allows users to easily define a mapping of pre-processing layers to apply to a network's input.

# * **DynamicUnrollLayer**: Automatically resets the network's state on episode boundaries as it is applied over a time sequence.

# * **ProjectionNetwork**: Networks like `CategoricalProjectionNetwork` or `NormalProjectionNetwork` take inputs and generate the required parameters to generate Categorical, or Normal distributions.

#

# All examples in TF-Agents come with pre-configured networks. However, these networks are not set up to handle complex observations.

#

# If you have an environment which exposes more than one observation/action and you need to customize your networks then this tutorial is for you!

# + [markdown] id="ums84-YP_21F" colab_type="text"

# # Defining Networks

#

# ## Network API

#

# In TF-Agents we subclass from Keras [Networks](https://github.com/tensorflow/tensorflow/blob/master/tensorflow/python/keras/engine/network.py). With it we can:

#

# * Simplify copy operations required when creating target networks.

# * Perform automatic variable creation when calling `network.variables()`.

# * Validate inputs based on network input_specs.

#

# ## EncodingNetwork

# As mentioned above the `EncodingNetwork` allows us to easily define a mapping of pre-processing layers to apply to a network's input to generate some encoding.

#

# The EncodingNetwork is composed of the following mostly optional layers:

#

# * Preprocessing layers

# * Preprocessing combiner

# * Conv2D

# * Flatten

# * Dense

#

# The special thing about encoding networks is that input preprocessing is applied. Input preprocessing is possible via `preprocessing_layers` and `preprocessing_combiner` layers. Each of these can be specified as a nested structure. If the `preprocessing_layers` nest is shallower than `input_tensor_spec`, then the layers will get the subnests. For example, if:

#

# ```

# input_tensor_spec = ([TensorSpec(3)] * 2, [TensorSpec(3)] * 5)

# preprocessing_layers = (Layer1(), Layer2())

# ```

#

# then preprocessing will call:

#

# ```

# preprocessed = [preprocessing_layers[0](observations[0]),

#                 preprocessing_layers[1](observations[1])]

# ```

#

# However if

#

# ```

# preprocessing_layers = ([Layer1() for _ in range(2)],

# [Layer2() for _ in range(5)])

# ```

#

# then preprocessing will call:

#

# ```python

# preprocessed = [

# layer(obs) for layer, obs in zip(flatten(preprocessing_layers),

# flatten(observations))

# ]

# ```
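
#

# The difference between the two cases can be sketched in plain Python. This is a simplified illustration, not the TF-Agents implementation: the `flatten`, `preprocess_shallow` and `preprocess_deep` helpers are hypothetical, observations are plain numbers, and the "layers" are ordinary functions.

```python
def flatten(nest):
    """Flatten arbitrarily nested lists/tuples into a flat list of leaves."""
    if isinstance(nest, (list, tuple)):
        return [leaf for item in nest for leaf in flatten(item)]
    return [nest]

def preprocess_shallow(layers, observations):
    """Shallow nest: each layer receives the whole sub-nest at its position."""
    return [layer(obs) for layer, obs in zip(layers, observations)]

def preprocess_deep(layers, observations):
    """Matching nest: layers and observations are paired leaf by leaf."""
    return [layer(obs)
            for layer, obs in zip(flatten(layers), flatten(observations))]

# Hypothetical stand-ins for a 2-element and a 5-element observation group.
observations = ([1, 2], [3, 4, 5, 6, 7])

# Two "layers", one per group: each sees its whole sub-nest.
shallow = preprocess_shallow((len, sum), observations)

# One "layer" per leaf: each sees a single observation.
double = lambda x: 2 * x
negate = lambda x: -x
deep = preprocess_deep(([double] * 2, [negate] * 5), observations)
```

# In the shallow case two outputs are produced (one per group); in the deep case there are seven (one per leaf).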

#

# + [markdown] id="RP3H1bw0ykro" colab_type="text"

# ## Custom Networks

#

# To create your own networks you will only have to override the `__init__` and `call` methods. Let's create a custom network using what we learned about `EncodingNetworks` to create an ActorNetwork that takes observations which contain an image and a vector.

#

# + id="Zp0TjAJhYo4s" colab_type="code" colab={}

class ActorNetwork(network.Network):

  def __init__(self,
               observation_spec,
               action_spec,
               preprocessing_layers=None,
               preprocessing_combiner=None,
               conv_layer_params=None,
               fc_layer_params=(75, 40),
               dropout_layer_params=None,
               activation_fn=tf.keras.activations.relu,
               enable_last_layer_zero_initializer=False,
               name='ActorNetwork'):
    super(ActorNetwork, self).__init__(
        input_tensor_spec=observation_spec, state_spec=(), name=name)

    # For simplicity we will only support a single action float output.
    self._action_spec = action_spec
    flat_action_spec = tf.nest.flatten(action_spec)
    if len(flat_action_spec) > 1:
      raise ValueError('Only a single action is supported by this network')
    self._single_action_spec = flat_action_spec[0]
    if self._single_action_spec.dtype not in [tf.float32, tf.float64]:
      raise ValueError('Only float actions are supported by this network.')

    kernel_initializer = tf.keras.initializers.VarianceScaling(
        scale=1. / 3., mode='fan_in', distribution='uniform')
    self._encoder = encoding_network.EncodingNetwork(
        observation_spec,
        preprocessing_layers=preprocessing_layers,
        preprocessing_combiner=preprocessing_combiner,
        conv_layer_params=conv_layer_params,
        fc_layer_params=fc_layer_params,
        dropout_layer_params=dropout_layer_params,
        activation_fn=activation_fn,
        kernel_initializer=kernel_initializer,
        batch_squash=False)

    initializer = tf.keras.initializers.RandomUniform(
        minval=-0.003, maxval=0.003)

    # tanh keeps the raw output in [-1, 1] before it is scaled to the spec.
    self._action_projection_layer = tf.keras.layers.Dense(
        flat_action_spec[0].shape.num_elements(),
        activation=tf.keras.activations.tanh,
        kernel_initializer=initializer,
        name='action')

  def call(self, observations, step_type=(), network_state=()):
    outer_rank = nest_utils.get_outer_rank(observations, self.input_tensor_spec)
    # We use batch_squash here in case the observations have a time sequence
    # component.
    batch_squash = utils.BatchSquash(outer_rank)
    observations = tf.nest.map_structure(batch_squash.flatten, observations)

    state, network_state = self._encoder(
        observations, step_type=step_type, network_state=network_state)
    actions = self._action_projection_layer(state)
    actions = common_utils.scale_to_spec(actions, self._single_action_spec)
    actions = batch_squash.unflatten(actions)
    return tf.nest.pack_sequence_as(self._action_spec, [actions]), network_state
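
# The last steps of `call` squash the projection output with `tanh` and then rescale it to the bounds of the action spec. The sketch below illustrates that arithmetic in plain Python, assuming the rescaling is the usual affine map from [-1, 1] onto [minimum, maximum]; `scale_to_range` is a hypothetical helper written for this illustration, not the TF-Agents function.

```python
import math

def scale_to_range(x, minimum, maximum):
    """Affinely map x in [-1, 1] onto [minimum, maximum]."""
    mean = (maximum + minimum) / 2.0
    magnitude = (maximum - minimum) / 2.0
    return mean + magnitude * x

# Hypothetical pre-activation values of the projection layer.
raw_outputs = [-2.0, 0.0, 2.0]
squashed = [math.tanh(v) for v in raw_outputs]   # each value now in (-1, 1)

# Bounds matching the action spec used later in this notebook (0 to 10).
actions = [scale_to_range(s, 0.0, 10.0) for s in squashed]
```

# Whatever the encoder produces, the resulting actions stay inside the declared bounds, which is why the bounded `tanh` activation is paired with the rescaling step.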

# + [markdown] id="Fm-MbMMLYiZj" colab_type="text"

# Let's create a `RandomPyEnvironment` to generate structured observations and validate our implementation.

# + id="E2XoNuuD66s5" colab_type="code" colab={}

action_spec = array_spec.BoundedArraySpec((3,), np.float32, minimum=0, maximum=10)

observation_spec = {

'image': array_spec.BoundedArraySpec((16, 16, 3), np.float32, minimum=0,

maximum=255),

'vector': array_spec.BoundedArraySpec((5,), np.float32, minimum=-100,

maximum=100)}

random_env = random_py_environment.RandomPyEnvironment(observation_spec, action_spec=action_spec)

# Convert the environment to a TFEnv to generate tensors.

tf_env = tf_py_environment.TFPyEnvironment(random_env)

# + [markdown] id="LM3uDTD7TNVx" colab_type="text"

# Since we've defined the observations to be a dict we need to create preprocessing layers to handle these.

# + id="r9U6JVevTAJw" colab_type="code" colab={}

preprocessing_layers = {

'image': tf.keras.models.Sequential([tf.keras.layers.Conv2D(8, 4),

tf.keras.layers.Flatten()]),

'vector': tf.keras.layers.Dense(5)

}

preprocessing_combiner = tf.keras.layers.Concatenate(axis=-1)

actor = ActorNetwork(tf_env.observation_spec(),

tf_env.action_spec(),

preprocessing_layers=preprocessing_layers,

preprocessing_combiner=preprocessing_combiner)

# + [markdown] id="mM9qedlwc41U" colab_type="text"

# Now that we have the actor network we can process observations from the environment.

# + id="JOkkeu7vXoei" colab_type="code" colab={}

time_step = tf_env.reset()

actor(time_step.observation, time_step.step_type)

# + [markdown] id="ALGxaQLWc9GI" colab_type="text"

# This same strategy can be used to customize any of the main networks used by the agents. You can define whatever preprocessing you need and connect it to the rest of the network. As you define your own custom networks, make sure the output layer definitions of the network match.

| tf_agents/colabs/8_networks_tutorial.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Custom Types

#

# Often, the behavior for a field needs to be customized to support a particular shape or validation method that ParamTools does not support out of the box. In this case, you may use the `register_custom_type` function to add your new `type` to the ParamTools type registry. Each `type` has a corresponding `field` that is used for serialization and deserialization. ParamTools will then use this `field` any time it is handling a `value`, `label`, or `member` that is of this `type`.

#

# ParamTools is built on top of [`marshmallow`](https://github.com/marshmallow-code/marshmallow), a general purpose validation library. This means that you must implement a custom `marshmallow` field to go along with your new type. Please refer to the `marshmallow` [docs](https://marshmallow.readthedocs.io/en/stable/) if you have questions about the use of `marshmallow` in the examples below.

#

#

# ## 32 Bit Integer Example

#

# ParamTools's default integer field uses NumPy's `int64` type. This example shows you how to define an `int32` type and reference it in your `defaults`.

#

# First, let's define the Marshmallow class:

#

# +

import marshmallow as ma

import numpy as np

class Int32(ma.fields.Field):
    """
    A custom type for np.int32.
    https://numpy.org/devdocs/reference/arrays.dtypes.html
    """
    # minor detail that makes this play nice with array_first
    np_type = np.int32

    def _serialize(self, value, *args, **kwargs):
        """Convert np.int32 to basic, serializable Python int."""
        return value.tolist()

    def _deserialize(self, value, *args, **kwargs):
        """Cast value from JSON to NumPy Int32."""
        converted = np.int32(value)
        return converted

# -

# Now, reference it in our defaults JSON/dict object:

#

# +

import paramtools as pt

# add int32 type to the paramtools type registry

pt.register_custom_type("int32", Int32())

class Params(pt.Parameters):
    defaults = {
        "small_int": {
            "title": "Small integer",
            "description": "Demonstrate how to define a custom type",
            "type": "int32",
            "value": 2
        }
    }

params = Params(array_first=True)

print(f"value: {params.small_int}, type: {type(params.small_int)}")

# -

# One problem with this is that we could run into some deserialization issues. Due to integer overflow, our deserialized result is not the number that we passed in--it's negative!

#

params.adjust(dict(

# this number wasn't chosen randomly.

small_int=2147483647 + 1

))
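
# The wraparound behind that negative number can be reproduced with the standard library alone. This is a sketch of two's-complement overflow, not of what ParamTools or NumPy do internally; `wrap_int32` is a hypothetical helper, and whether `np.int32` wraps silently, warns, or raises for an out-of-range Python int depends on the NumPy version.

```python
import ctypes

INT32_MIN, INT32_MAX = -2**31, 2**31 - 1

def wrap_int32(value):
    """Return the number that the low 32 bits of value encode in two's complement."""
    return (value - INT32_MIN) % 2**32 + INT32_MIN

# One past the largest 32-bit integer wraps to the smallest one.
overflowed = wrap_int32(INT32_MAX + 1)

# ctypes performs the same unchecked truncation.
same_via_ctypes = ctypes.c_int32(INT32_MAX + 1).value
```

# Values already inside the 32-bit range pass through unchanged, so only out-of-range inputs are silently altered.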

# ### Marshmallow Validator

#

# Fortunately, you can specify a custom validator with `marshmallow` or ParamTools. Making this work requires modifying the `_deserialize` method to check for overflow like this:

#

class Int32(ma.fields.Field):
    """
    A custom type for np.int32.
    https://numpy.org/devdocs/reference/arrays.dtypes.html
    """
    # minor detail that makes this play nice with array_first
    np_type = np.int32

    def _serialize(self, value, *args, **kwargs):
        """Convert np.int32 to basic Python int."""
        return value.tolist()

    def _deserialize(self, value, *args, **kwargs):
        """Cast value from JSON to NumPy Int32."""
        converted = np.int32(value)

        # check for overflow and let range validator
        # display the error message.
        if converted != int(value):
            return int(value)

        return converted
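
# The reason for returning the plain `int` on overflow is that a later range check then sees the original, out-of-range number instead of the wrapped one. A minimal standard-library sketch of that "cast, compare, then validate" flow follows; `wrap_int32`, `deserialize_int32` and `validate_range` are hypothetical stand-ins for the cast, the field method and the range validator, not marshmallow or ParamTools code.

```python
INT32_MIN, INT32_MAX = -2**31, 2**31 - 1

def wrap_int32(value):
    """Stand-in for an unchecked 32-bit cast (two's-complement wraparound)."""
    return (value - INT32_MIN) % 2**32 + INT32_MIN

def deserialize_int32(value):
    """Return the cast value if the cast is lossless, else the original int."""
    converted = wrap_int32(int(value))
    if converted != int(value):
        # Overflow detected: hand the raw value on so validation can reject it.
        return int(value)
    return converted

def validate_range(value, minimum=INT32_MIN, maximum=INT32_MAX):
    """Raise ValueError when value falls outside [minimum, maximum]."""
    if not minimum <= value <= maximum:
        raise ValueError(f"{value} is outside [{minimum}, {maximum}]")
    return value

ok = validate_range(deserialize_int32(2))

try:
    validate_range(deserialize_int32(INT32_MAX + 1))
    rejected = False
except ValueError:
    rejected = True
```

# Without the overflow check, the wrapped value would lie inside the allowed range and the validator would accept it.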

# Now, let's see how to use `marshmallow` to fix this problem:

#

# +

import marshmallow as ma

import paramtools as pt

# get the minimum and maximum values for 32 bit integers.

min_int32 = -2147483648 # = np.iinfo(np.int32).min

max_int32 = 2147483647 # = np.iinfo(np.int32).max

# add int32 type to the paramtools type registry

pt.register_custom_type(

"int32",

Int32(validate=[

ma.validate.Range(min=min_int32, max=max_int32)

])

)

class Params(pt.Parameters):
    defaults = {
        "small_int": {
            "title": "Small integer",
            "description": "Demonstrate how to define a custom type",
            "type": "int32",
            "value": 2
        }
    }

params = Params(array_first=True)

params.adjust(dict(

small_int=np.int64(max_int32) + 1

))

# -

# ### ParamTools Validator

#

# Finally, we will use ParamTools to solve this problem. We need to modify how we create our custom `marshmallow` field so that it's wrapped by ParamTools's `PartialField`. This makes it clear that your field still needs to be initialized, and that your custom field is able to receive validation information from the `defaults` configuration:

#

# +

import paramtools as pt

# add int32 type to the paramtools type registry

pt.register_custom_type(

"int32",

pt.PartialField(Int32)

)

class Params(pt.Parameters):
    defaults = {
        "small_int": {
            "title": "Small integer",
            "description": "Demonstrate how to define a custom type",
            "type": "int32",
            "value": 2,
            "validators": {
                "range": {"min": -2147483648, "max": 2147483647}
            }
        }
    }

params = Params(array_first=True)

params.adjust(dict(

small_int=2147483647 + 1

))

# -

| docs/api/custom-types.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [conda env:root] *

# language: python

# name: conda-root-py

# ---

# # Part 1: Data Ingestion

#

# This demo showcases financial fraud prevention, using the MLRun feature store to define complex features that help identify fraud. Fraud prevention is particularly challenging because it requires processing raw transactions and events in real time and responding quickly enough to block transactions before they occur.

#

# To address this, we create a development pipeline and a production pipeline. Both pipelines share the same feature engineering and model code, but serve data very differently. Furthermore, we automate the data and model monitoring process, identify drift and trigger retraining in a CI/CD pipeline. This process is described in the diagram below:

#

#

# The raw data is described as follows:

#

# | TRANSACTIONS    |                                                                | ║ | USER EVENTS     |                                         |
# |-----------------|----------------------------------------------------------------|---|-----------------|-----------------------------------------|
# | **age**         | age group value 0-6. Some values are marked as U for unknown   | ║ | **source**      | The party/entity related to the event   |
# | **gender**      | A character to define the gender                               | ║ | **event**       | event, such as login or password change |
# | **zipcodeOri**  | ZIP code of the person originating the transaction             | ║ | **timestamp**   | The date and time of the event          |
# | **zipMerchant** | ZIP code of the merchant receiving the transaction             | ║ |                 |                                         |
# | **category**    | category of the transaction (e.g., transportation, food, etc.) | ║ |                 |                                         |
# | **amount**      | the total amount of the transaction                            | ║ |                 |                                         |
# | **fraud**       | whether the transaction is fraudulent                          | ║ |                 |                                         |
# | **timestamp**   | the date and time in which the transaction took place          | ║ |                 |                                         |
# | **source**      | the ID of the party/entity performing the transaction          | ║ |                 |                                         |
# | **target**      | the ID of the party/entity receiving the transaction           | ║ |                 |                                         |
# | **device**      | the device ID used to perform the transaction                  | ║ |                 |                                         |

# This notebook introduces how to **Ingest** different data sources to the **Feature Store**.

#

# The following FeatureSets will be created:

# - **Transactions**: Monetary transactions between a source and a target.

# - **Events**: Account events such as account login or a password change.

# - **Label**: Fraud label for the data.

#

# By the end of this tutorial you’ll learn how to:

#

# - Create an ingestion pipeline for each data source.

# - Define preprocessing, aggregation and validation of the pipeline.

# - Run the pipeline locally within the notebook.

# - Launch a real-time function to ingest live data.

# - Schedule a cron to run the task when needed.

project_name = 'fraud-demo'

# +

import mlrun

# Initialize the MLRun project object

project = mlrun.get_or_create_project(project_name, context="./", user_project=True)

# -

# ## Step 1 - Fetch, Process and Ingest our datasets

# ## 1.1 - Transactions

# ### Transactions - Fetching

# + tags=["hide-cell"]

# Helper functions to adjust the timestamps of our data

# while keeping the order of the selected events and

# the relative distance from one event to the other

def date_adjustment(sample, data_max, new_max, old_data_period, new_data_period):
    '''
    Adjust a specific sample's date according to the original and new time periods
    '''
    sample_dates_scale = ((data_max - sample) / old_data_period)
    sample_delta = new_data_period * sample_dates_scale
    new_sample_ts = new_max - sample_delta
    return new_sample_ts

def adjust_data_timespan(dataframe, timestamp_col='timestamp', new_period='2d', new_max_date_str='now'):
    '''
    Adjust the dataframe timestamps to the new time period
    '''
    # Calculate old time period (read from the configured timestamp column)
    data_min = dataframe[timestamp_col].min()
    data_max = dataframe[timestamp_col].max()
    old_data_period = data_max-data_min

    # Set new time period
    new_time_period = pd.Timedelta(new_period)
    new_max = pd.Timestamp(new_max_date_str)
    new_min = new_max-new_time_period
    new_data_period = new_max-new_min

    # Apply the timestamp change
    df = dataframe.copy()
    df[timestamp_col] = df[timestamp_col].apply(lambda x: date_adjustment(x, data_max, new_max, old_data_period, new_data_period))
    return df
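
# The rescaling done by `date_adjustment` is a linear map that keeps each sample's relative distance from the latest timestamp. A small worked example with the standard library's `datetime` is sketched below; the dates are hypothetical and `rescale_timestamp` simply mirrors the arithmetic of the helper above.

```python
from datetime import datetime, timedelta

def rescale_timestamp(sample, data_max, new_max, old_period, new_period):
    """Map sample so its relative distance from the max timestamp is preserved."""
    fraction = (data_max - sample) / old_period   # 0.0 at data_max, 1.0 at data_min
    return new_max - new_period * fraction

# Hypothetical original data spanning 10 days, squeezed into the 2 days before new_max.
old_min = datetime(2020, 1, 1)
old_max = datetime(2020, 1, 11)
new_max = datetime(2024, 6, 3)
new_period = timedelta(days=2)
old_period = old_max - old_min

# A sample halfway through the old period lands halfway through the new one.
midpoint = rescale_timestamp(datetime(2020, 1, 6), old_max, new_max,
                             old_period, new_period)
earliest = rescale_timestamp(old_min, old_max, new_max, old_period, new_period)
latest = rescale_timestamp(old_max, old_max, new_max, old_period, new_period)
```

# The ordering of events is unchanged because the map is monotonic: earlier samples always stay earlier.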

# +

import pandas as pd

# Fetch the transactions dataset from the server

transactions_data = pd.read_csv('https://s3.wasabisys.com/iguazio/data/fraud-demo-mlrun-fs-docs/data.csv', parse_dates=['timestamp'], nrows=500)

# Adjust the samples timestamp for the past 2 days

transactions_data = adjust_data_timespan(transactions_data, new_period='2d')

# Preview

transactions_data.head(3)

# -

# ### Transactions - Create a FeatureSet and Preprocessing Pipeline

# Create the FeatureSet (data pipeline) definition for the **credit transaction processing** which describes the offline/online data transformations and aggregations.<br>

# The feature store will automatically add an offline `parquet` target and an online `NoSQL` target by using `set_targets()`.

#

# The data pipeline consists of:

#

# * **Extracting** the data components (hour, day of week)

# * **Mapping** the age values

# * **One hot encoding** for the transaction category and the gender

# * **Aggregating** the amount (avg, sum, count, max over 2/12/24 hour time windows)

# * **Aggregating** the transactions per category (over 14 days time windows)

# * **Writing** the results to **offline** (Parquet) and **online** (NoSQL) targets
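
#

# To make the windowed aggregations concrete, here is a plain-Python sketch of what "avg, sum, count, max over 2/12/24 hour windows" means for a single entity. It illustrates the semantics only: the `window_aggregates` helper and the sample events are hypothetical, and the feature store's own engine computes these incrementally rather than by rescanning the events.

```python
from datetime import datetime, timedelta

def window_aggregates(events, now, window):
    """Aggregate the amounts of events with a timestamp in (now - window, now]."""
    amounts = [amount for ts, amount in events if now - window < ts <= now]
    if not amounts:
        return {"count": 0, "sum": 0.0, "avg": None, "max": None}
    return {"count": len(amounts),
            "sum": sum(amounts),
            "avg": sum(amounts) / len(amounts),
            "max": max(amounts)}

# Hypothetical (timestamp, amount) transactions for one source.
now = datetime(2024, 1, 2, 12, 0)
events = [
    (now - timedelta(hours=30), 50.0),   # older than every window
    (now - timedelta(hours=10), 20.0),   # inside the 12h and 24h windows
    (now - timedelta(hours=1), 10.0),    # inside all three windows
]

per_window = {name: window_aggregates(events, now, timedelta(hours=hours))
              for name, hours in [("2h", 2), ("12h", 12), ("24h", 24)]}
```

# Each window yields its own set of features, so one `amount` column becomes twelve aggregated features (four operations times three windows).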

# Import MLRun's Feature Store

import mlrun.feature_store as fstore

from mlrun.feature_store.steps import OneHotEncoder, MapValues, DateExtractor

# Define the transactions FeatureSet

transaction_set = fstore.FeatureSet("transactions",

entities=[fstore.Entity("source")],

timestamp_key='timestamp',

description="transactions feature set")

# +

# Define and add value mapping

main_categories = ["es_transportation", "es_health", "es_otherservices",

"es_food", "es_hotelservices", "es_barsandrestaurants",

"es_tech", "es_sportsandtoys", "es_wellnessandbeauty",

"es_hyper", "es_fashion", "es_home", "es_contents",

"es_travel", "es_leisure"]

# One Hot Encode the newly defined mappings

one_hot_encoder_mapping = {'category': main_categories,

'gender': list(transactions_data.gender.unique())}

# Define the graph steps

transaction_set.graph\

.to(DateExtractor(parts = ['hour', 'day_of_week'], timestamp_col = 'timestamp'))\

.to(MapValues(mapping={'age': {'U': '0'}}, with_original_features=True))\

.to(OneHotEncoder(mapping=one_hot_encoder_mapping))

# Add aggregations for 2, 12, and 24 hour time windows

transaction_set.add_aggregation(name='amount',

column='amount',

operations=['avg','sum', 'count','max'],

windows=['2h', '12h', '24h'],

period='1h')

# Add the category aggregations over a 14 day window

for category in main_categories:
    transaction_set.add_aggregation(name=category,column=f'category_{category}',
                                    operations=['count'], windows=['14d'], period='1d')

# Add default (offline-parquet & online-nosql) targets

transaction_set.set_targets()

# Plot the pipeline so we can see the different steps

transaction_set.plot(rankdir="LR", with_targets=True)

# -

# ### Transactions - Ingestion

# +

# Ingest our transactions dataset through our defined pipeline

transactions_df = fstore.ingest(transaction_set, transactions_data,

infer_options=fstore.InferOptions.default())

transactions_df.head(3)

# -

# ## 1.2 - User Events

# ### User Events - Fetching

# +

# Fetch our user_events dataset from the server

user_events_data = pd.read_csv('https://s3.wasabisys.com/iguazio/data/fraud-demo-mlrun-fs-docs/events.csv',

index_col=0, quotechar="\'", parse_dates=['timestamp'], nrows=500)

# Adjust to the last 2 days to see the latest aggregations in our online feature vectors

user_events_data = adjust_data_timespan(user_events_data, new_period='2d')

# Preview

user_events_data.head(3)

# -

# ### User Events - Create a FeatureSet and Preprocessing Pipeline

#

# Now we will define the events feature set.

# This is a fairly straightforward pipeline in which we only one hot encode the event categories and save the data to the default targets.

user_events_set = fstore.FeatureSet("events",

entities=[fstore.Entity("source")],

timestamp_key='timestamp',

description="user events feature set")

# +

# Define and add value mapping

events_mapping = {'event': list(user_events_data.event.unique())}

# One Hot Encode

user_events_set.graph.to(OneHotEncoder(mapping=events_mapping))

# Add default (offline-parquet & online-nosql) targets

user_events_set.set_targets()

# Plot the pipeline so we can see the different steps

user_events_set.plot(rankdir="LR", with_targets=True)

# -

# ### User Events - Ingestion

# Ingestion of our newly created events feature set

events_df = fstore.ingest(user_events_set, user_events_data)

events_df.head(3)

# ## Step 2 - Create a labels dataset for model training

# ### Label Set - Create a FeatureSet

# This feature set contains the label for the fraud demo. It will be ingested directly to the default targets without any changes.

def create_labels(df):
    labels = df[['fraud','source','timestamp']].copy()
    labels = labels.rename(columns={"fraud": "label"})
    labels['timestamp'] = labels['timestamp'].astype("datetime64[ms]")
    labels['label'] = labels['label'].astype(int)
    labels.set_index('source', inplace=True)
    return labels

# +

# Define the "labels" feature set

labels_set = fstore.FeatureSet("labels",

entities=[fstore.Entity("source")],

timestamp_key='timestamp',

description="training labels",

engine="pandas")

labels_set.graph.to(name="create_labels", handler=create_labels)

# specify only Parquet (offline) target since it's not used for real-time

labels_set.set_targets(['parquet'], with_defaults=False)

labels_set.plot(with_targets=True)

# -

# ### Label Set - Ingestion

# Ingest the labels feature set

labels_df = fstore.ingest(labels_set, transactions_data)

labels_df.head(3)

# ## Step 3 - Deploy a real-time pipeline

#

# When dealing with real-time aggregation, it's important to be able to update these aggregations in real-time.

# For this purpose, we will create live serving functions that will update the online feature store of the `transactions` FeatureSet and `events` FeatureSet.

#

# Using MLRun's `serving` runtime, we create a Nuclio function loaded with our feature set's computational graph definition

# and an `HttpSource` to define the HTTP trigger.

#

# Notice that the implementation below does not require any rewrite of the pipeline logic.

# ## 3.1 - Transactions

# ### Transactions - Deploy our FeatureSet live endpoint

# Create iguazio v3io stream and transactions push API endpoint

transaction_stream = f'v3io:///projects/{project.name}/streams/transaction'

transaction_pusher = mlrun.datastore.get_stream_pusher(transaction_stream)

# +

# Define the source stream trigger (use v3io streams)

# we will define the `key` and `time` fields (extracted from the JSON message).

source = mlrun.datastore.sources.StreamSource(path=transaction_stream , key_field='source', time_field='timestamp')

# Deploy the transactions feature set's ingestion service over a real-time (Nuclio) serverless function

# you can use the run_config parameter to pass function/service specific configuration

transaction_set_endpoint = fstore.deploy_ingestion_service(featureset=transaction_set, source=source)

# -

# ### Transactions - Test the feature set HTTP endpoint

# By defining our `transactions` feature set we can now use MLRun and Storey to deploy it as a live endpoint, ready to ingest new data!

#

# Using MLRun's `serving` runtime, we will create a nuclio function loaded with our feature set's computational graph definition and an `HttpSource` to define the HTTP trigger.

# +

import requests

import json

# Select a sample from the dataset and serialize it to JSON

transaction_sample = json.loads(transactions_data.sample(1).to_json(orient='records'))[0]

transaction_sample['timestamp'] = str(pd.Timestamp.now())

transaction_sample

# -

# Post the sample to the ingestion endpoint

requests.post(transaction_set_endpoint, json=transaction_sample).text

# ## 3.2 - User Events

# ### User Events - Deploy our FeatureSet live endpoint

# Deploy the events feature set's ingestion service using the feature set and all the previously defined resources.

# Create iguazio v3io stream and events push API endpoint

events_stream = f'v3io:///projects/{project.name}/streams/events'

events_pusher = mlrun.datastore.get_stream_pusher(events_stream)

# +

# Define the source stream trigger (use v3io streams)

# we will define the `key` and `time` fields (extracted from the JSON message).

source = mlrun.datastore.sources.StreamSource(path=events_stream , key_field='source', time_field='timestamp')

# Deploy the events feature set's ingestion service over a real-time (Nuclio) serverless function

# you can use the run_config parameter to pass function/service specific configuration

events_set_endpoint = fstore.deploy_ingestion_service(featureset=user_events_set, source=source)

# -

# ### User Events - Test the feature set HTTP endpoint

# Select a sample from the events dataset and serialize it to JSON

user_events_sample = json.loads(user_events_data.sample(1).to_json(orient='records'))[0]

user_events_sample['timestamp'] = str(pd.Timestamp.now())

user_events_sample

# Post the sample to the ingestion endpoint

requests.post(events_set_endpoint, json=user_events_sample).text

# ## Done!

#

# You've completed Part 1 of the data-ingestion with the feature store.

# Proceed to [Part 2](02-create-training-model.ipynb) to learn how to train an ML model using the feature store data.

| docs/feature-store/end-to-end-demo/01-ingest-datasources.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

#

#

# <a href="https://hub.callysto.ca/jupyter/hub/user-redirect/git-pull?repo=https%3A%2F%2Fgithub.com%2Fcallysto%2Fcurriculum-notebooks&branch=master&subPath=Mathematics/Percentage/Percents.ipynb&depth=1" target="_parent"><img src="https://raw.githubusercontent.com/callysto/curriculum-notebooks/master/open-in-callysto-button.svg?sanitize=true" width="123" height="24" alt="Open in Callysto"/></a>

# **Run the cell below, this will add two buttons. Click on the "initialize" button before proceeding through the notebook**

# + tags=["hide-input"]

import uiButtons

# %uiButtons

# + tags=["hide-input"] language="html"

# <script src="https://d3js.org/d3.v3.min.js"></script>

# -

# # Percentages

# ## Introduction

# In this notebook we will discuss what percentages are and why this way of representing data is helpful in many different contexts. Common examples of percentages are sales tax or a mark for an assignment.

#

# The word percent comes from the Latin adverbial phrase *per centum* meaning “*by the hundred*”.

#

# For example, if the sales tax is $5\%$, this means that for every dollar you spend the tax adds $5$ cents to the total price of the purchase.

#

# A percentage simply represents a fraction (per hundred). For example, $90\%$ is the same as saying $\dfrac{90}{100}$. It is used to represent a ratio.

#

# What makes percentages so powerful is that they can represent any ratio.

#

# For example, getting $\dfrac{22}{25}$ on a math exam can be represented as $88\%$: $22$ is $88\%$ of $25$.

# ## How to Get a Percentage

# As mentioned in the introduction, a percentage is simply a fraction represented as a portion of 100.

#

# For this notebook we will only talk about percentages between 0% and 100%.

#

# This means the corresponding fraction will always be a value between $0$ and $1$.

#

# Let's look at our math exam mark example from above. The student correctly answered $22$ questions out of $25$, so the student received a grade of $\dfrac{22}{25}$.

#

# To represent this ratio as a percentage we first convert $\dfrac{22}{25}$ to its decimal representation (simply do the division in your calculator).

#

# $$

# \dfrac{22}{25} = 22 \div 25 = 0.88

# $$

#

# We are almost done: we now have the ratio represented as a value between 0 and 1. To finish getting the answer to our problem all we need to do is multiply this value by $100$ to get our percentage. $$0.88 \times 100 = 88\%$$

#

# Putting it all together we can say $22$ is $88\%$ of $25$.
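
#

# The same two steps (divide, then multiply by 100) can be written as a short Python function. This is just a sketch of the arithmetic above; the name `to_percentage` is made up for this example.

```python
def to_percentage(part, whole):
    """Express part out of whole as a percentage."""
    if whole <= 0:
        raise ValueError("whole must be a positive number")
    return 100 * part / whole

# The exam example from above: 22 correct answers out of 25 questions.
grade = to_percentage(22, 25)
print(f"22 out of 25 is {grade}%")
```

# You can try other marks by changing the two numbers passed to `to_percentage`.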

#

# Think of a grade you recently received (as a fraction) and convert it to a percentage. Once you think you have an answer you can use the widget below to check your answer.

#

# Simply enter the total marks of the test/assignment, then move the slider until you reach the grade you received.

# + tags=["hide-input"] language="html"

# <style>

# .main {

# font-family: 'Segoe UI', Tahoma, Geneva, Verdana, sans-serif;

# }

#

# .slider {

# width: 100px;

# }

#

# #maxVal {

# border:1px solid #cccccc;

# border-radius: 5px;

# width: 50px;

# }

# </style>

# <div class="main" style="border:2px solid black; width: 400px; padding: 20px;border-radius: 10px; margin: 0 auto; box-shadow: 3px 3px 12px #acacac">

# <div>

# <label for="maxVal">Enter the assignment/exam total marks</label>

# <input type="number" id="maxVal" value="100">

# </div>

# <div>

# <input type="range" min="0" max="100" value="0" class="slider" id="mySlider" style="width: 300px; margin-top: 20px;">

# </div>

# <h4 id="sliderVal">0</h4>

# </div>

#

# <script>

# var slider = document.getElementById('mySlider');

# var sliderVal = document.getElementById('sliderVal');

#

# slider.oninput = function () {

# var sliderMax = document.getElementById('maxVal').value;

# if(sliderMax === "" || isNaN(sliderMax) || sliderMax <= 0) {

# sliderMax = 100;

# document.getElementById('maxVal').value = 100;

# }

# d3.select('#mySlider').attr('max', sliderMax);

# sliderVal.textContent = "If you answered " + this.value + "/" + sliderMax + " questions correctly, your grade will be " + ((

# this.value / sliderMax) * 100).toPrecision(3) + "%";

# }

# </script>

# -

# ## Solving Problems Using Percentages

#

# Now that we understand what percentages mean and how to get them from fractions, let's look at solving problems using percentages. Start by watching the video below to get a basic understanding.

# + tags=["hide-input"] language="html"

# <div align="middle">

# <iframe id="percentVid" width="640" height="360" src="https://www.youtube.com/embed/rR95Cbcjzus?end=368" frameborder="0" allow="autoplay; encrypted-media" allowfullscreen style="box-shadow: 3px 3px 12px #ACACAC">

# </iframe>

# <p><a href="https://www.youtube.com/channel/UC4a-Gbdw7vOaccHmFo40b9g" target="_blank">Click here</a> for more videos by Math Antics</p>

# </div>

# <script>

# $(function() {

# var reachable = false;

# var myFrame = $('#percentVid');

# var videoSrc = myFrame.attr("src");

# myFrame.attr("src", videoSrc)

# .on('load', function(){reachable = true;});

# setTimeout(function() {

# if(!reachable) {

# var ifrm = myFrame[0];

# ifrm = (ifrm.contentWindow) ? ifrm.contentWindow : (ifrm.contentDocument.document) ? ifrm.contentDocument.document : ifrm.contentDocument;

# ifrm.document.open();

# ifrm.document.write('If the video does not start click <a href="' + videoSrc + '" target="_blank">here</a>');

# ifrm.document.close();

# }

# }, 2000)

# });

# </script>

# -

# As shown in the video, taking $25\%$ of $20$ "things" is the same as computing $\dfrac{25}{100}\times\dfrac{20}{1}=\dfrac{500}{100}=\dfrac{5}{1}=5$.
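
#

# The same fraction arithmetic can be checked with Python's standard `fractions` module. This is a small sketch; the helper name `percent_of` is an assumption introduced here for illustration.

```python
from fractions import Fraction


def percent_of(percent, quantity):
    """Return `percent` percent of `quantity` as an exact fraction."""
    # A percentage is a fraction out of 100, so 25% becomes 25/100.
    return Fraction(percent, 100) * Fraction(quantity)


result = percent_of(25, 20)
print(result)
```

# Working with `Fraction` mirrors the hand calculation: $\dfrac{25}{100}$ is reduced automatically and the product simplifies to $5$.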

#

# Let's do another example. Assume a retail store is having a weekend sale: everything in the store is $30\%$ off.

#

# Sam thinks this is a great time to buy new shoes, and the shoes she is interested in are regularly priced at $\$89.99$.<br>

# If Sam buys these shoes this weekend, how much will they cost? If the sales tax is $5\%$, what will the total price be?

#

# <img src="https://orig00.deviantart.net/5c3e/f/2016/211/b/d/converse_shoes_free_vector_by_superawesomevectors-dabxj2k.jpg" width="300">

# <img src="https://www.publicdomainpictures.net/pictures/170000/nahled/30-korting.jpg" width="300">

#

# Let's start by figuring out the sale price of the shoes before calculating the tax. To figure out the new price we must first take $30\%$ off the original price.

#

# The shoes are regularly priced at $\$89.99$ and the sale is for $30\%$ off, so the savings are:

#

# $$

# \$89.99\times 30\%=\$89.99\times\frac{30}{100}=\$26.997

# $$

#

# We can round $\$26.997$ to $\$27$.

#

# OK, we now know how much Sam will save on her new shoes, but let's not forget that the question asks how much her new shoes will cost, not how much she will save. All we need to do now is subtract the savings from the regular price to get the sale price:

#

# $$

# \$89.99- \$27=\$62.99

# $$

#

# Wow, what savings!

#

# Now for the second part of the question: what will the total price be if the tax is $5\%$?

#

# We must now figure out what $5\%$ of $\$62.99$ is:

#

# $$

# \$62.99\times5\%=\$62.99\times\frac{5}{100}=\$3.1495\approx\$3.15

# $$

#

# Now we know that Sam will need to pay $\$3.15$ in tax on her new shoes, so the final price is:

#

# $$

# \$62.99+\$3.15=\$66.14

# $$

#

# A shortcut for finding the total price including sales tax is to add $1$ to the tax ratio and multiply the price by the result. Let's see how this works:

#

# $$

# \$62.99\times\left(\frac{5}{100}+1\right)=\$62.99\times1.05\approx\$66.14

# $$

#

# You can use this trick to quickly figure out a price after tax.
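
#

# The whole shoe example can be retraced in a few lines of Python. This is a sketch under stated assumptions: the function name `sale_summary` is made up for this illustration, and amounts are rounded to the nearest cent at each step, as in the hand calculation above.

```python
def sale_summary(price, discount_percent, tax_percent):
    """Retrace the worked example, rounding to cents at each step."""
    savings = round(price * discount_percent / 100, 2)
    sale_price = round(price - savings, 2)
    tax = round(sale_price * tax_percent / 100, 2)
    total = round(sale_price + tax, 2)
    # Shortcut: multiply the sale price by (1 + tax ratio).
    shortcut_total = round(sale_price * (1 + tax_percent / 100), 2)
    return savings, sale_price, tax, total, shortcut_total


savings, sale_price, tax, total, shortcut_total = sale_summary(89.99, 30, 5)
print(f"Savings:    ${savings:.2f}")
print(f"Sale price: ${sale_price:.2f}")
print(f"Tax:        ${tax:.2f}")
print(f"Total:      ${total:.2f} (shortcut: ${shortcut_total:.2f})")
```

# For these numbers both routes give $\$66.14$. Because of rounding, the step-by-step total and the shortcut can occasionally differ by a cent for other prices; for real money calculations Python's `decimal` module would be a more careful choice than floating-point numbers.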

# ## Multiplying Percentages Together

# Multiplying two or more percentages together is probably not something you would encounter often but it is easy to do if you remember that percentages are really fractions.

#

# Since a percentage is simply a different way to represent a fraction, the rules for multiplying percentages are the same. Recall that multiplying two fractions together is the same as taking *a fraction of a fraction*. For example, $\dfrac{1}{2}\times\dfrac{1}{2}$ is the same as saying $\dfrac{1}{2}$ of $\dfrac{1}{2}$.

#

# Therefore if we write $50\%\times 20\%$ we really mean $50\%$ of $20\%$.

#

# The simplest approach is to first convert each percentage into its decimal representation (divide the number by $100$), so

#

# $$

# 50\%=\dfrac{50}{100}=0.50\quad\text{and}\quad 20\%=\dfrac{20}{100}=0.20

# $$

#

# Now that we have each percentage in its decimal representation, we simply multiply them together:

#

# $$

# 0.50\times0.20=0.10

# $$

#

# Again, to convert this decimal to a percentage we multiply by $100$:

#

# $$

# 0.10\times100=10\%

# $$

#

# Putting this into words we get: *$50\%$ of $20\%$ is $10\%$ (one half of $20\%$ is $10\%$)*.
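
#

# The steps above can be condensed into a short Python sketch. The function name `percent_of_percent` is an assumption introduced here for illustration.

```python
def percent_of_percent(first, second):
    """Return `first` percent of `second` percent, as a percentage."""
    # Convert both to decimals, multiply, then convert back:
    # (first / 100) * (second / 100) * 100 simplifies to first * second / 100.
    return first * second / 100


print(percent_of_percent(50, 20))  # 10.0
```

# Here `percent_of_percent(50, 20)` confirms that $50\%$ of $20\%$ is $10\%$.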

# ## Sports Example

#

# As we know, statistics play a huge part in sports. Keeping track of a team's wins/losses or how many points a player has are integral parts of today's professional sports. Some of these stats may require more interesting mathematical formulas to figure them out. One such example is a goalie’s save percentage in hockey.

#

# The save percentage is the ratio of the shots a goalie has saved to the shots he or she has faced. If you are familiar with the NHL, you will know this statistic as Sv\%; it is usually written as a decimal such as $0.939$. Multiply this number by $100$ to get it in the familiar form, $93.9\%$: this particular goalie has saved $93.9\%$ of the shots he or she has faced.

#

# Below you will find a sport-like game. The objective is to score on your opponent and protect your own net. As you play, your Sv% and your opponent's Sv% are shown in real time below the game window. Play a round or two before we discuss how to get this value.

#

# _**How to play:** choose the winning score from the drop-down box, then click "Start". During the game, use your mouse to move your paddle up and down (inside the play area). Don't let the ball go in your net!_

# + tags=["hide-input"] language="html"

# <style>

# .mainBody {

# font-family: Arial, Helvetica, sans-serif;

# }

# #startBtn {

# background-color: cornflowerblue;

# border: none;

# border-radius: 3px;

# font-size: 14px;

# color: white;

# font-weight: bold;

# padding: 2px 8px;

# text-transform: uppercase;

# }

# </style>

# <div class="mainBody">

# <div style="padding-bottom: 10px;">

# <label for="winningScore">Winning Score: </label>

# <select name="Winning Score" id="winningScore">

# <option value="3">3</option>

# <option value="5">5</option>

# <option value="7">7</option>

# <option value="10">10</option>

# </select>

# <button type="button" id="startBtn">Start</button>

# </div>

# <canvas id="gameCanvas" width="600" height="350" style="border: solid 1px black"></canvas>

#

# <div>

# <ul>

# <li>Player's point save average: <output id="playerAvg"></output></li>

# <li>Computer's point save average: <output id="compAvg"></output></li>

# </ul>

# </div>