license: llama2

language:

- en

pipeline_tag: text-generation

inference: false

tags:

- pytorch

- storywriting

- finetuned

- not-for-all-audiences

base_model: KoboldAI/LLaMA2-13B-Psyfighter2

model_type: llama

prompt_template: >

### Instruction:

Below is an instruction that describes a task. Write a response that

appropriately completes the request.

### Input:

{prompt}

### Response:

Model Card for Psyfighter2-13B-vore

This model is a version of KoboldAI/LLaMA2-13B-Psyfighter2 finetuned to better understand vore context. The primary purpose of this model is to be a storywriting assistant, a conversational model in a chat, and an interactive choose-your-own-adventure text game.

The model has been specifically trained to perform in Kobold AI Adventure Mode, the second-person choose-your-own-adventure story format.

This is the FP16-precision version of the model for merging and fine-tuning. For using the model, please see the quantized version and the instructions here: SnakyMcSnekFace/Psyfighter2-13B-vore-GGUF

Model Details

The model behaves similarly to KoboldAI/LLaMA2-13B-Psyfighter2, which it was derived from. Please see the README.md here to learn more.

Updates

- 14/09/2024 - aligned the model for better Adventure Mode flow and improved narrative quality

- 09/06/2024 - fine-tuned the model to follow Kobold AI Adventure Mode format

- 02/06/2024 - fixed errors in training and merging, significantly improving the overall prose quality

- 25/05/2024 - updated training process, making the model more coherent and improving the writing quality

- 13/04/2024 - uploaded the first version of the model

Bias, Risks, and Limitations

By design, this model has a strong vorny bias. It's not intended for use by anyone below 18 years old.

Training Details

The model was fine-tuned using a rank-stabilized QLoRA adapter. Training was performed using the Unsloth AI library on Ubuntu 22.04.4 LTS with CUDA 12.1 and PyTorch 2.3.0.

The total training time on an NVIDIA GeForce RTX 4060 Ti is about 26 hours.

After training, the adapter weights were merged into the dequantized model as described in ChrisHayduk's GitHub gist.

The quantized version of the model was prepared using llama.cpp.

QLoRA adapter configuration

- Rank: 64

- Alpha: 16

- Dropout rate: 0.1

- Target weights:

["q_proj", "k_proj", "v_proj", "o_proj", "gate_proj", "up_proj", "down_proj"], use_rslora=True

Targeting all projections for QLoRA adapter resulted in the smallest loss compared to other combinations, even compared to larger rank adapters.
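For context on what `use_rslora=True` changes: rank-stabilized LoRA scales the adapter update by alpha / sqrt(rank) instead of alpha / rank. The following is a minimal sketch of the resulting scaling factor for the rank and alpha listed above. The function name `lora_scaling` is illustrative and not part of any library.

```python
import math


def lora_scaling(rank: int, alpha: float, use_rslora: bool) -> float:
    """Return the multiplier applied to the low-rank update (illustrative helper)."""
    if use_rslora:
        # Rank-stabilized LoRA: alpha / sqrt(rank)
        return alpha / math.sqrt(rank)
    # Standard LoRA: alpha / rank
    return alpha / rank


# Values taken from the adapter configuration above (rank 64, alpha 16).
standard = lora_scaling(rank=64, alpha=16, use_rslora=False)
stabilized = lora_scaling(rank=64, alpha=16, use_rslora=True)
print(standard, stabilized)
```

With rank 64 and alpha 16, the rank-stabilized variant applies a noticeably larger multiplier than standard LoRA would, which is worth keeping in mind when comparing learning rates between the two.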

Domain adaptation

The initial training phase consists of fine-tuning the adapter on ~55 MiB of free-form text containing stories focused on the vore theme. The text is broken into paragraphs, which are aggregated into training samples of 4096 tokens or fewer, without crossing document boundaries. Each sample starts with a BOS token (with its label set to -100) and ends with an EOS token. The paragraph breaks are normalized to always consist of two line breaks.
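The packing step described above could look roughly like the sketch below. It is not the actual training code: `pack_document`, the greedy strategy, the truncation of over-long paragraphs, and the character-level toy tokenizer are all assumptions made for illustration.

```python
from typing import Callable, Dict, List

IGNORE_INDEX = -100  # label value that the loss function skips


def pack_document(
    paragraphs: List[str],
    tokenize: Callable[[str], List[int]],
    bos_id: int,
    eos_id: int,
    max_len: int = 4096,
) -> List[Dict[str, List[int]]]:
    """Greedily pack the paragraphs of ONE document into samples of <= max_len tokens."""
    budget = max_len - 2  # reserve room for BOS and EOS
    samples: List[Dict[str, List[int]]] = []
    chunk: List[str] = []

    def flush() -> None:
        if not chunk:
            return
        # Paragraphs are joined with exactly two line breaks.
        # A single paragraph longer than the budget is truncated (assumption).
        body = tokenize("\n\n".join(chunk))[:budget]
        ids = [bos_id] + body + [eos_id]
        labels = [IGNORE_INDEX] + ids[1:]  # no loss on the BOS token
        samples.append({"input_ids": ids, "labels": labels})
        chunk.clear()

    for para in paragraphs:
        candidate = "\n\n".join(chunk + [para])
        if chunk and len(tokenize(candidate)) > budget:
            flush()  # start a new sample before adding this paragraph
        chunk.append(para)
    flush()
    return samples


# Toy character-level tokenizer, a stand-in for the real model tokenizer.
def toy_tokenize(text: str) -> List[int]:
    return [ord(c) for c in text]


samples = pack_document(["aaaa", "bbbb", "cc"], toy_tokenize, bos_id=1, eos_id=2, max_len=12)
print(len(samples), [len(s["input_ids"]) for s in samples])
```

Calling the function once per document is what keeps samples from crossing document boundaries.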

Dataset pre-processing

The raw-text stories in the dataset were edited as follows:

- titles, foreword, tags, and anything not comprising the text of the story are removed

- non-ascii characters and chapter separators are removed

- stories mentioning underage personas in any context are deleted

- names of private characters are randomized
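The mechanical parts of this clean-up (dropping non-ASCII characters and chapter separators, then normalizing paragraph breaks) can be sketched as follows. How these steps were actually applied is not stated here, so the function `clean_story` and the separator pattern are hypothetical.

```python
import re

# Hypothetical pattern: a line consisting only of 3+ separator characters, e.g. "***" or "-----".
SEPARATOR_LINE = re.compile(r"\s*[*\-=_~#]{3,}\s*")


def clean_story(text: str) -> str:
    """Strip non-ASCII characters and separator lines, normalize paragraph breaks."""
    # Drop every character outside the ASCII range.
    text = text.encode("ascii", errors="ignore").decode("ascii")
    # Remove lines that look like chapter separators.
    lines = [ln for ln in text.splitlines() if not SEPARATOR_LINE.fullmatch(ln)]
    text = "\n".join(lines).strip()
    # Collapse any run of blank lines into exactly two line breaks.
    return re.sub(r"\n\s*\n+", "\n\n", text)


cleaned = clean_story("Caf\u00e9 opens.\n\n\n***\n\nNext part.\n")
print(repr(cleaned))
```

Removing titles, filtering stories, and randomizing names require judgment about the content and are not covered by this sketch.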

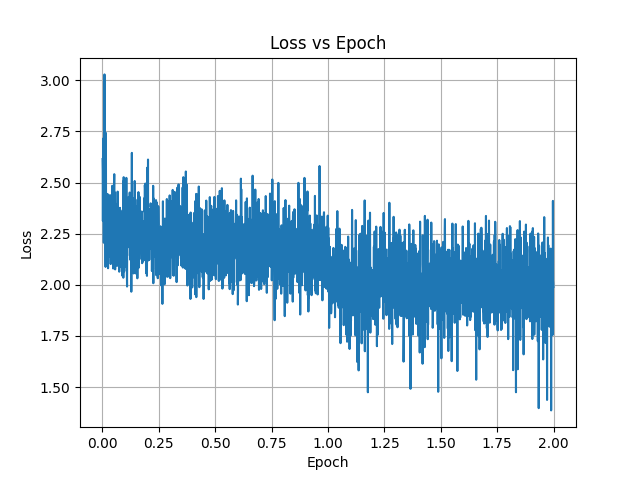

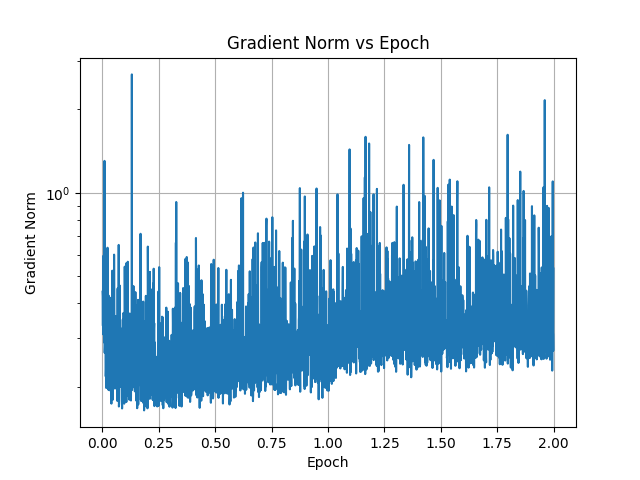

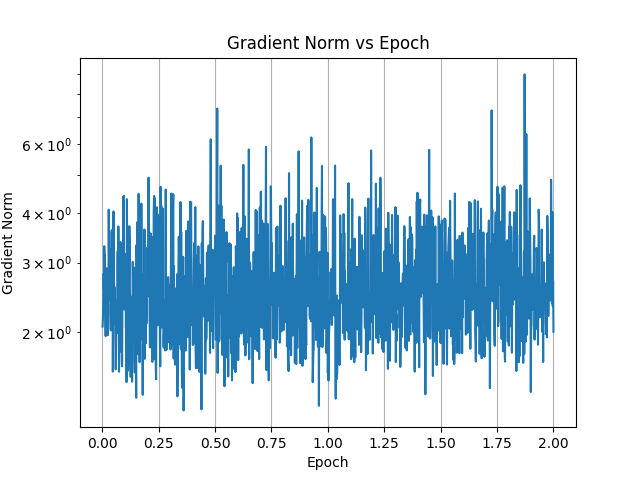

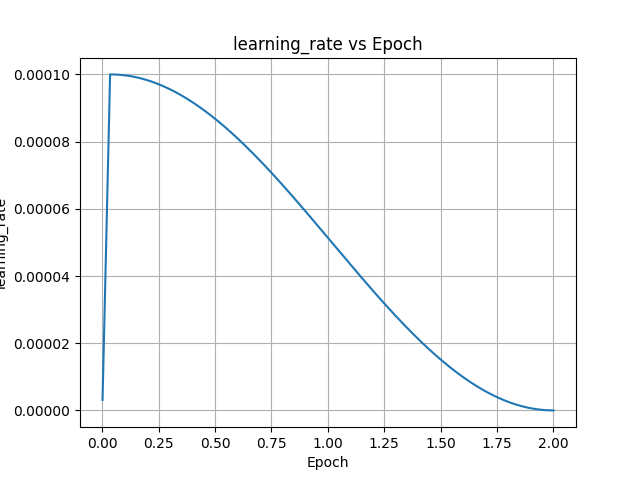

Training parameters

- Max. sequence length: 4096 tokens

- Samples per epoch: 5085

- Number of epochs: 2

- Learning rate: 1e-4

- Warmup: 64 steps

- LR Schedule: cosine

- Batch size: 1

- Gradient accumulation steps: 1

The training takes ~24 hours on NVIDIA GeForce RTX 4060 Ti.
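To make the schedule concrete, the sketch below computes the number of optimizer steps implied by the parameters above and the learning rate at a given step. It assumes linear warmup followed by a single half-cosine decay to zero, which is a common trainer default. The exact schedule implementation used for this model is not specified, and `lr_at_step` is an illustrative name.

```python
import math


def lr_at_step(step: int, total_steps: int, peak_lr: float = 1e-4, warmup_steps: int = 64) -> float:
    """Linear warmup to peak_lr, then cosine decay to zero (assumed schedule shape)."""
    if step < warmup_steps:
        return peak_lr * step / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return peak_lr * 0.5 * (1.0 + math.cos(math.pi * progress))


# Values from the training parameters above.
samples_per_epoch, epochs, batch_size, grad_accum = 5085, 2, 1, 1
total_steps = samples_per_epoch * epochs // (batch_size * grad_accum)

print(total_steps)
print(lr_at_step(0, total_steps), lr_at_step(64, total_steps), lr_at_step(total_steps, total_steps))
```

With a batch size of 1 and no gradient accumulation, every sample is its own optimizer step, so the 64 warmup steps cover well under 1% of training.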

Plots

Adventure mode SFT

The model is further trained on a private dataset of adventure transcripts in the Kobold AI adventure format, for example:

As you venture deeper into the damp cave, you come across a lone goblin. The vile creature mumbles something to itself as it stares at the glowing text on a cave wall. It doesn't notice your approach.

> You sneak behind the goblin and hit it with the sword.

The dataset is generated by running adventure playthroughs with the model and editing its output as necessary to create a cohesive, evocative narrative. There are a total of 657 player turns in the dataset.

The model is trained on completions only; the loss for the user input tokens is ignored by setting their labels to -100. The prompt is truncated on the left to a maximum length of 2048 tokens.
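Completion-only training with left truncation of the prompt can be sketched as below. This is an illustration under assumptions rather than the actual training code: `build_sft_sample` is a hypothetical helper, special tokens are omitted, and the 4096-token cap on the full sequence is taken from the training parameters listed for this stage.

```python
from typing import Dict, List

IGNORE_INDEX = -100  # label value that the loss function skips


def build_sft_sample(
    prompt_ids: List[int],
    completion_ids: List[int],
    max_prompt_len: int = 2048,
    max_len: int = 4096,
) -> Dict[str, List[int]]:
    """Mask the prompt in the labels so only the completion contributes to the loss."""
    # Truncate on the left: keep the most recent part of the transcript.
    prompt_ids = prompt_ids[-max_prompt_len:]
    # Trim the completion on the right if the whole sequence would be too long.
    completion_ids = completion_ids[: max_len - len(prompt_ids)]
    input_ids = prompt_ids + completion_ids
    labels = [IGNORE_INDEX] * len(prompt_ids) + completion_ids
    return {"input_ids": input_ids, "labels": labels}


# Toy token ids: a 3000-token prompt and a 3-token completion.
sample = build_sft_sample(list(range(3000)), [7, 8, 9])
print(len(sample["input_ids"]), sample["input_ids"][0], sample["labels"][-3:])
```

Truncating on the left keeps the latest story events and the player's turn, which matter most for writing the next response.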

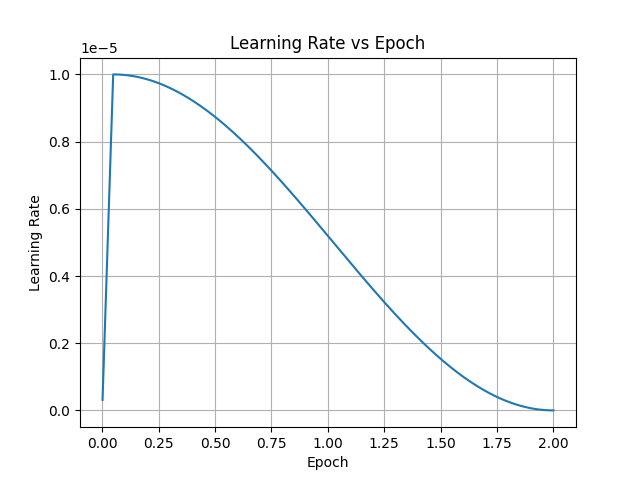

Training parameters

- Max. sequence length: 4096 tokens

- Samples per epoch: 657

- Number of epochs: 2

- Learning rate: 1e-5

- Warmup: 32 steps

- LR Schedule: cosine

- Batch size: 1

- Gradient accumulation steps: 1

The training takes ~150 minutes on NVIDIA GeForce RTX 4060 Ti.

Results

The fine-tuned model is able to understand the Kobold AI Adventure Format. It no longer attempts to generate the player's inputs starting with ">", and instead emits the EOS token, allowing the player to take their turn.

Without the context, the model tends to produce very short responses, 1-2 paragraphs at most. The non-player characters are passive and the model does not advance the narrative. This behavior is easily corrected by setting up the context in the instruct format:

### Instruction:

Text transcript of a never-ending adventure story, written by the AI assistant. AI assistant uses vivid and evocative language to create a well-written novel. Characters are proactive and take initiative. Think about what goals the characters of the story have and write what they do to achieve those goals.

### Input:

<< transcript of the adventure + player's next turn >>

Write a few paragraphs that advance the plot of the story.

### Response:

(See instructions in SnakyMcSnekFace/Psyfighter2-13B-vore-GGUF for formatting the context in koboldcpp.)
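For use outside koboldcpp, the context above can be assembled with a small helper like the one below. The function name `build_context` and the exact whitespace between sections are assumptions; the instruction text is copied from the template above.

```python
INSTRUCTION = (
    "Text transcript of a never-ending adventure story, written by the AI assistant. "
    "AI assistant uses vivid and evocative language to create a well-written novel. "
    "Characters are proactive and take initiative. Think about what goals the characters "
    "of the story have and write what they do to achieve those goals."
)


def build_context(transcript: str, player_turn: str) -> str:
    """Wrap an adventure transcript and the player's next turn in the instruct format."""
    return (
        "### Instruction:\n"
        f"{INSTRUCTION}\n\n"
        "### Input:\n"
        f"{transcript.rstrip()}\n\n"
        f"> {player_turn.strip()}\n\n"
        "Write a few paragraphs that advance the plot of the story.\n\n"
        "### Response:\n"
    )


context = build_context(
    "As you venture deeper into the damp cave, you come across a lone goblin.",
    "You sneak behind the goblin and hit it with the sword.",
)
print(context)
```

The player's turn is prefixed with ">" to match the Adventure Mode transcript format the model was trained on.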

Setting or removing the instructions allows the model to generate accepted/rejected synthetic data samples for KTO. This data can then be used to further steer the model towards better storytelling in the Adventure Mode without the need for the specially-crafted context.

Plots

Preference alignment for Adventure Mode

Although the fine-tuned model understands the Adventure format, its output leaves much to be desired. The responses are often limited to a few sentences, and the narrative is lacking. To address this issue, the model is given prompts from the SFT dataset and asked to generate ~8 responses, which are manually categorized as accepted/rejected. The dataset contains 3696 samples, with 2231 accepted and 1465 rejected samples.

Dataset

Half of the samples were generated by this model, with prompts containing the adventure transcripts and player turns in raw format. The other samples were generated by the jebcarter/psyonic-cetacean-20B model fine-tuned on the same domain adaptation and SFT datasets, with the context using the instruct format described above. Those accounted for the majority of accepted samples. In addition, the accepted samples were punched up and errors were corrected as necessary with the aid of the fine-tuned jebcarter/psyonic-cetacean-20B model.
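A minimal sketch of how manually categorized responses might be turned into unpaired preference records is shown below. The `prompt` / `completion` / `label` field names follow the layout commonly used for KTO training data and should be treated as an assumption. `to_kto_records` is a hypothetical helper, and the example texts are placeholders, not samples from the dataset.

```python
from typing import Dict, List, Tuple, Union

Record = Dict[str, Union[str, bool]]


def to_kto_records(prompt: str, responses: List[Tuple[str, bool]]) -> List[Record]:
    """Turn (response_text, accepted) pairs for one prompt into unpaired preference records."""
    return [
        {"prompt": prompt, "completion": text, "label": bool(accepted)}
        for text, accepted in responses
    ]


# Placeholder data for illustration only.
records = to_kto_records(
    "> You sneak behind the goblin and hit it with the sword.\n",
    [
        ("The goblin whirls around at the last moment, raising a rusty dagger...", True),
        ("You hit the goblin.", False),
    ],
)
print(records)
```

Unlike paired preference methods, each record stands alone, so the numbers of accepted and rejected responses per prompt do not need to match.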

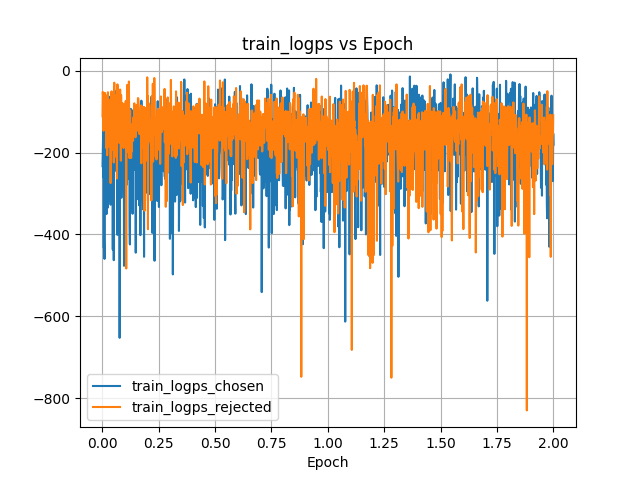

Training procedure

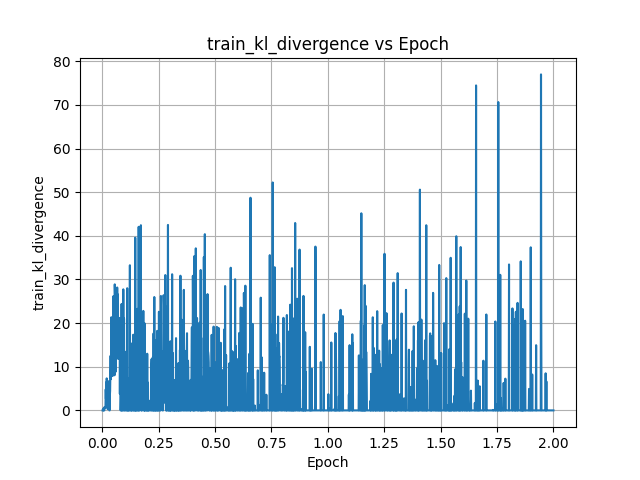

The KTO trainer from the Hugging Face TRL library was employed for performing preference alignment. The LoRA adapter from the previous training stages was merged into the model, and a new LoRA adapter was created for the KTO training. The quantized base model serves as a reference.

During the alignment, the model was encouraged to respect the player's actions and agency, construct a coherent narrative, and use evocative language to describe the world and the outcome of the player's actions.

QLoRA adapter configuration

- Rank: 16

- Alpha: 16

- Dropout rate: 0.0

- Target weights:

["q_proj", "k_proj", "v_proj", "o_proj", "gate_proj", "up_proj", "down_proj"], use_rslora=True

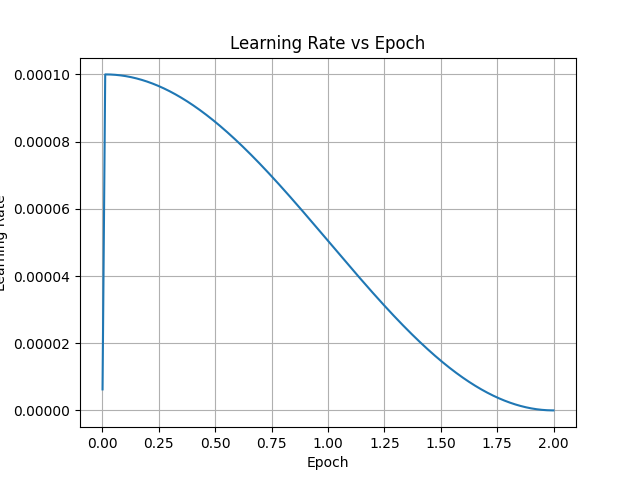

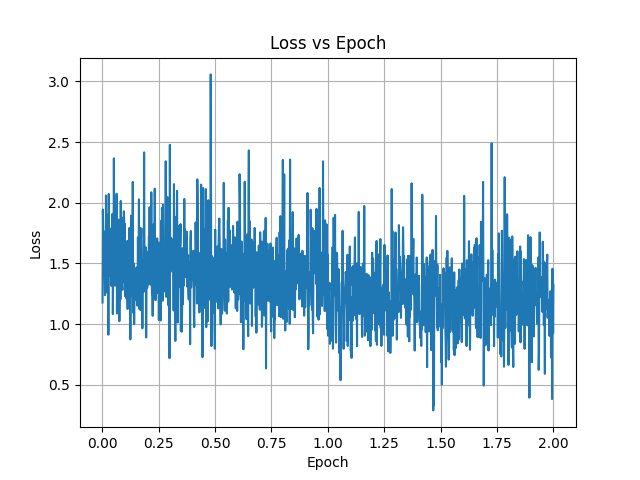

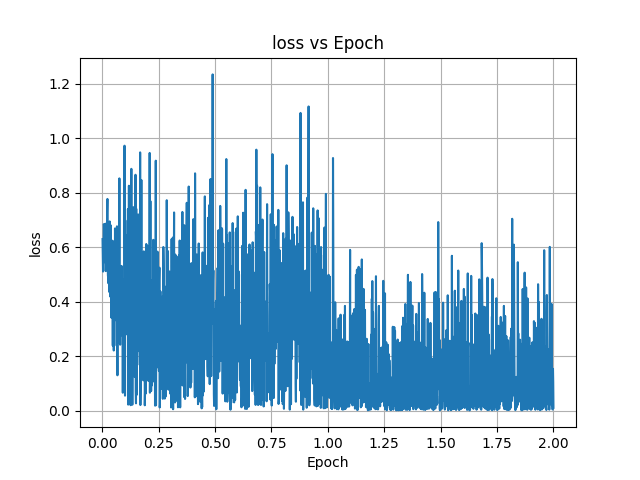

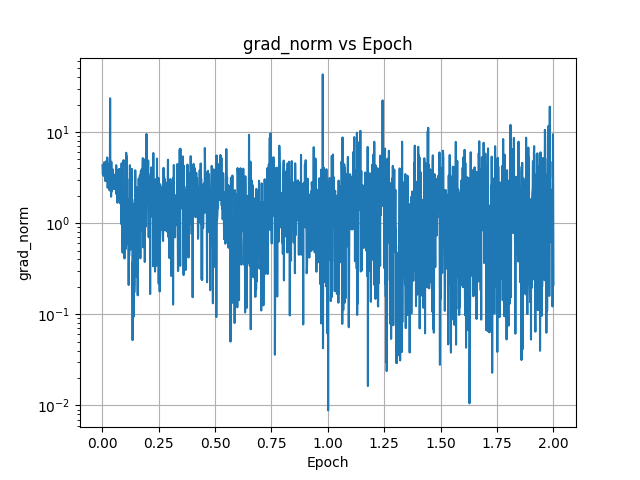

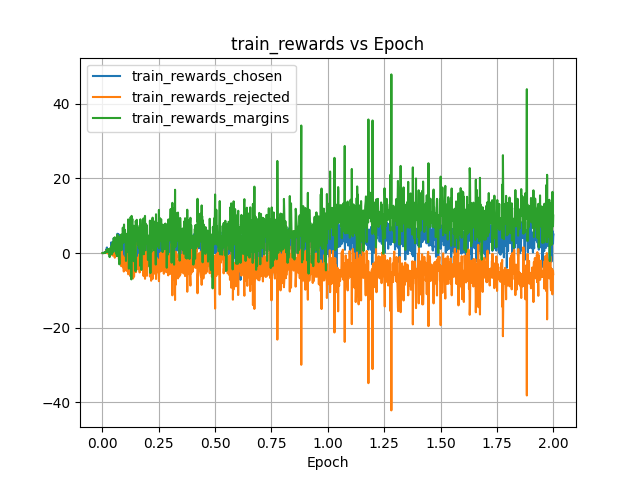

Training parameters

- Max. sequence length: 4096 tokens

- Max. prompt length: 3072

- Samples per epoch: 924

- Number of epochs: 2

- Learning rate: 1e-4

- Beta: 0.1

- Desirable weight: 1.0

- Undesirable weight: 1.52

- Warmup: 32 steps

- LR Schedule: cosine

- Batch size: 4

- Gradient accumulation steps: 1

- Gradient checkpointing: yes

The training takes ~20 hours on NVIDIA GeForce RTX 4060 Ti.
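Two of the values above appear to follow from the dataset size. The undesirable weight of 1.52 matches the ratio of accepted to rejected samples, which would balance the total weight of the two classes, and 924 matches the total sample count divided by the batch size. This is an inference from the numbers, not a stated derivation. The sketch below only checks the arithmetic.

```python
# Dataset counts from the preference alignment section.
accepted, rejected = 2231, 1465
batch_size = 4
desirable_weight = 1.0

# Weight that makes both classes contribute roughly equally to the loss.
undesirable_weight = round(accepted / rejected, 2)

# Total weighted "mass" of each class.
desirable_mass = desirable_weight * accepted
undesirable_mass = undesirable_weight * rejected

# Number of batches needed to see every sample once.
steps_per_epoch = (accepted + rejected) // batch_size

print(undesirable_weight, desirable_mass, undesirable_mass, steps_per_epoch)
```

With these weights, the two classes differ in total weight by well under 1%.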

Results

The model's performance in Adventure Mode has improved substantially. The writing has become more creative and engaging; the model now advances the story more consistently and NPCs are more likely to act on their own instead of remaining passive. As a side effect, the model's "positivity bias" has diminished, making the NPCs more willing to take actions against the player.

Plots

Future plans

To further improve the model's performance in the future, I am planning to expand the domain adaptation dataset by an order of magnitude by improving and automating the data gathering and management. I will also be preparing additional preference alignment datasets to further improve the model's ability to act as a quality Game Master in Adventure Mode.