language:
- en
tags:
- stable-diffusion
- stable-diffusion-diffusers
- text-to-image
- text-to-panoramic
model-index:
- name: ldm3d-pano
  results:
  - task:
      name: Latent Diffusion Model for 3D - Pano
      type: latent-diffusion-model-for-3D-pano
    dataset:
      name: LAION-400M
      type: laion/laion400m
    metrics:
    - name: FID
      type: FID
      value: 118.07
    - name: IS
      type: IS
      value: 4.687
    - name: CLIPsim
      type: CLIPsim
      value: 27.21
    - name: MARE
      type: MARE
      value: 1.54
    - name: ≤90%ile
      type: ≤90%ile
      value: 0.79
pipeline_tag: text-to-3d
license: creativeml-openrail-m

LDM3D-Pano model

The LDM3D-VR model suite was proposed in the paper LDM3D-VR: Latent Diffusion Model for 3D VR, authored by Gabriela Ben Melech Stan, Diana Wofk, Estelle Aflalo, Shao-Yen Tseng, Zhipeng Cai, Michael Paulitsch, and Vasudev Lal.

LDM3D-VR was accepted to the NeurIPS 2023 Workshop on Diffusion Models.

This new checkpoint, LDM3D-pano, extends the LDM3D-4c model to panoramic image generation.

Model details

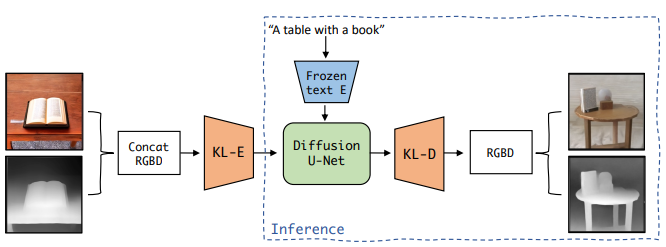

The abstract from the paper is the following: Latent diffusion models have proven to be state-of-the-art in the creation and manipulation of visual outputs. However, as far as we know, the generation of depth maps jointly with RGB is still limited. We introduce LDM3D-VR, a suite of diffusion models targeting virtual reality development that includes LDM3D-pano and LDM3D-SR. These models enable the generation of panoramic RGBD based on textual prompts and the upscaling of low-resolution inputs to high-resolution RGBD, respectively. Our models are fine-tuned from existing pretrained models on datasets containing panoramic/high-resolution RGB images, depth maps and captions. Both models are evaluated in comparison to existing related methods.

LDM3D overview taken from the LDM3D paper.

LDM3D overview taken from the LDM3D paper.

Usage

Here is how to use this model with PyTorch on both a CPU and GPU architecture:

from diffusers import StableDiffusionLDM3DPipeline

pipe = StableDiffusionLDM3DPipeline.from_pretrained("Intel/ldm3d-pano")

# On CPU

pipe.to("cpu")

# On GPU

pipe.to("cuda")

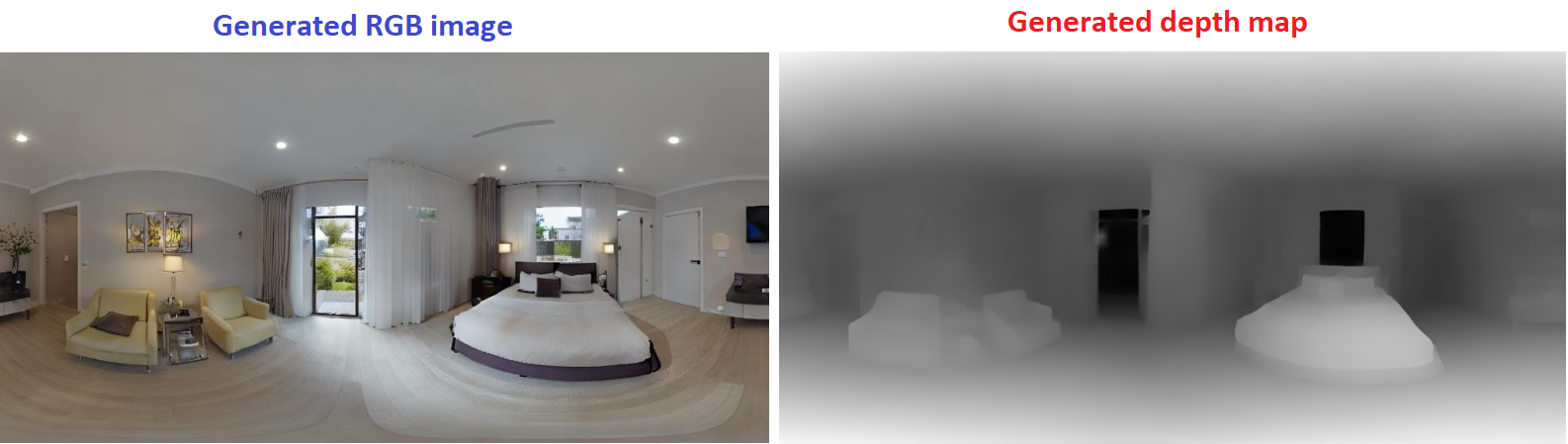

prompt = "360 view of a large bedroom"

name = "bedroom_pano"

output = pipe(

prompt,

width=1024,

height=512,

guidance_scale=7.0,

num_inference_steps=50,

)

rgb_image, depth_image = output.rgb, output.depth

rgb_image[0].save(name+"_ldm3d_rgb.jpg")

depth_image[0].save(name+"_ldm3d_depth.png")

This is the result:
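The 1024 x 512 output has the 2:1 aspect ratio typical of equirectangular panoramas. Assuming that projection (the card does not state it) and treating each depth value as a distance along the viewing ray in an arbitrary relative unit, a pixel can be lifted to a 3D point roughly as sketched below. The function name and the axis convention are hypothetical choices for illustration and are not part of the pipeline's API.

```python
import math


def equirect_pixel_to_point(u, v, depth, width=1024, height=512):
    """Unproject one equirectangular pixel to (x, y, z).

    Assumed convention (not taken from the model card): columns span
    longitude [-pi, pi), rows span latitude [pi/2, -pi/2], with x to the
    right, y up, and z forward. `depth` is the distance along the ray.
    """
    # Pixel centers sit at half-integer offsets from the image border
    lon = (u + 0.5) / width * 2.0 * math.pi - math.pi
    lat = math.pi / 2.0 - (v + 0.5) / height * math.pi
    x = depth * math.cos(lat) * math.sin(lon)
    y = depth * math.sin(lat)
    z = depth * math.cos(lat) * math.cos(lon)
    return x, y, z
```

Applying this to every pixel of the saved depth map, paired with the color at the same pixel of the RGB image, would give a colored point cloud whose scale is only as meaningful as the depth values themselves.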

Training data

The LDM3D model was fine-tuned on a dataset constructed from a subset of LAION-400M, a large-scale dataset of over 400 million image-caption pairs. An additional subset of LAION Aesthetics 6+, in the form of tuples (caption, 512 x 512 image, and depth map from DPT-BEiT-L-512), is used to fine-tune LDM3D-VR.

This checkpoint uses two panoramic-image datasets to further fine-tune LDM3D-4c:

- polyhaven: 585 images for the training set, 66 images for the validation set
- ihdri: 57 outdoor images for the training set, 7 outdoor images for the validation set

These datasets were augmented using Text2Light to create a dataset containing 13,852 training samples and 1,606 validation samples.

We generated the depth maps for these samples with DPT-large and the captions with BLIP-2.

Fine-tuning

We adopt a multi-stage fine-tuning procedure. We first fine-tune the refined version of the KL-autoencoder in LDM3D-4c. Subsequently, the U-Net backbone is fine-tuned based on Stable Diffusion (SD) v1.5. The U-Net is then further fine-tuned on our panoramic image dataset.

Evaluation results

The table below shows the quantitative results of the text-to-pano image metrics at 512 x 1024, evaluated on 332 samples from the validation set.

| Method | FID ↓ | IS ↑ | CLIPsim ↑ |
|---|---|---|---|
| Text2Light | 108.30 | 4.646±0.27 | 27.083±3.65 |
| LDM3D-pano | 118.07 | 4.687±0.50 | 27.210±3.24 |

The following table shows the quantitative results of the pano depth metrics at 512 x 1024. Reference depth is from DPT-BEiT-L-512.

| Method | MARE ↓ | ≤90%ile |
|---|---|---|
| Joint_3D60 | 1.75±2.87 | 0.92±0.87 |
| LDM3D-pano | 1.54±2.55 | 0.79±0.77 |

The results above are reported in Table 1 and Table 2 of the LDM3D-VR paper.
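The card does not spell out how the depth metrics are computed. As a rough sketch, assuming MARE is the mean absolute relative error against the reference depth, and assuming the ≤90%ile column is the same mean restricted to the smallest 90% of per-pixel errors (an interpretation, not a definition confirmed here), the two numbers could be obtained as follows. The function names are hypothetical, and depth maps are taken as flat lists of positive values.

```python
def absolute_relative_errors(pred, ref, eps=1e-8):
    """Per-pixel |pred - ref| / ref, skipping pixels with near-zero reference depth."""
    return [abs(p - r) / r for p, r in zip(pred, ref) if r > eps]


def mare(pred, ref):
    """Mean absolute relative error over all valid pixels."""
    errors = absolute_relative_errors(pred, ref)
    return sum(errors) / len(errors)


def mare_within_percentile(pred, ref, q=0.9):
    """Mean of the smallest q fraction of errors (assumed meaning of the percentile column)."""
    errors = sorted(absolute_relative_errors(pred, ref))
    keep = max(1, int(len(errors) * q))  # always keep at least one error
    kept = errors[:keep]
    return sum(kept) / len(kept)
```

Trimming the largest errors in this way makes the score less sensitive to a few pixels with very wrong depth, which is one plausible reason for reporting both columns.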

Ethical Considerations and Limitations

For image generation, the Stable Diffusion limitations and biases apply. For depth map generation, a first limitation is that we use DPT-large to produce the ground truth; hence, the limitations and biases of DPT also apply.

Caveats and Recommendations

Users (both direct and downstream) should be made aware of the risks, biases and limitations of the model.


Disclaimer

The license on this model does not constitute legal advice. We are not responsible for the actions of third parties who use this model. Please consult an attorney before using this model for commercial purposes.

BibTeX entry and citation info

@misc{stan2023ldm3dvr,
  title={LDM3D-VR: Latent Diffusion Model for 3D VR},
  author={Gabriela Ben Melech Stan and Diana Wofk and Estelle Aflalo and Shao-Yen Tseng and Zhipeng Cai and Michael Paulitsch and Vasudev Lal},
  year={2023},
  eprint={2311.03226},
  archivePrefix={arXiv},
  primaryClass={cs.CV}
}