tastelikefeet committed on

Commit • de7836d

1 Parent(s): fdc24bb

first version

This view is limited to 50 files because it contains too many changes.

- .gitattributes +1 -0

- app.py +401 -0

- bert_tokenizer.py +421 -0

- cldm/cldm.py +617 -0

- cldm/ddim_hacked.py +317 -0

- cldm/embedding_manager.py +165 -0

- cldm/hack.py +111 -0

- cldm/logger.py +76 -0

- cldm/model.py +30 -0

- cldm/recognizer.py +303 -0

- dataset_util.py +77 -0

- example_images/banner.png +0 -0

- example_images/edit1.png +0 -0

- example_images/edit10.png +0 -0

- example_images/edit11.png +0 -0

- example_images/edit12.png +0 -0

- example_images/edit13.png +0 -0

- example_images/edit14.png +0 -0

- example_images/edit2.png +0 -0

- example_images/edit3.png +0 -0

- example_images/edit4.png +0 -0

- example_images/edit5.png +0 -0

- example_images/edit6.png +0 -0

- example_images/edit7.png +0 -0

- example_images/edit8.png +0 -0

- example_images/edit9.png +0 -0

- example_images/gen1.png +0 -0

- example_images/gen10.png +0 -0

- example_images/gen11.png +0 -0

- example_images/gen12.png +0 -0

- example_images/gen13.png +0 -0

- example_images/gen14.png +0 -0

- example_images/gen15.png +0 -0

- example_images/gen16.png +0 -0

- example_images/gen2.png +0 -0

- example_images/gen3.png +0 -0

- example_images/gen4.png +0 -0

- example_images/gen5.png +0 -0

- example_images/gen6.png +0 -0

- example_images/gen7.png +0 -0

- example_images/gen8.png +0 -0

- example_images/gen9.png +0 -0

- example_images/ref1.jpg +0 -0

- example_images/ref10.jpg +0 -0

- example_images/ref11.jpg +0 -0

- example_images/ref12.png +0 -0

- example_images/ref13.jpg +0 -0

- example_images/ref14.png +0 -0

- example_images/ref2.jpg +0 -0

- example_images/ref3.jpg +0 -0

.gitattributes

CHANGED

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+*.ttf filter=lfs diff=lfs merge=lfs -text

app.py

ADDED

|

@@ -0,0 +1,401 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

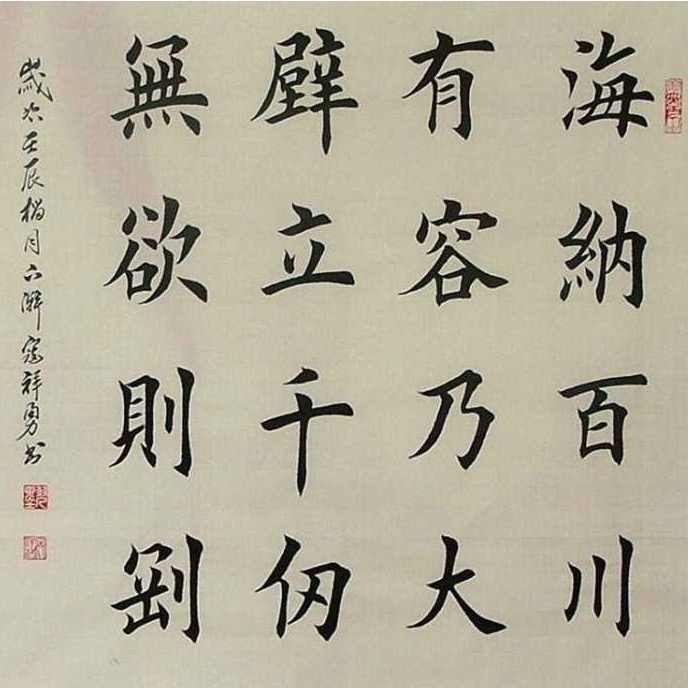

|

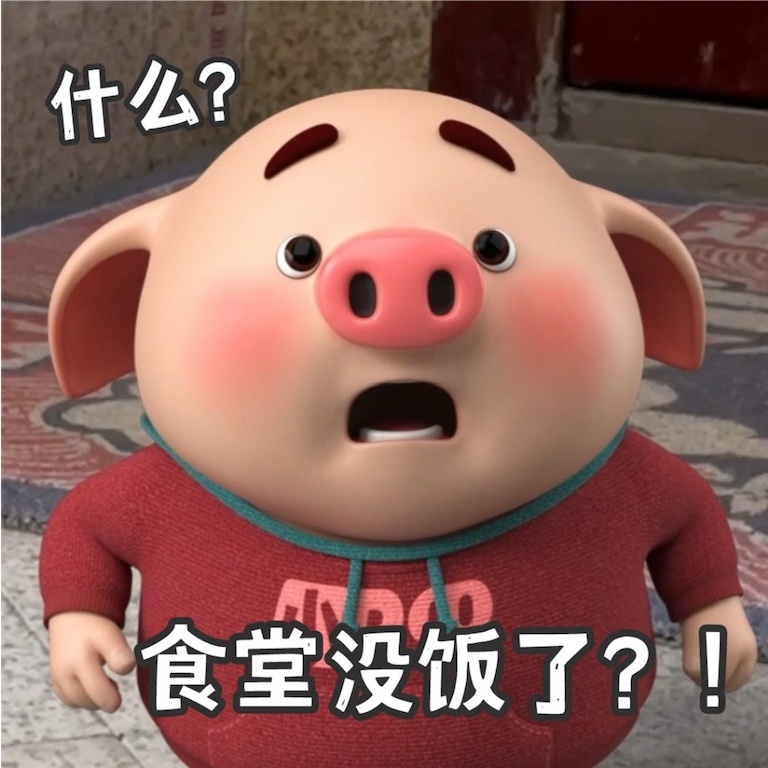

|

|

|

|

|

|

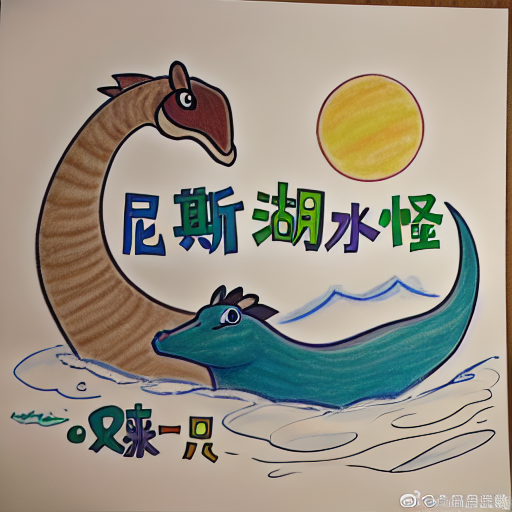

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

+'''
+AnyText: Multilingual Visual Text Generation And Editing
+Paper: https://arxiv.org/abs/2311.03054
+Code: https://github.com/tyxsspa/AnyText
+Copyright (c) Alibaba, Inc. and its affiliates.
+'''
+import os
+from modelscope.pipelines import pipeline
+import cv2
+import gradio as gr
+import numpy as np
+import re
+from gradio.components import Component
+from util import check_channels, resize_image, save_images
+import json
+
+BBOX_MAX_NUM = 8
+img_save_folder = 'SaveImages'
+load_model = True
+if load_model:
+    inference = pipeline('my-anytext-task', model='damo/cv_anytext_text_generation_editing', model_revision='v1.1.0')
+
+

+def count_lines(prompt):
+    prompt = prompt.replace('“', '"')
+    prompt = prompt.replace('”', '"')
+    p = '"(.*?)"'
+    strs = re.findall(p, prompt)
+    if len(strs) == 0:
+        strs = [' ']
+    return len(strs)
+
+
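Review note: the quoted-text counting in `count_lines` can be tried outside the app. The following is a minimal standalone sketch, not the committed code; the name `count_quoted_lines` and the sample prompts are made up for illustration.

```python
import re


def count_quoted_lines(prompt: str) -> int:
    """Count text lines enclosed in double quotes, mirroring count_lines."""
    # Normalize curly quotes to straight quotes before matching.
    prompt = prompt.replace('“', '"').replace('”', '"')
    # Non-greedy match so each quoted span is counted separately.
    quoted = re.findall(r'"(.*?)"', prompt)
    # A prompt without any quoted text still counts as one (blank) line.
    return len(quoted) if quoted else 1


if __name__ == "__main__":
    print(count_quoted_lines('a sign that reads "OPEN" and “24 hours”'))
    print(count_quoted_lines('a photo of a cat'))
```

Because both curly quote characters are mapped to the same straight quote, prompts that mix or reverse the opening and closing marks (as some of the example prompts in this commit do) are still counted pairwise.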

+def generate_rectangles(w, h, n, max_trys=200):
+    img = np.zeros((h, w, 1), dtype=np.uint8)
+    rectangles = []
+    attempts = 0
+    n_pass = 0
+    low_edge = int(max(w, h)*0.3 if n <= 3 else max(w, h)*0.2) # ~150, ~100
+    while attempts < max_trys:
+        rect_w = min(np.random.randint(max((w*0.5)//n, low_edge), w), int(w*0.8))
+        ratio = np.random.uniform(4, 10)
+        rect_h = max(low_edge, int(rect_w/ratio))
+        rect_h = min(rect_h, int(h*0.8))
+        # gen rotate angle
+        rotation_angle = 0
+        rand_value = np.random.rand()
+        if rand_value < 0.7:
+            pass
+        elif rand_value < 0.8:
+            rotation_angle = np.random.randint(0, 40)
+        elif rand_value < 0.9:
+            rotation_angle = np.random.randint(140, 180)
+        else:
+            rotation_angle = np.random.randint(85, 95)
+        # rand position
+        x = np.random.randint(0, w - rect_w)
+        y = np.random.randint(0, h - rect_h)
+        # get vertex
+        rect_pts = cv2.boxPoints(((rect_w/2, rect_h/2), (rect_w, rect_h), rotation_angle))
+        rect_pts = np.int32(rect_pts)
+        # move
+        rect_pts += (x, y)
+        # check boarder
+        if np.any(rect_pts < 0) or np.any(rect_pts[:, 0] >= w) or np.any(rect_pts[:, 1] >= h):
+            attempts += 1
+            continue
+        # check overlap
+        if any(check_overlap_polygon(rect_pts, rp) for rp in rectangles):
+            attempts += 1
+            continue
+        n_pass += 1
+        cv2.fillPoly(img, [rect_pts], 255)
+        rectangles.append(rect_pts)
+        if n_pass == n:
+            break
+    print("attempts:", attempts)
+    if len(rectangles) != n:
+        raise gr.Error(f'Failed in auto generate positions after {attempts} attempts, try again!')
+    return img
+
+
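Review note: the rotation branch in `generate_rectangles` keeps most boxes horizontal and occasionally tilts them. The sketch below isolates that sampling step so the distribution is easier to see. It is an illustration only: `sample_rotation_angle` is a hypothetical helper, and it uses the standard-library `random` module in place of `np.random` so that it runs without NumPy.

```python
import random


def sample_rotation_angle(rng: random.Random) -> int:
    """Pick a rotation angle with the same branch thresholds as the diff."""
    r = rng.random()
    if r < 0.7:
        return 0                        # about 70%: no rotation
    if r < 0.8:
        return rng.randrange(0, 40)     # about 10%: 0..39 degrees
    if r < 0.9:
        return rng.randrange(140, 180)  # about 10%: 140..179 degrees
    return rng.randrange(85, 95)        # about 10%: near vertical, 85..94


if __name__ == "__main__":
    rng = random.Random(0)
    print([sample_rotation_angle(rng) for _ in range(10)])
```

`randrange(a, b)` excludes `b`, which matches the half-open interval of `np.random.randint(a, b)` used in the committed code.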

+def check_overlap_polygon(rect_pts1, rect_pts2):
+    poly1 = cv2.convexHull(rect_pts1)
+    poly2 = cv2.convexHull(rect_pts2)
+    rect1 = cv2.boundingRect(poly1)
+    rect2 = cv2.boundingRect(poly2)
+    if rect1[0] + rect1[2] >= rect2[0] and rect2[0] + rect2[2] >= rect1[0] and rect1[1] + rect1[3] >= rect2[1] and rect2[1] + rect2[3] >= rect1[1]:
+        return True
+    return False
+
+
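Review note: despite its name, `check_overlap_polygon` compares axis-aligned bounding rectangles, so two rotated boxes can be reported as overlapping even when the polygons themselves do not touch. The sketch below shows the same idea in plain Python under stated assumptions: `bounding_box` and `boxes_overlap` are hypothetical names, and the comparison uses min/max corners rather than the `(x, y, w, h)` tuple returned by `cv2.boundingRect`, so behavior at a one-pixel distance may differ from the committed code.

```python
from typing import Sequence, Tuple

Point = Tuple[int, int]


def bounding_box(points: Sequence[Point]) -> Tuple[int, int, int, int]:
    """Return (x_min, y_min, x_max, y_max) of a set of vertices."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    return min(xs), min(ys), max(xs), max(ys)


def boxes_overlap(a: Sequence[Point], b: Sequence[Point]) -> bool:
    """Conservative overlap test on axis-aligned bounding boxes."""
    ax0, ay0, ax1, ay1 = bounding_box(a)
    bx0, by0, bx1, by1 = bounding_box(b)
    # The boxes overlap when their extents intersect on both axes.
    return ax1 >= bx0 and bx1 >= ax0 and ay1 >= by0 and by1 >= ay0


if __name__ == "__main__":
    strip = [(0, 1), (1, 0), (10, 9), (9, 10)]   # thin diagonal strip
    corner = [(8, 1), (9, 1), (9, 2), (8, 2)]    # small square away from the strip
    far = [(20, 20), (25, 20), (25, 25), (20, 25)]
    print(boxes_overlap(strip, corner))  # bounding boxes intersect
    print(boxes_overlap(strip, far))
```

For random placement this conservative test is a reasonable trade-off: it may reject some valid layouts, but it never accepts two boxes that actually intersect.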

+def draw_rects(width, height, rects):
+    img = np.zeros((height, width, 1), dtype=np.uint8)
+    for rect in rects:
+        x1 = int(rect[0] * width)
+        y1 = int(rect[1] * height)
+        w = int(rect[2] * width)
+        h = int(rect[3] * height)
+        x2 = x1 + w
+        y2 = y1 + h
+        cv2.rectangle(img, (x1, y1), (x2, y2), 255, -1)
+    return img
+
+
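Review note: `draw_rects` receives each rectangle as normalized `(x, y, w, h)` fractions of the canvas and converts them to pixel corners before filling. A small sketch of just that conversion, with the hypothetical helper name `rect_to_pixels`, makes the arithmetic easy to check:

```python
from typing import Sequence, Tuple


def rect_to_pixels(rect: Sequence[float], width: int, height: int) -> Tuple[int, int, int, int]:
    """Convert a normalized (x, y, w, h) rectangle to pixel corners (x1, y1, x2, y2)."""
    x1 = int(rect[0] * width)
    y1 = int(rect[1] * height)
    # Width and height are truncated separately, then added to the origin.
    x2 = x1 + int(rect[2] * width)
    y2 = y1 + int(rect[3] * height)
    return x1, y1, x2, y2


if __name__ == "__main__":
    print(rect_to_pixels((0.25, 0.5, 0.5, 0.25), 512, 512))
```

Truncating the size and the origin independently means `x2` can be one pixel smaller than `int((x + w) * width)`; for a position mask this difference is unlikely to matter.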

+def process(mode, prompt, pos_radio, sort_radio, revise_pos, show_debug, draw_img, rect_img, ref_img, ori_img, img_count, ddim_steps, w, h, strength, cfg_scale, seed, eta, a_prompt, n_prompt, *rect_list):
+    n_lines = count_lines(prompt)
+    # Text Generation
+    if mode == 'gen':
+        # create pos_imgs
+        if pos_radio == 'Manual-draw(手绘)':
+            if draw_img is not None:
+                pos_imgs = 255 - draw_img['image']
+                if 'mask' in draw_img:
+                    pos_imgs = pos_imgs.astype(np.float32) + draw_img['mask'][..., 0:3].astype(np.float32)
+                    pos_imgs = pos_imgs.clip(0, 255).astype(np.uint8)
+            else:
+                pos_imgs = np.zeros((w, h, 1))
+        elif pos_radio == 'Manual-rect(拖框)':
+            rect_check = rect_list[:BBOX_MAX_NUM]
+            rect_xywh = rect_list[BBOX_MAX_NUM:]
+            checked_rects = []
+            for idx, c in enumerate(rect_check):
+                if c:
+                    _xywh = rect_xywh[4*idx:4*(idx+1)]
+                    checked_rects += [_xywh]
+            pos_imgs = draw_rects(w, h, checked_rects)
+        elif pos_radio == 'Auto-rand(随机)':
+            pos_imgs = generate_rectangles(w, h, n_lines, max_trys=500)
+    # Text Editing
+    elif mode == 'edit':
+        revise_pos = False # disable pos revise in edit mode
+        if ref_img is None or ori_img is None:
+            raise gr.Error('No reference image, please upload one for edit!')
+        edit_image = ori_img.clip(1, 255) # for mask reason
+        edit_image = check_channels(edit_image)
+        edit_image = resize_image(edit_image, max_length=768)
+        h, w = edit_image.shape[:2]
+        if isinstance(ref_img, dict) and 'mask' in ref_img and ref_img['mask'].mean() > 0:
+            pos_imgs = 255 - edit_image
+            edit_mask = cv2.resize(ref_img['mask'][..., 0:3], (w, h))
+            pos_imgs = pos_imgs.astype(np.float32) + edit_mask.astype(np.float32)
+            pos_imgs = pos_imgs.clip(0, 255).astype(np.uint8)
+        else:
+            if isinstance(ref_img, dict) and 'image' in ref_img:
+                ref_img = ref_img['image']
+            pos_imgs = 255 - ref_img # example input ref_img is used as pos
+    cv2.imwrite('pos_imgs.png', 255-pos_imgs[..., ::-1])
+    params = {
+        "sort_priority": sort_radio,
+        "show_debug": show_debug,
+        "revise_pos": revise_pos,
+        "image_count": img_count,
+        "ddim_steps": ddim_steps,
+        "image_width": w,
+        "image_height": h,
+        "strength": strength,
+        "cfg_scale": cfg_scale,
+        "eta": eta,
+        "a_prompt": a_prompt,
+        "n_prompt": n_prompt
+    }
+    input_data = {
+        "prompt": prompt,
+        "seed": seed,
+        "draw_pos": pos_imgs,
+        "ori_image": ori_img,
+    }
+    results, rtn_code, rtn_warning, debug_info = inference(input_data, mode=mode, **params)
+    if rtn_code >= 0:
+        # save_images(results, img_save_folder)
+        # print(f'Done, result images are saved in: {img_save_folder}')
+        if rtn_warning:
+            gr.Warning(rtn_warning)
+    else:
+        raise gr.Error(rtn_warning)
+    return results, gr.Markdown(debug_info, visible=show_debug)
+
+
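Review note: in the `Manual-rect` branch of `process`, the variadic `rect_list` carries `BBOX_MAX_NUM` checkbox values followed by a flat run of `x, y, w, h` slider values, four per box. The sketch below shows that unpacking in isolation. `select_checked_rects` is a hypothetical helper written for this note; only the layout of `rect_list` is taken from the diff.

```python
from typing import List, Sequence, Tuple

BBOX_MAX_NUM = 8  # same constant as in app.py


def select_checked_rects(rect_list: Sequence, max_boxes: int = BBOX_MAX_NUM) -> List[Tuple]:
    """Return the (x, y, w, h) tuples whose enable checkbox is ticked."""
    checks = rect_list[:max_boxes]   # one boolean per box
    xywh = rect_list[max_boxes:]     # flattened x, y, w, h values
    return [tuple(xywh[4 * i:4 * (i + 1)]) for i, on in enumerate(checks) if on]


if __name__ == "__main__":
    checks = [True, False, True] + [False] * 5
    values = []
    for i in range(BBOX_MAX_NUM):
        values += [i / 10, i / 10, 0.2, 0.2]
    print(select_checked_rects(checks + values))
```

The ordering matters: the Gradio inputs list passes `rect_cb_list + rect_xywh_list`, so the checkboxes must come first for the slices to line up.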

+def create_canvas(w=512, h=512, c=3, line=5):
+    image = np.full((h, w, c), 200, dtype=np.uint8)
+    for i in range(h):
+        if i % (w//line) == 0:
+            image[i, :, :] = 150
+    for j in range(w):
+        if j % (w//line) == 0:
+            image[:, j, :] = 150
+    image[h//2-8:h//2+8, w//2-8:w//2+8, :] = [200, 0, 0]
+    return image
+
+
+def resize_w(w, img1, img2):
+    if isinstance(img2, dict):
+        img2 = img2['image']
+    return [cv2.resize(img1, (w, img1.shape[0])), cv2.resize(img2, (w, img2.shape[0]))]
+
+
+def resize_h(h, img1, img2):
+    if isinstance(img2, dict):
+        img2 = img2['image']
+    return [cv2.resize(img1, (img1.shape[1], h)), cv2.resize(img2, (img2.shape[1], h))]
+
+

+is_t2i = 'true'
+block = gr.Blocks(css='style.css', theme=gr.themes.Soft()).queue()
+
+with open('javascript/bboxHint.js', 'r') as file:
+    value = file.read()
+escaped_value = json.dumps(value)
+
+with block:
+    block.load(fn=None,
+               _js=f"""() => {{
+               const script = document.createElement("script");
+               const text = document.createTextNode({escaped_value});
+               script.appendChild(text);
+               document.head.appendChild(script);
+               }}""")
+    gr.HTML('<div style="text-align: center; margin: 20px auto;"> \
+            <img id="banner" src="https://modelscope.cn/api/v1/studio/damo/studio_anytext/repo?Revision=master&FilePath=example_images/banner.png&View=true" alt="anytext"> <br> \
+            [<a href="https://arxiv.org/abs/2311.03054" style="color:blue; font-size:18px;">arXiv</a>] \
+            [<a href="https://github.com/tyxsspa/AnyText" style="color:blue; font-size:18px;">Code</a>] \
+            [<a href="https://modelscope.cn/models/damo/cv_anytext_text_generation_editing/summary" style="color:blue; font-size:18px;">ModelScope</a>]\
+            version: 1.1.0 </div>')

+    with gr.Row(variant='compact'):
+        with gr.Column():
+            with gr.Accordion('🕹Instructions(说明)', open=False,):
+                with gr.Tabs():
+                    with gr.Tab("English"):
+                        gr.Markdown('<span style="color:navy;font-size:20px">Run Examples</span>')
+                        gr.Markdown('<span style="color:black;font-size:16px">AnyText has two modes: Text Generation and Text Editing, and we provides a variety of examples. Select one, click on [Run!] button to run.</span>')
+                        gr.Markdown('<span style="color:gray;font-size:12px">Please note, before running examples, ensure the manual draw area is empty, otherwise may get wrong results. Additionally, different examples use \
+                                     different parameters (such as resolution, seed, etc.). When generate your own, please pay attention to the parameter changes, or refresh the page to restore the default parameters.</span>')
+                        gr.Markdown('<span style="color:navy;font-size:20px">Text Generation</span>')
+                        gr.Markdown('<span style="color:black;font-size:16px">Enter the textual description (in Chinese or English) of the image you want to generate in [Prompt]. Each text line that needs to be generated should be \
+                                     enclosed in double quotes. Then, manually draw the specified position for each text line to generate the image.</span>\
+                                     <span style="color:red;font-size:16px">The drawing of text positions is crucial to the quality of the resulting image</span>, \
+                                     <span style="color:black;font-size:16px">please do not draw too casually or too small. The number of positions should match the number of text lines, and the size of each position should be matched \
+                                     as closely as possible to the length or width of the corresponding text line. If [Manual-draw] is inconvenient, you can try dragging rectangles [Manual-rect] or random positions [Auto-rand].</span>')
+                        gr.Markdown('<span style="color:gray;font-size:12px">When generating multiple lines, each position is matched with the text line according to a certain rule. The [Sort Position] option is used to \
+                                     determine whether to prioritize sorting from top to bottom or from left to right. You can open the [Show Debug] option in the parameter settings to observe the text position and glyph image \
+                                     in the result. You can also select the [Revise Position] which uses the bounding box of the rendered text as the revised position. However, it is occasionally found that the creativity of the \
+                                     generated text is slightly lower using this method.</span>')
+                        gr.Markdown('<span style="color:navy;font-size:20px">Text Editing</span>')
+                        gr.Markdown('<span style="color:black;font-size:16px">Please upload an image in [Ref] as a reference image, then adjust the brush size, and mark the area(s) to be edited. Input the textual description and \
+                                     the new text to be modified in [Prompt], then generate the image.</span>')
+                        gr.Markdown('<span style="color:gray;font-size:12px">The reference image can be of any resolution, but it will be internally processed with a limit that the longer side cannot exceed 768 pixels, and the \
+                                     width and height will both be scaled to multiples of 64.</span>')
+                    with gr.Tab("简体中文"):
+                        gr.Markdown('<span style="color:navy;font-size:20px">运行示例</span>')
+                        gr.Markdown('<span style="color:black;font-size:16px">AnyText有两种运行模式:文字生成和文字编辑,每种模式下提供了丰富的示例,选择一个,点击[Run!]即可。</span>')
+                        gr.Markdown('<span style="color:gray;font-size:12px">请注意,运行示例前确保手绘位置区域是空的,防止影响示例结果,另外不同示例使用不同的参数(如分辨率,种子数等),如果要自行生成时,请留意参数变化,或刷新页面恢复到默认参数。</span>')
+                        gr.Markdown('<span style="color:navy;font-size:20px">文字生成</span>')
+                        gr.Markdown('<span style="color:black;font-size:16px">在Prompt中输入描述提示词(支持中英文),需要生成的每一行文字用双引号包裹,然后依次手绘指定每行文字的位置,生成图片。</span>\
+                                     <span style="color:red;font-size:16px">文字位置的绘制对成图质量很关键</span>, \
+                                     <span style="color:black;font-size:16px">请不要画的太随意或太小,位置的数量要与文字行数量一致,每个位置的尺寸要与对应的文字行的长短或宽高尽量匹配。如果手绘(Manual-draw)不方便,\
+                                     可以尝试拖框矩形(Manual-rect)或随机生成(Auto-rand)。</span>')
+                        gr.Markdown('<span style="color:gray;font-size:12px">多行生成时,每个位置按照一定规则排序后与文字行做对应,Sort Position选项用于确定排序时优先从上到下还是从左到右。\
+                                     可以在参数设置中打开Show Debug选项,在结果图像中观察文字位置和字形图。也可以勾选Revise Position选项,这样会用渲染文字的外接矩形作为修正后的位置,不过偶尔发现这样生成的文字创造性略低。</span>')
+                        gr.Markdown('<span style="color:navy;font-size:20px">文字编辑</span>')
+                        gr.Markdown('<span style="color:black;font-size:16px">请上传一张待编辑的图片作为参考图(Ref),然后调整笔触大小后,在参考图上涂抹要编辑的位置,在Prompt中输入描述提示词和要修改的文字内容,生成图片。</span>')
+                        gr.Markdown('<span style="color:gray;font-size:12px">参考图可以为任意分辨率,但内部处理时会限制长边不能超过768,并且宽高都被缩放为64的整数倍。</span>')

+            with gr.Accordion('🛠Parameters(参数)', open=False):
+                with gr.Row(variant='compact'):
+                    img_count = gr.Slider(label="Image Count(图片数)", minimum=1, maximum=12, value=4, step=1)
+                    ddim_steps = gr.Slider(label="Steps(步数)", minimum=1, maximum=100, value=20, step=1)
+                with gr.Row(variant='compact'):
+                    image_width = gr.Slider(label="Image Width(宽度)", minimum=256, maximum=768, value=512, step=64)
+                    image_height = gr.Slider(label="Image Height(高度)", minimum=256, maximum=768, value=512, step=64)
+                with gr.Row(variant='compact'):
+                    strength = gr.Slider(label="Strength(控制力度)", minimum=0.0, maximum=2.0, value=1.0, step=0.01)
+                    cfg_scale = gr.Slider(label="CFG-Scale(CFG强度)", minimum=0.1, maximum=30.0, value=9.0, step=0.1)
+                with gr.Row(variant='compact'):
+                    seed = gr.Slider(label="Seed(种子数)", minimum=-1, maximum=99999999, step=1, randomize=False, value=-1)
+                    eta = gr.Number(label="eta (DDIM)", value=0.0)
+                with gr.Row(variant='compact'):
+                    show_debug = gr.Checkbox(label='Show Debug(调试信息)', value=False)
+                    gr.Markdown('<span style="color:silver;font-size:12px">whether show glyph image and debug information in the result(是否在结果中显示glyph图以及调试信息)</span>')
+                a_prompt = gr.Textbox(label="Added Prompt(附加提示词)", value='best quality, extremely detailed,4k, HD, supper legible text, clear text edges, clear strokes, neat writing, no watermarks')
+                n_prompt = gr.Textbox(label="Negative Prompt(负向提示词)", value='low-res, bad anatomy, extra digit, fewer digits, cropped, worst quality, low quality, watermark, unreadable text, messy words, distorted text, disorganized writing, advertising picture')
+            prompt = gr.Textbox(label="Prompt(提示词)")

+            with gr.Tabs() as tab_modes:
+                with gr.Tab("🖼Text Generation(文字生成)", elem_id='MD-tab-t2i') as mode_gen:
+                    pos_radio = gr.Radio(["Manual-draw(手绘)", "Manual-rect(拖框)", "Auto-rand(随机)"], value='Manual-draw(手绘)', label="Pos-Method(位置方式)", info="choose a method to specify text positions(选择方法用于指定文字位置).")
+                    with gr.Row():
+                        sort_radio = gr.Radio(["↕", "↔"], value='↕', label="Sort Position(位置排序)", info="position sorting priority(位置排序时的优先级)")
+                        revise_pos = gr.Checkbox(label='Revise Position(修正位置)', value=False)
+                        # gr.Markdown('<span style="color:silver;font-size:12px">try to revise according to text\'s bounding rectangle(尝试通过渲染后的文字行的外接矩形框修正位置)</span>')
+                    with gr.Row(variant='compact'):
+                        rect_cb_list: list[Component] = []
+                        rect_xywh_list: list[Component] = []
+                        for i in range(BBOX_MAX_NUM):
+                            e = gr.Checkbox(label=f'{i}', value=False, visible=False, min_width='10')
+                            x = gr.Slider(label='x', value=0.4, minimum=0.0, maximum=1.0, step=0.0001, elem_id=f'MD-t2i-{i}-x', visible=False)
+                            y = gr.Slider(label='y', value=0.4, minimum=0.0, maximum=1.0, step=0.0001, elem_id=f'MD-t2i-{i}-y', visible=False)
+                            w = gr.Slider(label='w', value=0.2, minimum=0.0, maximum=1.0, step=0.0001, elem_id=f'MD-t2i-{i}-w', visible=False)
+                            h = gr.Slider(label='h', value=0.2, minimum=0.0, maximum=1.0, step=0.0001, elem_id=f'MD-t2i-{i}-h', visible=False)
+                            x.change(fn=None, inputs=x, outputs=x, _js=f'v => onBoxChange({is_t2i}, {i}, "x", v)', show_progress=False, queue=False)
+                            y.change(fn=None, inputs=y, outputs=y, _js=f'v => onBoxChange({is_t2i}, {i}, "y", v)', show_progress=False, queue=False)
+                            w.change(fn=None, inputs=w, outputs=w, _js=f'v => onBoxChange({is_t2i}, {i}, "w", v)', show_progress=False, queue=False)
+                            h.change(fn=None, inputs=h, outputs=h, _js=f'v => onBoxChange({is_t2i}, {i}, "h", v)', show_progress=False, queue=False)
+
+                            e.change(fn=None, inputs=e, outputs=e, _js=f'e => onBoxEnableClick({is_t2i}, {i}, e)', queue=False)
+                            rect_cb_list.extend([e])
+                            rect_xywh_list.extend([x, y, w, h])
+
+                    rect_img = gr.Image(value=create_canvas(), label="Rext Position(方框位置)", elem_id="MD-bbox-rect-t2i", show_label=False, visible=False)
+                    draw_img = gr.Image(value=create_canvas(), label="Draw Position(绘制位置)", visible=True, tool='sketch', show_label=False, brush_radius=60)
+
+                    def re_draw():
+                        return [gr.Image(value=create_canvas(), tool='sketch'), gr.Slider(value=512), gr.Slider(value=512)]
+                    draw_img.clear(re_draw, None, [draw_img, image_width, image_height])
+                    image_width.release(resize_w, [image_width, rect_img, draw_img], [rect_img, draw_img])
+                    image_height.release(resize_h, [image_height, rect_img, draw_img], [rect_img, draw_img])
+
+                    def change_options(selected_option):
+                        return [gr.Checkbox(visible=selected_option == 'Manual-rect(拖框)')] * BBOX_MAX_NUM + \
+                               [gr.Image(visible=selected_option == 'Manual-rect(拖框)'),
+                                gr.Image(visible=selected_option == 'Manual-draw(手绘)'),
+                                gr.Radio(visible=selected_option != 'Auto-rand(随机)'),
+                                gr.Checkbox(value=selected_option == 'Auto-rand(随机)')]
+                    pos_radio.change(change_options, pos_radio, rect_cb_list + [rect_img, draw_img, sort_radio, revise_pos], show_progress=False, queue=False)
+                    with gr.Row():
+                        gr.Markdown("")
+                        run_gen = gr.Button(value="Run(运行)!", scale=0.3, elem_classes='run')
+                        gr.Markdown("")
+
+                    def exp_gen_click():
+                        return [gr.Slider(value=512), gr.Slider(value=512)] # all examples are 512x512, refresh draw_img
+                    exp_gen = gr.Examples(
+                        [
+                            ['一只浣熊站在黑板前,上面写着"深度学习"', "example_images/gen1.png", "Manual-draw(手绘)", "↕", False, 4, 81808278],
+                            ['一个儿童蜡笔画,森林里有一个可爱的蘑菇形状的房子,标题是"森林小屋"', "example_images/gen16.png", "Manual-draw(手绘)", "↕", False, 4, 40173333],
+                            ['一个精美设计的logo,画的是一个黑白风格的厨师,带着厨师帽,logo下方写着“深夜食堂”', "example_images/gen14.png", "Manual-draw(手绘)", "↕", False, 4, 6970544],
+                            ['photo of caramel macchiato coffee on the table, top-down perspective, with "Any" "Text" written on it using cream', "example_images/gen9.png", "Manual-draw(手绘)", "↕", False, 4, 66273235],
+                            ['一张户外雪地靴的电商广告,上面写着 “双12大促!”,“立减50”,“加绒加厚”,“穿脱方便”,“温暖24小时送达”, “包邮”,高级设计感,精美构图', "example_images/gen15.png", "Manual-draw(手绘)", "↕", False, 4, 66980376],
+                            ['Sign on the clean building that reads "科学" and "과학" and "ステップ" and "SCIENCE"', "example_images/gen6.png", "Manual-draw(手绘)", "↕", True, 4, 13246309],
+                            ['一个精致的马克杯,上面雕刻着一首中国古诗,内容是 "花落知多少" "夜来风雨声" "处处闻啼鸟" "春眠不觉晓"', "example_images/gen3.png", "Manual-draw(手绘)", "↔", False, 4, 60358279],
+                            ['A delicate square cake, cream and fruit, with "CHEERS" "to the" and "GRADUATE" written in chocolate', "example_images/gen8.png", "Manual-draw(手绘)", "↕", False, 4, 93424638],
+                            ['一件精美的毛衣,上面有针织的文字:"通义丹青"', "example_images/gen4.png", "Manual-draw(手绘)", "↕", False, 4, 48769450],
+                            ['一个双肩包的特写照,上面用针织文字写着”为了无法“ ”计算的价值“', "example_images/gen12.png", "Manual-draw(手绘)", "↕", False, 4, 35552323],
+                            ['A nice drawing in pencil of Michael Jackson, with the words "Micheal" and "Jackson" written on it', "example_images/gen7.png", "Manual-draw(手绘)", "↕", False, 4, 83866922],
+                            ['一个漂亮的蜡笔画,有行星,宇航员,还有宇宙飞船,上面写的是"去火星旅行", "王小明", "11月1日"', "example_images/gen5.png", "Manual-draw(手绘)", "↕", False, 4, 42328250],
+                            ['一个装饰华丽的蛋糕,上面用奶油写着“阿里云”和"APSARA"', "example_images/gen13.png", "Manual-draw(手绘)", "↕", False, 4, 62357019],
+                            ['一张关于墙上的彩色涂鸦艺术的摄影作品,上面写着“人工智能" 和 "神经网络"', "example_images/gen10.png", "Manual-draw(手绘)", "↕", False, 4, 64722007],
+                            ['一枚中国古代铜钱, 上面的文字是 "康" "寶" "通" "熙"', "example_images/gen2.png", "Manual-draw(手绘)", "↕", False, 4, 24375031],
+                            ['a well crafted ice sculpture that made with "Happy" and "Holidays". Dslr photo, perfect illumination', "example_images/gen11.png", "Manual-draw(手绘)", "↕", True, 4, 64901362],
+                        ],
+                        [prompt, draw_img, pos_radio, sort_radio, revise_pos, img_count, seed],
+                        examples_per_page=5,
+                    )
+                    exp_gen.dataset.click(exp_gen_click, None, [image_width, image_height])
+

| 354 |

+

with gr.Tab("🎨Text Editing(文字编辑)") as mode_edit:

|

| 355 |

+

with gr.Row(variant='compact'):

|

| 356 |

+

ref_img = gr.Image(label='Ref(参考图)', source='upload')

|

| 357 |

+

ori_img = gr.Image(label='Ori(原图)')

|

| 358 |

+

|

| 359 |

+

def upload_ref(x):

|

| 360 |

+

return [gr.Image(type="numpy", brush_radius=60, tool='sketch'),

|

| 361 |

+

gr.Image(value=x)]

|

| 362 |

+

|

| 363 |

+

def clear_ref(x):

|

| 364 |

+

return gr.Image(source='upload', tool=None)

|

| 365 |

+

ref_img.upload(upload_ref, ref_img, [ref_img, ori_img])

|

| 366 |

+

ref_img.clear(clear_ref, ref_img, ref_img)

|

| 367 |

+

with gr.Row():

|

| 368 |

+

gr.Markdown("")

|

| 369 |

+

run_edit = gr.Button(value="Run(运行)!", scale=0.3, elem_classes='run')

|

| 370 |

+

gr.Markdown("")

|

| 371 |

+

gr.Examples(

|

| 372 |

+

[

|

| 373 |

+

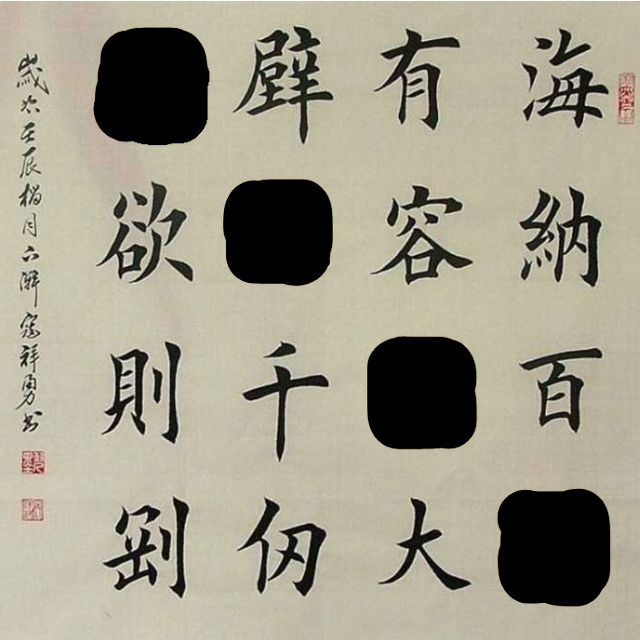

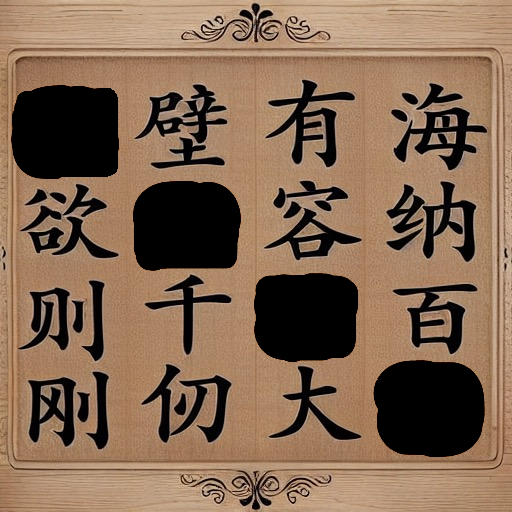

['精美的书法作品,上面写着“志” “存” “高” ”远“', "example_images/ref10.jpg", "example_images/edit10.png", 4, 98053044],

|

| 374 |

+

['一个表情包,小猪说 "下班"', "example_images/ref2.jpg", "example_images/edit2.png", 2, 43304008],

|

| 375 |

+

['Characters written in chalk on the blackboard that says "DADDY"', "example_images/ref8.jpg", "example_images/edit8.png", 4, 73556391],

|

| 376 |

+

['一个中国古代铜钱,上面写着"乾" "隆"', "example_images/ref12.png", "example_images/edit12.png", 4, 89159482],

|

| 377 |

+

['黑板上写着"Here"', "example_images/ref11.jpg", "example_images/edit11.png", 2, 15353513],

|

| 378 |

+

['A letter picture that says "THER"', "example_images/ref6.jpg", "example_images/edit6.png", 4, 72321415],

|

| 379 |

+

['一堆水果, 中间写着“UIT”', "example_images/ref13.jpg", "example_images/edit13.png", 4, 54263567],

|

| 380 |

+

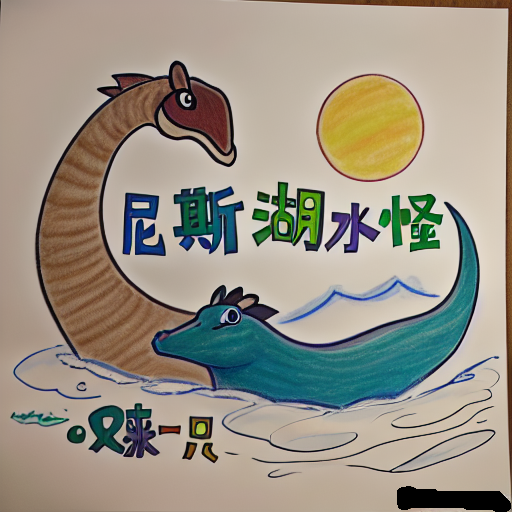

['一个漫画,上面写着" "', "example_images/ref14.png", "example_images/edit14.png", 4, 94081527],

|

| 381 |

+

['一个黄色标志牌,上边写着"不要" 和 "大意"', "example_images/ref3.jpg", "example_images/edit3.png", 2, 64010349],

|

| 382 |

+

['A cake with colorful characters that reads "EVERYDAY"', "example_images/ref7.jpg", "example_images/edit7.png", 4, 8943410],

|

| 383 |

+

['一个青铜鼎,上面写着" "和" "', "example_images/ref4.jpg", "example_images/edit4.png", 4, 71139289],

|

| 384 |

+

['一个建筑物前面的字母标牌, 上面写着 " "', "example_images/ref5.jpg", "example_images/edit5.png", 4, 50416289],

|

| 385 |

+

],

|

| 386 |

+

[prompt, ori_img, ref_img, img_count, seed],

|

| 387 |

+

examples_per_page=5,

|

| 388 |

+

)

+        with gr.Column():
+            result_gallery = gr.Gallery(label='Result(结果)', show_label=True, preview=True, columns=2, allow_preview=True, height=600)
+            result_info = gr.Markdown('', visible=False)
+    ips = [prompt, pos_radio, sort_radio, revise_pos, show_debug, draw_img, rect_img, ref_img, ori_img, img_count, ddim_steps, image_width, image_height, strength, cfg_scale, seed, eta, a_prompt, n_prompt, *(rect_cb_list+rect_xywh_list)]
+    run_gen.click(fn=process, inputs=[gr.State('gen')] + ips, outputs=[result_gallery, result_info])
+    run_edit.click(fn=process, inputs=[gr.State('edit')] + ips, outputs=[result_gallery, result_info])
+
+block.launch(
+    server_name='0.0.0.0' if os.getenv('GRADIO_LISTEN', '') != '' else "127.0.0.1",
+    share=False,
+    root_path=f"/{os.getenv('GRADIO_PROXY_PATH')}" if os.getenv('GRADIO_PROXY_PATH') else ""
+)
+# block.launch(server_name='0.0.0.0')
|
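The `block.launch(...)` call at the end of `app.py` takes its bind address and proxy prefix from two environment variables, `GRADIO_LISTEN` and `GRADIO_PROXY_PATH`. A minimal sketch of that selection logic follows, pulled into a standalone helper so it can be checked without starting Gradio. The helper name `resolve_launch_args`, its `env` parameter, and the sample value `anytext` are hypothetical and not part of the commit.

```python
import os


def resolve_launch_args(env=None):
    """Mirror the env-driven arguments that app.py passes to block.launch()."""
    # Hypothetical helper: accepts a mapping so the logic can be tested in isolation.
    env = os.environ if env is None else env
    # Any non-empty GRADIO_LISTEN binds to all interfaces; otherwise loopback only.
    server_name = '0.0.0.0' if env.get('GRADIO_LISTEN', '') != '' else '127.0.0.1'
    # GRADIO_PROXY_PATH, when set and non-empty, becomes a root path with a leading slash.
    proxy_path = env.get('GRADIO_PROXY_PATH')
    root_path = f"/{proxy_path}" if proxy_path else ""
    return {'server_name': server_name, 'share': False, 'root_path': root_path}


if __name__ == '__main__':
    # 'anytext' is an illustrative proxy path, not a value taken from the commit.
    print(resolve_launch_args({'GRADIO_LISTEN': '1', 'GRADIO_PROXY_PATH': 'anytext'}))
```

With neither variable set, the app would listen on `127.0.0.1` only with an empty root path, which is the safer default for local runs.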

bert_tokenizer.py

ADDED

|

@@ -0,0 +1,421 @@

+# Copyright 2018 The Google AI Language Team Authors.
+#
+# Licensed under the Apache License, Version 2.0 (the "License");
+# you may not use this file except in compliance with the License.
+# You may obtain a copy of the License at
+#
+#     http://www.apache.org/licenses/LICENSE-2.0
+#
+# Unless required by applicable law or agreed to in writing, software
+# distributed under the License is distributed on an "AS IS" BASIS,
+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+# See the License for the specific language governing permissions and
+# limitations under the License.
+"""Tokenization classes."""
+
+from __future__ import absolute_import, division, print_function
+import collections
+import re
+import unicodedata
+
+import six
+
+
+def validate_case_matches_checkpoint(do_lower_case, init_checkpoint):
+    """Checks whether the casing config is consistent with the checkpoint name."""
+
+    # The casing has to be passed in by the user and there is no explicit check
+    # as to whether it matches the checkpoint. The casing information probably
+    # should have been stored in the bert_config.json file, but it's not, so
+    # we have to heuristically detect it to validate.
+
+    if not init_checkpoint:
+        return
+
+    m = re.match('^.*?([A-Za-z0-9_-]+)/bert_model.ckpt', init_checkpoint)
+    if m is None:
+        return
+
+    model_name = m.group(1)
+
+    lower_models = [
+        'uncased_L-24_H-1024_A-16', 'uncased_L-12_H-768_A-12',
+        'multilingual_L-12_H-768_A-12', 'chinese_L-12_H-768_A-12'
+    ]
+
+    cased_models = [
+        'cased_L-12_H-768_A-12', 'cased_L-24_H-1024_A-16',
+        'multi_cased_L-12_H-768_A-12'
+    ]
+
+    is_bad_config = False
+    if model_name in lower_models and not do_lower_case:
+        is_bad_config = True
+        actual_flag = 'False'
+        case_name = 'lowercased'
+        opposite_flag = 'True'
+
+    if model_name in cased_models and do_lower_case:
+        is_bad_config = True
+        actual_flag = 'True'
+        case_name = 'cased'
+        opposite_flag = 'False'
+
+    if is_bad_config:
+        raise ValueError(
+            'You passed in `--do_lower_case=%s` with `--init_checkpoint=%s`. '
+            'However, `%s` seems to be a %s model, so you '
+            'should pass in `--do_lower_case=%s` so that the fine-tuning matches '
+            'how the model was pre-trained. If this error is wrong, please '
+            'just comment out this check.' %
+            (actual_flag, init_checkpoint, model_name, case_name,
+             opposite_flag))
+
+
+def convert_to_unicode(text):
+    """Converts `text` to Unicode (if it's not already), assuming utf-8 input."""
+    if six.PY3:
+        if isinstance(text, str):
+            return text
+        elif isinstance(text, bytes):
+            return text.decode('utf-8', 'ignore')
+        else:
+            raise ValueError('Unsupported string type: %s' % (type(text)))
+    elif six.PY2:
+        if isinstance(text, str):
+            return text.decode('utf-8', 'ignore')
+        elif isinstance(text, unicode):
+            return text
+        else:
+            raise ValueError('Unsupported string type: %s' % (type(text)))
+    else:
+        raise ValueError('Not running on Python2 or Python 3?')
+
+
+def printable_text(text):
+    """Returns text encoded in a way suitable for print or `tf.logging`."""
+
+    # These functions want `str` for both Python2 and Python3, but in one case
+    # it's a Unicode string and in the other it's a byte string.
+    if six.PY3:
+        if isinstance(text, str):
+            return text
+        elif isinstance(text, bytes):
+            return text.decode('utf-8', 'ignore')
+        else:
+            raise ValueError('Unsupported string type: %s' % (type(text)))
+    elif six.PY2:
+        if isinstance(text, str):
+            return text
+        elif isinstance(text, unicode):
+            return text.encode('utf-8')
+        else:
+            raise ValueError('Unsupported string type: %s' % (type(text)))
+    else:
+        raise ValueError('Not running on Python2 or Python 3?')
+
+
+def load_vocab(vocab_file):
+    """Loads a vocabulary file into a dictionary."""
+    vocab = collections.OrderedDict()
+    index = 0
+    with open(vocab_file, 'r', encoding='utf-8') as reader:
+        while True:
+            token = convert_to_unicode(reader.readline())
+            if not token:
+                break
+            token = token.strip()
+            vocab[token] = index
+            index += 1
+    return vocab
+
+
+def convert_by_vocab(vocab, items):
+    """Converts a sequence of [tokens|ids] using the vocab."""
+    output = []
+    for item in items:
+        output.append(vocab[item])
+    return output
+
+
+def convert_tokens_to_ids(vocab, tokens):
+    return convert_by_vocab(vocab, tokens)
+
+
+def convert_ids_to_tokens(inv_vocab, ids):
+    return convert_by_vocab(inv_vocab, ids)
+
+
+def whitespace_tokenize(text):
+    """Runs basic whitespace cleaning and splitting on a piece of text."""
+    text = text.strip()
+    if not text:
+        return []
+    tokens = text.split()
+    return tokens
+
+
+class FullTokenizer(object):
+    """Runs end-to-end tokenization."""
+
+    def __init__(self, vocab_file, do_lower_case=True):
+        self.vocab = load_vocab(vocab_file)
+        self.inv_vocab = {v: k for k, v in self.vocab.items()}
+        self.basic_tokenizer = BasicTokenizer(do_lower_case=do_lower_case)
+        self.wordpiece_tokenizer = WordpieceTokenizer(vocab=self.vocab)
+
+    def tokenize(self, text):
+        split_tokens = []
+        for token in self.basic_tokenizer.tokenize(text):
+            for sub_token in self.wordpiece_tokenizer.tokenize(token):
+                split_tokens.append(sub_token)
+
+        return split_tokens
+
+    def convert_tokens_to_ids(self, tokens):
+        return convert_by_vocab(self.vocab, tokens)
+
+    def convert_ids_to_tokens(self, ids):
+        return convert_by_vocab(self.inv_vocab, ids)
+
+    @staticmethod
+    def convert_tokens_to_string(tokens, clean_up_tokenization_spaces=True):
+        """ Converts a sequence of tokens (string) in a single string. """
+
+        def clean_up_tokenization(out_string):
+            """ Clean up a list of simple English tokenization artifacts
+            like spaces before punctuation and abbreviated forms.
+            """
+            out_string = (
+                out_string.replace(' .', '.').replace(' ?', '?').replace(
+                    ' !', '!').replace(' ,', ',').replace(" ' ", "'").replace(
+                        " n't", "n't").replace(" 'm", "'m").replace(
+                            " 's", "'s").replace(" 've",
+                                                 "'ve").replace(" 're", "'re"))
+            return out_string
+
+        text = ' '.join(tokens).replace(' ##', '').strip()
+        if clean_up_tokenization_spaces:
+            clean_text = clean_up_tokenization(text)
+            return clean_text
+        else:
+            return text
+
+    def vocab_size(self):
+        return len(self.vocab)
+
+
+class BasicTokenizer(object):
+    """Runs basic tokenization (punctuation splitting, lower casing, etc.)."""
+
+    def __init__(self, do_lower_case=True):
+        """Constructs a BasicTokenizer.
+
+        Args:
+          do_lower_case: Whether to lower case the input.
+        """
+        self.do_lower_case = do_lower_case
+
+    def tokenize(self, text):
+        """Tokenizes a piece of text."""
+        text = convert_to_unicode(text)
+        text = self._clean_text(text)
+
+        # This was added on November 1st, 2018 for the multilingual and Chinese
+        # models. This is also applied to the English models now, but it doesn't
+        # matter since the English models were not trained on any Chinese data
+        # and generally don't have any Chinese data in them (there are Chinese
+        # characters in the vocabulary because Wikipedia does have some Chinese
+        # words in the English Wikipedia.).
+        text = self._tokenize_chinese_chars(text)
+
+        orig_tokens = whitespace_tokenize(text)
+        split_tokens = []
+        for token in orig_tokens:
+            if self.do_lower_case:
+                token = token.lower()
+                token = self._run_strip_accents(token)
+            split_tokens.extend(self._run_split_on_punc(token))
+
+        output_tokens = whitespace_tokenize(' '.join(split_tokens))
+        return output_tokens
+
+    def _run_strip_accents(self, text):
+        """Strips accents from a piece of text."""
+        text = unicodedata.normalize('NFD', text)
+        output = []
+        for char in text:
+            cat = unicodedata.category(char)
+            if cat == 'Mn':
+                continue
+            output.append(char)
+        return ''.join(output)
+
+    def _run_split_on_punc(self, text):
+        """Splits punctuation on a piece of text."""
+        chars = list(text)
+        i = 0
+        start_new_word = True
+        output = []
+        while i < len(chars):
+            char = chars[i]
+            if _is_punctuation(char):
+                output.append([char])
+                start_new_word = True
+            else:
+                if start_new_word:
+                    output.append([])
+                start_new_word = False
+                output[-1].append(char)
+            i += 1
+
+        return [''.join(x) for x in output]
+
+    def _tokenize_chinese_chars(self, text):
+        """Adds whitespace around any CJK character."""
+        output = []
+        for char in text:
+            cp = ord(char)
+            if self._is_chinese_char(cp):
+                output.append(' ')
+                output.append(char)
+                output.append(' ')
+            else:
+                output.append(char)
+        return ''.join(output)
|