Spaces:

Runtime error

Commit 613a03d

Parent(s): 9ec9fb6

Upload 5 files

Browse files- Dockerfile +23 -0

- app.py +81 -0

- document.png +0 -0

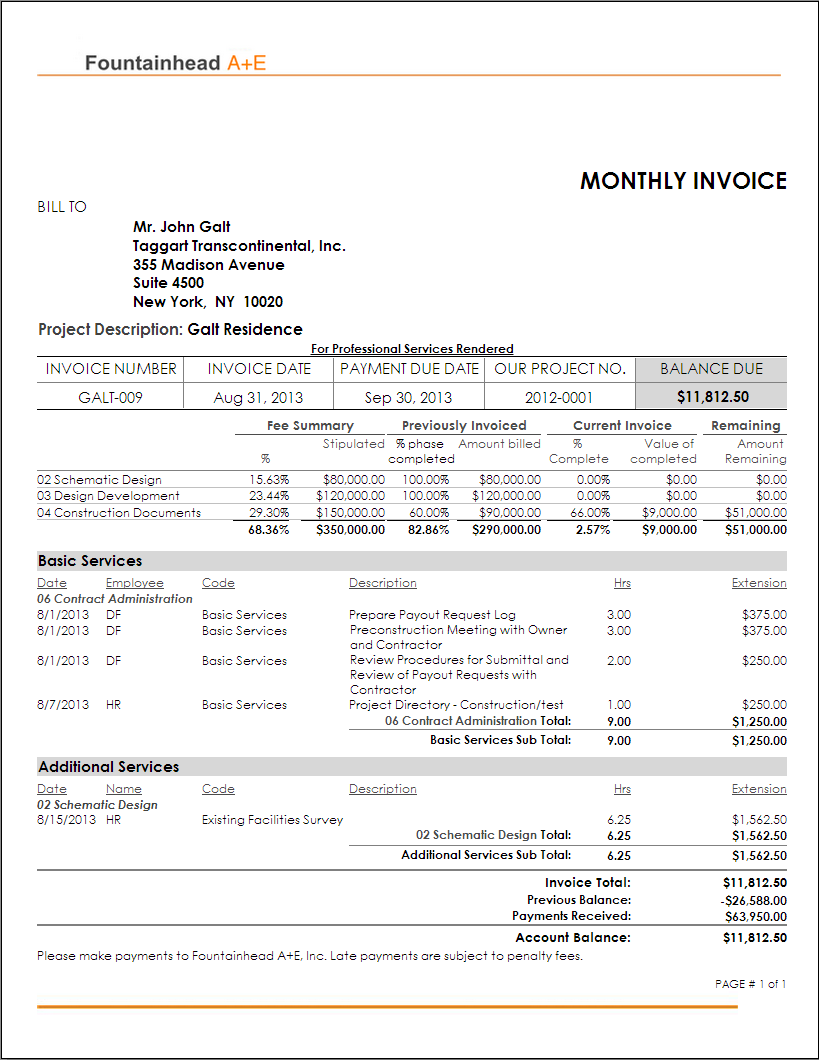

- invoice.png +0 -0

- requirements.txt +7 -0

Dockerfile

ADDED

@@ -0,0 +1,23 @@
+FROM python:3.9
+
+RUN pip install virtualenv
+ENV VIRTUAL_ENV=/venv
+RUN virtualenv venv -p python3
+ENV PATH="$VIRTUAL_ENV/bin:$PATH"
+WORKDIR /app
+ADD . /app
+
+# Install dependencies
+RUN apt-get update
+RUN apt-get install -y tesseract-ocr
+
+RUN pip install -r requirements.txt
+
+RUN git clone https://github.com/facebookresearch/detectron2.git
+RUN python -m pip install -e detectron2
+
+# Expose port
+EXPOSE 5000
+
+# Run the application:
+CMD ["python", "app.py"]

app.py

ADDED

@@ -0,0 +1,81 @@
+import gradio as gr
+import numpy as np
+from transformers import LayoutLMv2Processor, LayoutLMv2ForTokenClassification
+from datasets import load_dataset
+from PIL import Image, ImageDraw, ImageFont
+
+processor = LayoutLMv2Processor.from_pretrained("microsoft/layoutlmv2-base-uncased")
+model = LayoutLMv2ForTokenClassification.from_pretrained("nielsr/layoutlmv2-finetuned-funsd")
+
+# load image example
+dataset = load_dataset("nielsr/funsd", split="test")
+image = Image.open(dataset[0]["image_path"]).convert("RGB")
+image = Image.open("./invoice.png")
+image.save("document.png")
+# define id2label, label2color
+labels = dataset.features['ner_tags'].feature.names
+id2label = {v: k for v, k in enumerate(labels)}
+label2color = {'question':'blue', 'answer':'green', 'header':'orange', 'other':'violet'}
+
+def unnormalize_box(bbox, width, height):
+    return [
+        width * (bbox[0] / 1000),
+        height * (bbox[1] / 1000),
+        width * (bbox[2] / 1000),
+        height * (bbox[3] / 1000),
+    ]
+
+def iob_to_label(label):
+    label = label[2:]
+    if not label:
+        return 'other'
+    return label
+
+def process_image(image):
+    width, height = image.size
+
+    # encode
+    encoding = processor(image, truncation=True, return_offsets_mapping=True, return_tensors="pt")
+    offset_mapping = encoding.pop('offset_mapping')
+
+    # forward pass
+    outputs = model(**encoding)
+
+    # get predictions
+    predictions = outputs.logits.argmax(-1).squeeze().tolist()
+    token_boxes = encoding.bbox.squeeze().tolist()
+
+    # only keep non-subword predictions
+    is_subword = np.array(offset_mapping.squeeze().tolist())[:,0] != 0
+    true_predictions = [id2label[pred] for idx, pred in enumerate(predictions) if not is_subword[idx]]
+    true_boxes = [unnormalize_box(box, width, height) for idx, box in enumerate(token_boxes) if not is_subword[idx]]
+
+    # draw predictions over the image
+    draw = ImageDraw.Draw(image)
+    font = ImageFont.load_default()
+    for prediction, box in zip(true_predictions, true_boxes):
+        predicted_label = iob_to_label(prediction).lower()
+        draw.rectangle(box, outline=label2color[predicted_label])
+        draw.text((box[0]+10, box[1]-10), text=predicted_label, fill=label2color[predicted_label], font=font)
+
+    return image
+
+
+title = "Interactive demo: LayoutLMv2"
+description = "Demo for Microsoft's LayoutLMv2, a Transformer for state-of-the-art document image understanding tasks. This particular model is fine-tuned on FUNSD, a dataset of manually annotated forms. It annotates the words appearing in the image as QUESTION/ANSWER/HEADER/OTHER. To use it, simply upload an image or use the example image below and click 'Submit'. Results will show up in a few seconds. If you want to make the output bigger, right-click on it and select 'Open image in new tab'."
+article = "<p style='text-align: center'><a href='https://arxiv.org/abs/2012.14740' target='_blank'>LayoutLMv2: Multi-modal Pre-training for Visually-Rich Document Understanding</a> | <a href='https://github.com/microsoft/unilm' target='_blank'>Github Repo</a></p>"
+examples =[['document.png']]
+
+css = ".output-image, .input-image {height: 40rem !important; width: 100% !important;}"
+
+css = ".image-preview {height: auto !important;}"
+
+iface = gr.Interface(fn=process_image,
+                     inputs=gr.components.Image(type="pil"),
+                     outputs=gr.components.Image(type="pil", label="annotated image"),
+                     title=title,
+                     description=description,
+                     article=article,
+                     examples=examples,
+                     css=css)
+iface.launch(debug=True, enable_queue=True, share=True)
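The post-processing steps in `process_image` can be tried without downloading the model. The sketch below is only an illustration under stated assumptions: `offsets`, `predictions`, `token_boxes`, the four-entry `id2label` map, and the 800x600 page size are hypothetical hand-written stand-ins, not real outputs of the FUNSD checkpoint. It reuses `unnormalize_box` and `iob_to_label` as they appear in app.py, and does the subword filter with plain lists where app.py uses NumPy.

```python
def unnormalize_box(bbox, width, height):
    # Boxes arrive on a 0-1000 grid; scale them back to pixel coordinates.
    return [
        width * (bbox[0] / 1000),
        height * (bbox[1] / 1000),
        width * (bbox[2] / 1000),
        height * (bbox[3] / 1000),
    ]


def iob_to_label(label):
    # Drop the "B-" / "I-" prefix; a bare "O" leaves nothing and maps to "other".
    label = label[2:]
    if not label:
        return "other"
    return label


# Hypothetical stand-ins for the processor and model outputs (assumed values).
id2label = {0: "O", 1: "B-HEADER", 2: "B-QUESTION", 3: "I-QUESTION"}
offsets = [(0, 0), (0, 4), (4, 7), (0, 5)]  # (start, end) character offsets per token
predictions = [0, 2, 3, 1]                  # one label id per token
token_boxes = [
    [0, 0, 0, 0],
    [100, 200, 300, 250],
    [100, 200, 300, 250],
    [500, 50, 900, 100],
]

# A token whose start offset is non-zero continues the previous word.
is_subword = [start != 0 for start, _ in offsets]

kept_labels = [
    iob_to_label(id2label[pred]).lower()
    for pred, sub in zip(predictions, is_subword)
    if not sub
]
kept_boxes = [
    unnormalize_box(box, width=800, height=600)
    for box, sub in zip(token_boxes, is_subword)
    if not sub
]

print(kept_labels)  # ['other', 'question', 'header']
print(kept_boxes)
```

Only the third token is dropped, because its offset starts at 4 rather than 0. Tokens with offset `(0, 0)`, such as the first one here, pass the filter and are labeled like any other token.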

document.png

ADDED

invoice.png

ADDED

requirements.txt

ADDED

@@ -0,0 +1,7 @@
+gradio
+Pillow
+numpy
+datasets
+transformers
+torch
+torchvision