Update README.md

README.md

@@ -1,13 +1,16 @@

---
language:
tags:
-
- data augmentation
- keywords-to-text generation
- sketch-to-text generation
license: apache-2.0
datasets:
- c4

widget:

@@ -28,48 +31,59 @@ inference:

num_beams: 3
do_sample: True
---

**

**Sketch-based generative augmentation model**

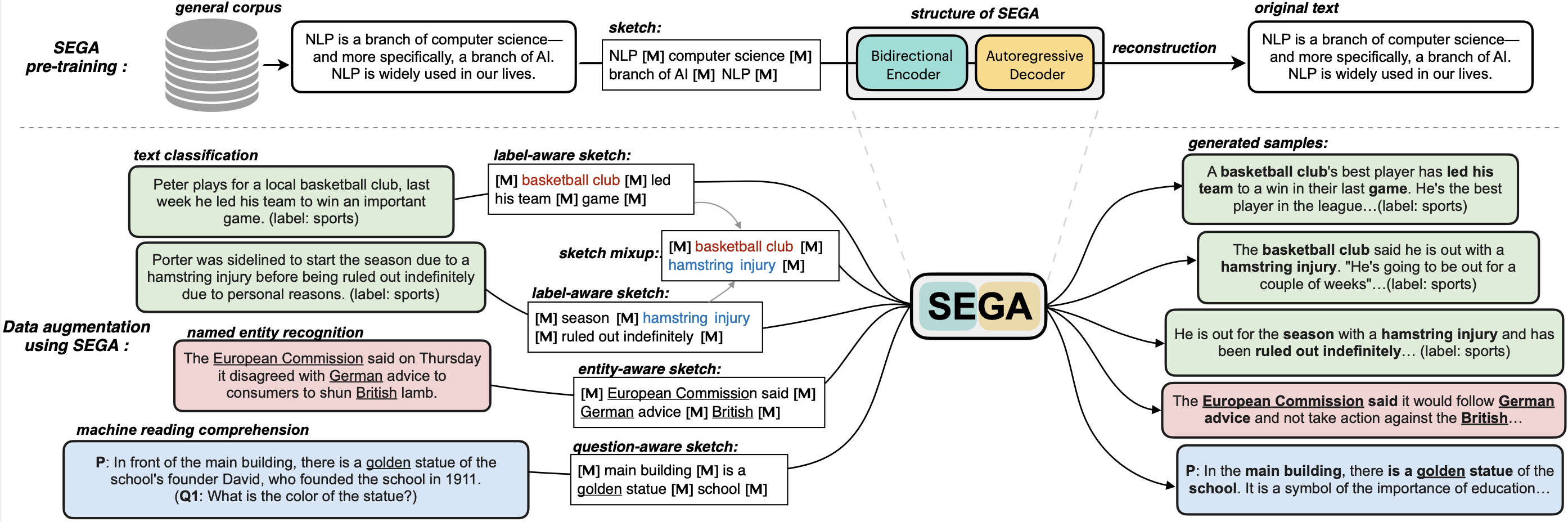

**SEGA** is a **general text augmentation model** that can be used for data augmentation for **various NLP tasks** (including sentiment analysis, topic classification, NER, and QA). SEGA uses an encoder-decoder structure (based on the BART architecture) and is pre-trained on the `C4-realnewslike` corpus.


- GitHub: [SEGA](https://github.com/beyondguo/SEGA).


**SEGA** is able to write complete paragraphs given a *sketch*, which can be composed of:

- keywords/key-phrases, like "––NLP––AI––computer––science––"
- spans, like "Conference on Empirical Methods––submission of research papers––"
- sentences, like "I really like machine learning––I work at Google since last year––"
- or a mix of these.


**Model variations:**

| Model | #params | Language | Comment |
|------------------------|--------------------------------|-------|---------|
| [`
| [`
| [`
| [`
| [`

---


```python
from transformers import pipeline
# 1. load the model with the huggingface `pipeline`

# 2. provide a sketch (joined by <mask> tokens)
sketch = "<mask> Conference on Empirical Methods <mask> submission of research papers <mask> Deep Learning <mask>"
# 3.
generated_text =
print(generated_text)
```

Output:

@@ -77,14 +91,20 @@ Output:

```
'The Conference on Empirical Methods welcomes the submission of research papers. Abstracts should be in the form of a paper or presentation. Please submit abstracts to the following email address: eemml.stanford.edu. The conference will be held at Stanford University on April 1618, 2019. The theme of the conference is Deep Learning.'
```

---

- Setting: Low-resource setting, where only n={50,100,200,500,1000} labeled samples are available for training. The results below are averaged over all training sizes.
- Datasets: [HuffPost](https://huggingface.co/datasets/khalidalt/HuffPost), [BBC](https://huggingface.co/datasets/SetFit/bbc-news), [SST2](https://huggingface.co/datasets/glue), [IMDB](https://huggingface.co/datasets/imdb), [Yahoo](https://huggingface.co/datasets/yahoo_answers_topics), [20NG](https://huggingface.co/datasets/newsgroup).
- Base classifier: [DistilBERT](https://huggingface.co/distilbert-base-cased)

@@ -98,8 +118,8 @@ In-distribution (ID) evaluations:

| C-MLM | 80.60 | 96.13 | 45.40 | 46.36 | 77.31 | 76.91 | 70.45 |
| LAMBADA | 81.46 | 93.74 | 50.49 | 47.72 | 78.22 | 78.31 | 71.66 |
| STA | 80.74 | 95.64 | 46.96 | 47.27 | 77.88 | 77.80 | 71.05 |
| **
| **

Out-of-distribution (OOD) evaluations:

| | Huff->BBC | BBC->Huff | IMDB->SST2 | SST2->IMDB | avg. |

@@ -111,10 +131,11 @@ Out-of-distribution (OOD) evaluations:

| C-MLM | 64.94 | **67.80** | 74.98 | 71.78 | 69.87 |
| LAMBADA | 68.57 | 52.79 | 75.24 | 76.04 | 68.16 |
| STA | 69.31 | 64.82 | 74.72 | 73.62 | 70.61 |
| **
| **

### BibTeX entry and citation info

---
language:
- en
- zh
tags:
- GENIUS
- conditional text generation
- sketch-based text generation
- data augmentation
license: apache-2.0
datasets:
- c4
- beyond/chinese_clean_passages_80m

widget:

num_beams: 3
do_sample: True
---

# 💡GENIUS – generating text using sketches!

- **Paper: [GENIUS: Sketch-based Language Model Pre-training via Extreme and Selective Masking for Text Generation and Augmentation](https://github.com/beyondguo/genius/blob/master/GENIUS_gby_arxiv.pdf)**
- **GitHub: [GENIUS project, GENIUS pre-training, GeniusAug for data augmentation](https://github.com/beyondguo/genius)**

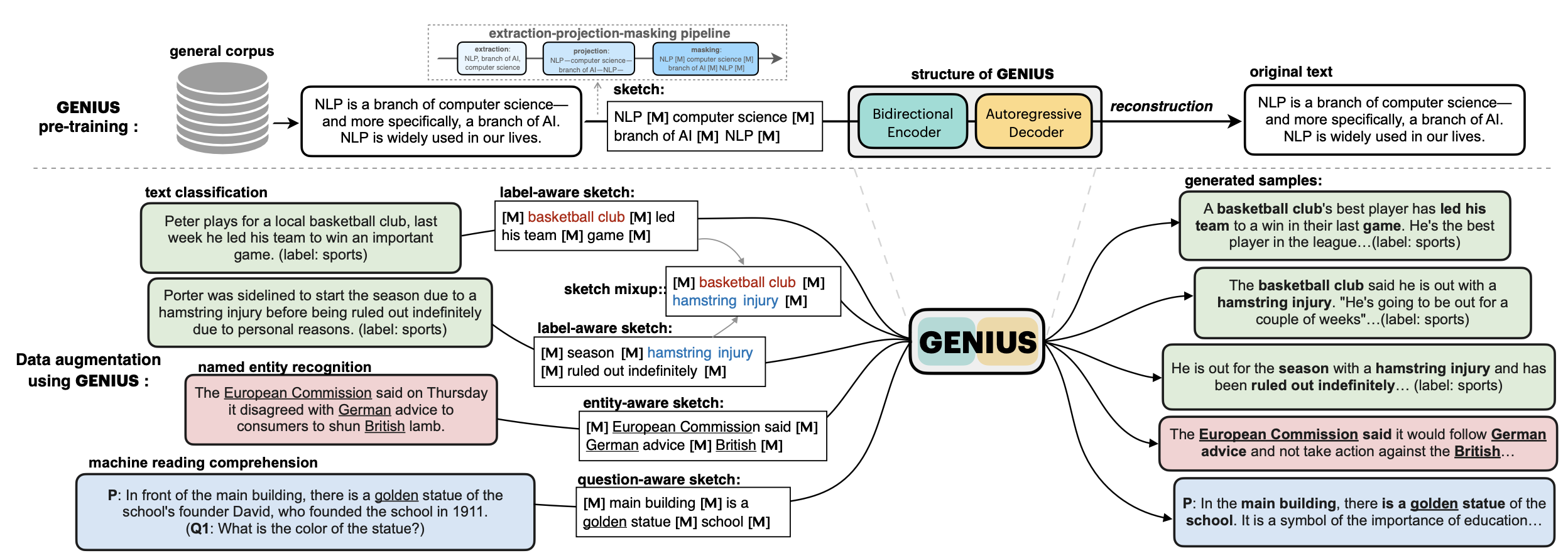

💡**GENIUS** is a powerful conditional text generation model using sketches as input, which can fill in the missing contexts for a given **sketch** (key information consisting of textual spans, phrases, or words, concatenated by mask tokens). GENIUS is pre-trained on a large-scale textual corpus with a novel *reconstruction from sketch* objective using an *extreme and selective masking* strategy, enabling it to generate diverse and high-quality texts given sketches.
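
To make the *reconstruction from sketch* objective concrete, the toy function below builds one (sketch, target) training pair. It is a simplified illustration under our own assumptions, not the GENIUS pre-training code: `make_sketch_pair` is a hypothetical name, and the positions to keep (`keep`) are supplied by hand, whereas GENIUS chooses them with its *extreme and selective masking* strategy. Each run of removed tokens collapses into a single mask token, which yields the same sketch format used in the examples in this README.

```python
from typing import List, Set, Tuple


def make_sketch_pair(tokens: List[str], keep: Set[int], mask_token: str = "<mask>") -> Tuple[str, str]:
    """Hypothetical helper: build a (sketch, target) pair for sketch-to-text reconstruction.

    Tokens whose index is in `keep` are copied into the sketch; every maximal run of
    dropped tokens is replaced by a single mask token. The target is the original text.
    """
    pieces: List[str] = []
    in_masked_run = False
    for i, tok in enumerate(tokens):
        if i in keep:
            pieces.append(tok)
            in_masked_run = False
        elif not in_masked_run:
            pieces.append(mask_token)  # first dropped token of a new run
            in_masked_run = True
        # further tokens inside an ongoing masked run are skipped
    return " ".join(pieces), " ".join(tokens)


tokens = "The conference welcomes the submission of research papers".split()
sketch, target = make_sketch_pair(tokens, keep={1, 4, 5, 6, 7})
print(sketch)  # <mask> conference <mask> submission of research papers
```

A model trained on many such pairs learns to expand a sketch back into the full text. This toy example drops only 3 of 8 tokens; "extreme" masking implies removing a much larger share.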


**GENIUS** can also be used as a general textual **data augmentation tool** for **various NLP tasks** (including sentiment analysis, topic classification, NER, and QA).

- Models hosted on 🤗 Hugging Face:

**Model variations:**

| Model | #params | Language | Comment |
|------------------------|--------------------------------|-------|---------|
| [`genius-large`](https://huggingface.co/beyond/genius-large) | 406M | English | The version used in the **paper** (recommended) |
| [`genius-large-k2t`](https://huggingface.co/beyond/genius-large-k2t) | 406M | English | keywords-to-text |
| [`genius-base`](https://huggingface.co/beyond/genius-base) | 139M | English | smaller version |
| [`genius-base-ps`](https://huggingface.co/beyond/genius-base) | 139M | English | pre-trained on both paragraphs and short sentences |
| [`genius-base-chinese`](https://huggingface.co/beyond/genius-base-chinese) | 116M | Chinese | pre-trained on 10 million clean Chinese paragraphs |

---

## Usage

### What is a sketch?

First, what is a **sketch**? As defined in our paper, a sketch is "key information consisting of textual spans, phrases, or words, concatenated by mask tokens". It is like the draft or outline you jot down before writing an article. With the GENIUS model, you can input the key elements you want to mention in your writing, and the model generates coherent text based on your sketch.

A sketch can be composed of:

- keywords/key-phrases, like `__NLP__AI__computer__science__`
- spans, like `Conference on Empirical Methods__submission of research papers__`
- sentences, like `I really like machine learning__I work at Google since last year__`
- or a mix of these!

(Here `__` stands for the mask token: use `<mask>` for English models and `[MASK]` for Chinese models.)
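
If your key elements are already in a list, a sketch string can be assembled programmatically. The following is a minimal sketch: `build_sketch` is a hypothetical helper (not part of GENIUS or `transformers`), and dropping the spaces around `[MASK]` for Chinese input (`spaced=False`) is our assumption, not something this README specifies.

```python
from typing import Iterable


def build_sketch(parts: Iterable[str], mask_token: str = "<mask>", spaced: bool = True) -> str:
    """Hypothetical helper: join key elements with mask tokens, with a mask at both ends.

    spaced=True reproduces the English example format used in this README;
    spaced=False (no spaces around the mask) is an assumption for Chinese input.
    """
    kept = [p.strip() for p in parts if p.strip()]  # ignore empty elements
    joiner = f" {mask_token} " if spaced else mask_token
    # Empty strings at both ends put a mask token before the first and after the last element.
    return joiner.join([""] + kept + [""]).strip()


print(build_sketch(["Conference on Empirical Methods", "submission of research papers", "Deep Learning"]))
# prints: <mask> Conference on Empirical Methods <mask> submission of research papers <mask> Deep Learning <mask>
```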

### How to use the model

#### 1. If you already have a sketch in mind and want a paragraph based on it...

```python
from transformers import pipeline
# 1. load the model with the huggingface `pipeline`
#    (device=0 runs on the first GPU; use device=-1 to run on CPU)
genius = pipeline("text2text-generation", model='beyond/genius-large', device=0)
# 2. provide a sketch (joined by <mask> tokens)
sketch = "<mask> Conference on Empirical Methods <mask> submission of research papers <mask> Deep Learning <mask>"
# 3. here we go!
generated_text = genius(sketch, num_beams=3, do_sample=True, max_length=200)[0]['generated_text']
print(generated_text)
```

Output:

```
'The Conference on Empirical Methods welcomes the submission of research papers. Abstracts should be in the form of a paper or presentation. Please submit abstracts to the following email address: eemml.stanford.edu. The conference will be held at Stanford University on April 1618, 2019. The theme of the conference is Deep Learning.'
```
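
For augmentation you often want several different paragraphs per sketch. A minimal sketch, assuming the `text2text-generation` pipeline forwards standard generation arguments such as `num_return_sequences` to the model's `generate` method; `generate_variants` is a hypothetical helper, not part of GENIUS.

```python
from typing import Any, Callable, List


def generate_variants(generator: Callable[..., Any], sketch: str, n: int = 3,
                      max_length: int = 200) -> List[str]:
    """Hypothetical helper: return n sampled paragraphs for one sketch.

    `generator` is assumed to be a "text2text-generation" pipeline; for a single
    string input it returns one {"generated_text": ...} dict per returned sequence.
    """
    outputs = generator(
        sketch,
        num_beams=max(3, n),  # beam search cannot return more sequences than beams
        do_sample=True,
        num_return_sequences=n,
        max_length=max_length,
    )
    return [out["generated_text"] for out in outputs]
```

With the pipeline from the example above, `generate_variants(genius, sketch, n=3)` would return three candidate paragraphs; because sampling is enabled, the results differ between runs.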

If you have many sketches, you can batch them into a Hugging Face `Dataset` object, which can be much faster than processing them one at a time.
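
A minimal sketch of that batching pattern, assuming the 🤗 `datasets` library's `Dataset.map(..., batched=True)` and the `genius` pipeline from above. The function and column names (`add_generations`, `sketch`, `generated_text`) are our own choices, not part of GENIUS.

```python
from typing import Any, Callable, Dict, List


def add_generations(batch: Dict[str, List[Any]], generator: Callable[..., Any],
                    **gen_kwargs: Any) -> Dict[str, List[Any]]:
    """Hypothetical batched map function: generate one paragraph per sketch in `batch`.

    `Dataset.map(..., batched=True)` passes a dict of column lists. `generator` is
    assumed to be a "text2text-generation" pipeline: given a list of strings it returns
    one dict per input, or a list of dicts per input when several sequences are
    requested (only the first one is kept here).
    """
    outputs = generator(batch["sketch"], **gen_kwargs)
    texts = []
    for out in outputs:
        first = out[0] if isinstance(out, list) else out
        texts.append(first["generated_text"])
    batch["generated_text"] = texts
    return batch
```

For example, with a list `sketches`: `ds = Dataset.from_dict({"sketch": sketches}).map(add_generations, batched=True, batch_size=64, fn_kwargs={"generator": genius, "batch_size": 16, "num_beams": 3, "do_sample": True, "max_length": 200})`. The outer `batch_size` sets how many rows `map` hands to the function at once, while the `batch_size` inside `fn_kwargs` is forwarded to the pipeline call and sets how many sketches pass through the model together.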

TODO: we are also building a Python package to make GENIUS more convenient to use, which will be released in a few weeks.

#### 2. If you have an NLP dataset (e.g. classification) and want to do data augmentation to enlarge your dataset...

Please check [genius/augmentation_clf](https://github.com/beyondguo/genius/tree/master/augmentation_clf) and [genius/augmentation_ner_qa](https://github.com/beyondguo/genius/tree/master/augmentation_ner_qa), where we provide ready-to-run scripts for data augmentation for text classification/NER/MRC tasks.
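
The linked scripts are the reference implementation. As a rough illustration of the idea only, here is a hypothetical loop that turns each labeled example into a keyword sketch, generates new text from it, and reuses the original label. The keyword heuristic (drop a small stopword list, keep the longest remaining words) is a placeholder chosen for brevity and is not how GeniusAug extracts sketches; `naive_sketch` and `augment` are hypothetical names.

```python
import re
from typing import Any, Callable, Iterable, List, Tuple

# Tiny illustrative stopword list (placeholder; not GeniusAug's sketch extractor)
STOPWORDS = {"a", "an", "the", "is", "are", "was", "were", "and", "or", "of",
             "to", "in", "on", "for", "it", "this", "that", "i", "with"}


def naive_sketch(text: str, max_keywords: int = 4, mask_token: str = "<mask>") -> str:
    """Hypothetical: keep up to `max_keywords` non-stopwords (longest first), in reading order."""
    words = [w for w in re.findall(r"[A-Za-z0-9']+", text) if w.lower() not in STOPWORDS]
    # Rank positions by word length (stable sort keeps earlier words on ties),
    # take the top ones, then restore their original order.
    top = sorted(sorted(range(len(words)), key=lambda i: -len(words[i]))[:max_keywords])
    kept = [words[i] for i in top]
    return f" {mask_token} ".join([""] + kept + [""]).strip()


def augment(examples: Iterable[Tuple[str, Any]], generator: Callable[..., Any],
            n_per_example: int = 1, **gen_kwargs: Any) -> List[Tuple[str, Any]]:
    """Hypothetical: generate `n_per_example` new texts per (text, label) pair, keeping the label."""
    augmented: List[Tuple[str, Any]] = []
    for text, label in examples:
        outputs = generator(naive_sketch(text), num_return_sequences=n_per_example, **gen_kwargs)
        augmented.extend((out["generated_text"], label) for out in outputs)
    return augmented
```

With the pipeline from above, a call might look like `augment(train_pairs, genius, n_per_example=2, num_beams=3, do_sample=True, max_length=200)`, where `train_pairs` is a hypothetical list of `(text, label)` tuples.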

## Augmentation Experiments

Data augmentation is an important application of natural language generation (NLG) models, and it also provides a valuable test of whether the generated text is usable in real applications.

- Setting: Low-resource setting, where only n={50,100,200,500,1000} labeled samples are available for training. The results below are averaged over all training sizes.
- Text Classification Datasets: [HuffPost](https://huggingface.co/datasets/khalidalt/HuffPost), [BBC](https://huggingface.co/datasets/SetFit/bbc-news), [SST2](https://huggingface.co/datasets/glue), [IMDB](https://huggingface.co/datasets/imdb), [Yahoo](https://huggingface.co/datasets/yahoo_answers_topics), [20NG](https://huggingface.co/datasets/newsgroup).
- Base classifier: [DistilBERT](https://huggingface.co/distilbert-base-cased)

| C-MLM | 80.60 | 96.13 | 45.40 | 46.36 | 77.31 | 76.91 | 70.45 |
| LAMBADA | 81.46 | 93.74 | 50.49 | 47.72 | 78.22 | 78.31 | 71.66 |
| STA | 80.74 | 95.64 | 46.96 | 47.27 | 77.88 | 77.80 | 71.05 |
| **GeniusAug** | 81.43 | 95.74 | 49.60 | 50.38 | **80.16** | 78.82 | 72.68 |
| **GeniusAug-f** | **81.82** | 95.99 | **50.42** | **50.81** | 79.40 | **80.57** | **73.17** |

Out-of-distribution (OOD) evaluations:

| | Huff->BBC | BBC->Huff | IMDB->SST2 | SST2->IMDB | avg. |
| C-MLM | 64.94 | **67.80** | 74.98 | 71.78 | 69.87 |
| LAMBADA | 68.57 | 52.79 | 75.24 | 76.04 | 68.16 |
| STA | 69.31 | 64.82 | 74.72 | 73.62 | 70.61 |
| **GeniusAug** | 74.87 | 66.85 | 76.02 | 74.76 | 73.13 |
| **GeniusAug-f** | **76.18** | 66.89 | **77.45** | **80.36** | **75.22** |
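
As a quick consistency check on the OOD table, assuming the `avg.` column is the unweighted mean of the four transfer directions, the snippet below recomputes the averages from the listed scores. The recomputed means agree with the reported values to within about 0.01, presumably because the reported averages were computed from unrounded per-setting scores.

```python
# OOD scores copied from the table above: Huff->BBC, BBC->Huff, IMDB->SST2, SST2->IMDB
OOD_SCORES = {
    "C-MLM": [64.94, 67.80, 74.98, 71.78],
    "LAMBADA": [68.57, 52.79, 75.24, 76.04],
    "STA": [69.31, 64.82, 74.72, 73.62],
    "GeniusAug": [74.87, 66.85, 76.02, 74.76],
    "GeniusAug-f": [76.18, 66.89, 77.45, 80.36],
}
# The reported "avg." column
REPORTED_AVG = {"C-MLM": 69.87, "LAMBADA": 68.16, "STA": 70.61,
                "GeniusAug": 73.13, "GeniusAug-f": 75.22}


def recompute_averages(scores):
    """Unweighted mean over the four transfer directions for each method."""
    return {name: sum(values) / len(values) for name, values in scores.items()}


for name, mean in recompute_averages(OOD_SCORES).items():
    print(f"{name:12s} recomputed={mean:.4f} reported={REPORTED_AVG[name]:.2f}")
```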

### BibTeX entry and citation info

TBD