Updating model files


README.md


|

@@ -5,6 +5,17 @@ tags:

|

|

| 5 |

- causal-lm

|

| 6 |

- llama

|

| 7 |

---

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 8 |

# Wizard-Vicuna-13B-HF

|

| 9 |

|

| 10 |

This is a float16 HF format repo for [junelee's wizard-vicuna 13B](https://huggingface.co/junelee/wizard-vicuna-13b).

|

|

@@ -19,6 +30,18 @@ This model was converted to float16 to make it easier to load and manage.

|

|

| 19 |

* [4bit and 5bit GGML models for CPU inference](https://huggingface.co/TheBloke/wizard-vicuna-13B-GGML).

|

| 20 |

* [float16 HF format model for GPU inference](https://huggingface.co/TheBloke/wizard-vicuna-13B-HF).

|

| 21 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 22 |

# Original WizardVicuna-13B model card

|

| 23 |

|

| 24 |

Github page: https://github.com/melodysdreamj/WizardVicunaLM

|

|

@@ -33,7 +56,7 @@ I am a big fan of the ideas behind WizardLM and VicunaLM. I particularly like th

|

|

| 33 |

|

| 34 |

|

| 35 |

|

| 36 |

-

### Detail

|

| 37 |

|

| 38 |

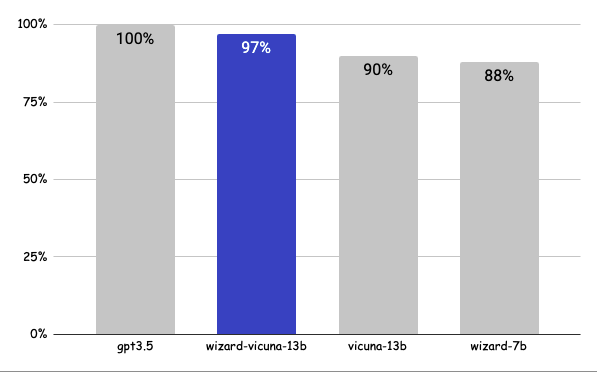

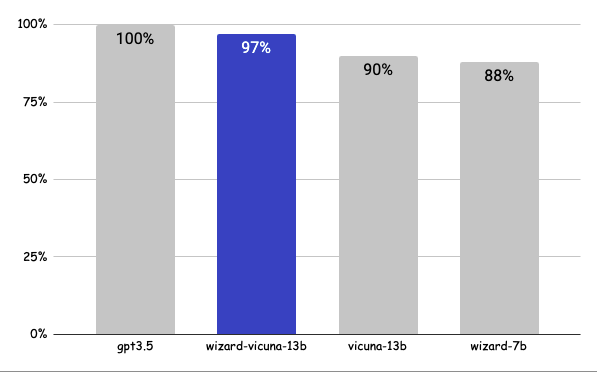

The questions presented here are not from rigorous tests, but rather, I asked a few questions and requested GPT-4 to score them. The models compared were ChatGPT 3.5, WizardVicunaLM, VicunaLM, and WizardLM, in that order.

|

| 39 |

|

|

|

|

- causal-lm
- llama
---
<div style="width: 100%;">
<img src="https://i.imgur.com/EBdldam.jpg" alt="TheBlokeAI" style="width: 100%; min-width: 400px; display: block; margin: auto;">
</div>
<div style="display: flex; justify-content: space-between; width: 100%;">
<div style="display: flex; flex-direction: column; align-items: flex-start;">
<p><a href="https://discord.gg/UBgz4VXf">Chat & support: my new Discord server</a></p>
</div>
<div style="display: flex; flex-direction: column; align-items: flex-end;">
<p><a href="https://www.patreon.com/TheBlokeAI">Want to contribute? Patreon coming soon!</a></p>
</div>
</div>

# Wizard-Vicuna-13B-HF

This is a float16 HF format repo for [junelee's wizard-vicuna 13B](https://huggingface.co/junelee/wizard-vicuna-13b).

This model was converted to float16 to make it easier to load and manage.
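A minimal loading sketch, assuming the `transformers` and `torch` packages are installed (plus `accelerate`, which `device_map="auto"` relies on) and that a GPU with enough memory for the float16 weights is available. The repo id is the float16 GPU repo linked in this card; the helper name `generate_reply` and the generation settings are hypothetical and only for illustration. The heavy imports happen inside the function so the snippet can be imported without downloading anything.

```python
def generate_reply(prompt: str,
                   repo_id: str = "TheBloke/wizard-vicuna-13B-HF",
                   max_new_tokens: int = 128) -> str:
    """Hypothetical helper: load the float16 weights and continue `prompt`."""
    # Imported lazily so defining this function does not require torch/transformers.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(repo_id)
    # torch_dtype=torch.float16 keeps the weights in half precision (2 bytes per parameter).
    # device_map="auto" needs the `accelerate` package and spreads layers over available devices.
    model = AutoModelForCausalLM.from_pretrained(
        repo_id, torch_dtype=torch.float16, device_map="auto"
    )

    inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
    with torch.no_grad():
        output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Slice off the prompt tokens and decode only the newly generated continuation.
    new_tokens = output_ids[0, inputs["input_ids"].shape[1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Usage: `print(generate_reply("Explain what float16 means."))`. The first call downloads the full float16 checkpoint, so expect a large download. No particular prompt template is assumed here.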

* [4bit and 5bit GGML models for CPU inference](https://huggingface.co/TheBloke/wizard-vicuna-13B-GGML).
* [float16 HF format model for GPU inference](https://huggingface.co/TheBloke/wizard-vicuna-13B-HF).
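For a rough, back-of-the-envelope comparison of the formats listed above, the sketch below estimates raw weight storage from parameter count and bits per weight. It assumes a nominal 13 × 10^9 parameters and ignores the per-block scale factors and metadata that GGML quantisation formats add, so real GGML files will be somewhat larger than the 4-bit and 5-bit figures. `NOMINAL_PARAMS` and `weight_bytes` are hypothetical names.

```python
# Assumption: nominal "13B" parameter count; the exact LLaMA-13B count differs slightly.
NOMINAL_PARAMS = 13_000_000_000


def weight_bytes(n_params: int, bits_per_weight: float) -> int:
    """Raw storage for the weights alone, ignoring quantisation scales and file metadata."""
    return int(n_params * bits_per_weight / 8)


if __name__ == "__main__":
    for label, bits in [("float16 (GPU)", 16), ("5-bit GGML (CPU)", 5), ("4-bit GGML (CPU)", 4)]:
        gb = weight_bytes(NOMINAL_PARAMS, bits) / 1e9
        print(f"{label:<18} ~{gb:.1f} GB")
```

Under these assumptions the estimate is about 26.0 GB for float16, 8.1 GB for 5-bit and 6.5 GB for 4-bit weights.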

## Want to support my work?

I've had a lot of people ask if they can contribute. I love providing models and helping people, but it is starting to rack up pretty big cloud computing bills.

So if you're able and willing to contribute, it would be most gratefully received and will help me keep providing models and working on various AI projects.

Donors will get priority support on any and all AI/LLM/model questions, and I'll gladly quantise any model you'd like to try.

* Patreon: coming soon! (just awaiting approval)
* Ko-Fi: https://ko-fi.com/TheBlokeAI
* Discord: https://discord.gg/UBgz4VXf

# Original WizardVicuna-13B model card

GitHub page: https://github.com/melodysdreamj/WizardVicunaLM

### Detail

|

| 60 |

|

| 61 |

The questions presented here are not from rigorous tests, but rather, I asked a few questions and requested GPT-4 to score them. The models compared were ChatGPT 3.5, WizardVicunaLM, VicunaLM, and WizardLM, in that order.

|

| 62 |

|