# H2O's H2OGPT Research OASST1 LLaMa 65B GPTQ

These files are GPTQ 4-bit model files for [H2O's H2OGPT Research OASST1 LLaMa 65B](https://huggingface.co/h2oai/h2ogpt-research-oasst1-llama-65b).

They are the result of quantising to 4-bit using [GPTQ-for-LLaMa](https://github.com/qwopqwop200/GPTQ-for-LLaMa).

## Repositories available

* [4-bit GPTQ models for GPU inference](https://huggingface.co/TheBloke/h2ogpt-research-oasst1-llama-65B-GPTQ)

* [2, 3, 4, 5, 6 and 8-bit GGML models for CPU+GPU inference](https://huggingface.co/TheBloke/h2ogpt-research-oasst1-llama-65B-GGML)

* [Unquantised fp16 model in pytorch format, for GPU inference and for further conversions](https://huggingface.co/h2oai/h2ogpt-research-oasst1-llama-65b)

## How to easily download and use this model in text-generation-webui

Please make sure you're using the latest version of text-generation-webui.

1. Click the **Model tab**.

2. Under **Download custom model or LoRA**, enter `TheBloke/h2ogpt-research-oasst1-llama-65B-GPTQ`.

3. Click **Download**.

4. The model will start downloading. Once it's finished it will say "Done".

5. In the top left, click the refresh icon next to **Model**.

6. In the **Model** dropdown, choose the model you just downloaded: `h2ogpt-research-oasst1-llama-65B-GPTQ`

7. The model will automatically load, and is now ready for use!

8. If you want any custom settings, set them and then click **Save settings for this model** followed by **Reload the Model** in the top right.

* Note that you do not need to and should not set manual GPTQ parameters any more. These are set automatically from the file `quantize_config.json`.

9. Once you're ready, click the **Text Generation tab** and enter a prompt to get started!
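The model loads without manual GPTQ parameters because they are set automatically from `quantize_config.json`. If you want to check what a downloaded copy contains, a minimal sketch like the one below can read it. It assumes the AutoGPTQ-style key names `bits`, `group_size` and `desc_act`; the helper name `summarise_quantize_config` and the sample values are illustrative only, chosen to match the parameters listed for this model under Provided files.

```python
import json
import tempfile
from pathlib import Path


def summarise_quantize_config(path: str) -> dict:
    """Return the main GPTQ settings from a quantize_config.json file.

    Assumes AutoGPTQ-style key names ("bits", "group_size", "desc_act");
    any other keys in the file are ignored.
    """
    cfg = json.loads(Path(path).read_text())
    return {
        "bits": cfg.get("bits"),
        # -1 means no grouping: one scale/zero pair per output channel
        "group_size": cfg.get("group_size"),
        # desc_act is the AutoGPTQ name for GPTQ-for-LLaMa's --act-order
        "act_order": cfg.get("desc_act"),
    }


if __name__ == "__main__":
    # Hypothetical file contents matching the parameters listed for this model
    sample = {"bits": 4, "group_size": -1, "desc_act": True}
    with tempfile.TemporaryDirectory() as tmp:
        cfg_path = Path(tmp) / "quantize_config.json"
        cfg_path.write_text(json.dumps(sample))
        print(summarise_quantize_config(str(cfg_path)))
```

To inspect a real download, point the function at the `quantize_config.json` inside your downloaded model folder instead of the temporary sample file.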

## How to use this GPTQ model from Python code

First make sure you have [AutoGPTQ](https://github.com/PanQiWei/AutoGPTQ) installed:

`pip install auto-gptq`

Then try the following example code:

```python
from transformers import AutoTokenizer, pipeline, logging
from auto_gptq import AutoGPTQForCausalLM

model_name_or_path = "TheBloke/h2ogpt-research-oasst1-llama-65B-GPTQ"
model_basename = "h2ogpt-research-oasst1-llama-65b-GPTQ-4bit--1g.act.order"

use_triton = False

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path, use_fast=True)

# With quantize_config=None, the GPTQ parameters are read from quantize_config.json
model = AutoGPTQForCausalLM.from_quantized(model_name_or_path,
        model_basename=model_basename,
        use_safetensors=True,
        trust_remote_code=False,
        device="cuda:0",
        use_triton=use_triton,
        quantize_config=None)

# The original model card uses prompt_type="human_bot", i.e. h2oGPT's <human>/<bot> format.
prompt = "Tell me about AI"
prompt_template = f'''<human>: {prompt}
<bot>:'''

print("\n\n*** Generate:")

input_ids = tokenizer(prompt_template, return_tensors='pt').input_ids.cuda()
# do_sample=True is required for temperature to have any effect
output = model.generate(inputs=input_ids, temperature=0.7, do_sample=True, max_new_tokens=512)
print(tokenizer.decode(output[0]))

# Inference can also be done using transformers' pipeline

# Prevent printing spurious transformers error when using pipeline with AutoGPTQ
logging.set_verbosity(logging.CRITICAL)

print("*** Pipeline:")
pipe = pipeline(
    "text-generation",
    model=model,
    tokenizer=tokenizer,
    max_new_tokens=512,
    do_sample=True,
    temperature=0.7,
    top_p=0.95,
    repetition_penalty=1.15
)

print(pipe(prompt_template)[0]['generated_text'])
```

## Provided files

**h2ogpt-research-oasst1-llama-65b-GPTQ-4bit--1g.act.order.safetensors**

This will work with AutoGPTQ, ExLlama, and CUDA versions of GPTQ-for-LLaMa. There are reports of issues with the Triton mode of recent GPTQ-for-LLaMa versions. If you have issues, please use AutoGPTQ instead.

It was created without group_size to lower VRAM requirements, and with --act-order (desc_act) to boost inference accuracy as much as possible.

* `h2ogpt-research-oasst1-llama-65b-GPTQ-4bit--1g.act.order.safetensors`
  * Works with AutoGPTQ in CUDA or Triton modes.
  * LLaMa models also work with [ExLlama](https://github.com/turboderp/exllama), which usually provides much higher performance and uses less VRAM than AutoGPTQ.
  * Works with GPTQ-for-LLaMa in CUDA mode. May have issues with GPTQ-for-LLaMa Triton mode.
  * Works with text-generation-webui, including one-click-installers.
  * Parameters: Groupsize = -1. Act Order / desc_act = True.
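To make "Groupsize = -1" more concrete, here is a sketch of plain round-to-nearest (RTN) 4-bit quantisation of a single weight row. This is not GPTQ itself: GPTQ additionally uses approximate second-order information to compensate for each column's rounding error, and act-order changes the order in which columns are processed. The sketch only illustrates how group size decides how many (scale, zero) pairs must be stored: with `group_size=-1` a whole row shares one pair, while `group_size=128` stores one pair per 128 weights, which is why omitting groups lowers VRAM. The function names are hypothetical; the min/max range search is modelled on the usual GPTQ-style quantiser, but treat it as an assumption.

```python
def quantize_row(row, bits=4, group_size=-1):
    """Round-to-nearest asymmetric quantisation of one weight row.

    group_size=-1 gives the whole row a single (scale, zero) pair;
    otherwise each run of `group_size` consecutive weights gets its own pair.
    Returns a list of (scale, zero, codes) tuples, one per group.
    """
    qmax = 2 ** bits - 1  # 15 for 4-bit codes
    size = len(row) if group_size == -1 else group_size
    groups = []
    for start in range(0, len(row), size):
        chunk = row[start:start + size]
        # Widen the range to include 0 so the zero-point is itself a valid code
        lo, hi = min(min(chunk), 0.0), max(max(chunk), 0.0)
        scale = (hi - lo) / qmax or 1.0  # guard against an all-zero chunk
        zero = round(-lo / scale)
        codes = [min(qmax, max(0, round(w / scale) + zero)) for w in chunk]
        groups.append((scale, zero, codes))
    return groups


def dequantize_row(groups):
    """Map integer codes back to approximate float weights."""
    return [(q - zero) * scale for scale, zero, codes in groups for q in codes]


if __name__ == "__main__":
    # Illustrative row with the model's hidden size (8192 input features)
    row = [((i * 37) % 101) / 50.0 - 1.0 for i in range(8192)]
    for gs in (-1, 128):
        groups = quantize_row(row, bits=4, group_size=gs)
        err = max(abs(a - b) for a, b in zip(row, dequantize_row(groups)))
        print(f"group_size={gs}: {len(groups)} (scale, zero) pairs, max error {err:.4f}")
```

For the 8192-wide row this stores 1 pair with `group_size=-1` and 64 pairs with `group_size=128`; smaller groups trade extra storage for a tighter fit to each chunk's range.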

## Discord

For further support, and discussions on these models and AI in general, join us at:

[TheBloke AI's Discord server](https://discord.gg/theblokeai)

## Thanks, and how to contribute.

Thanks to the [chirper.ai](https://chirper.ai) team!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine-tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donors will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

* Patreon: https://patreon.com/TheBlokeAI

* Ko-Fi: https://ko-fi.com/TheBlokeAI

**Special thanks to**: Luke from CarbonQuill, Aemon Algiz, Dmitriy Samsonov.

**Patreon special mentions**: zynix, ya boyyy, Trenton Dambrowitz, Imad Khwaja, Alps Aficionado, chris gileta, John Detwiler, Willem Michiel, RoA, Mano Prime, Rainer Wilmers, Fred von Graf, Matthew Berman, Ghost, Nathan LeClaire, Iucharbius, Ai Maven, Illia Dulskyi, Joseph William Delisle, Space Cruiser, Lone Striker, Karl Bernard, Eugene Pentland, Greatston Gnanesh, Jonathan Leane, Randy H, Pierre Kircher, Willian Hasse, Stephen Murray, Alex, terasurfer, Edmond Seymore, Oscar Rangel, Luke Pendergrass, Asp the Wyvern, Junyu Yang, David Flickinger, Luke, Spiking Neurons AB, subjectnull, Pyrater, Nikolai Manek, senxiiz, Ajan Kanaga, Johann-Peter Hartmann, Artur Olbinski, Kevin Schuppel, Derek Yates, Kalila, K, Talal Aujan, Khalefa Al-Ahmad, Gabriel Puliatti, John Villwock, WelcomeToTheClub, Daniel P. Andersen, Preetika Verma, Deep Realms, Fen Risland, trip7s trip, webtim, Sean Connelly, Michael Levine, Chris McCloskey, biorpg, vamX, Viktor Bowallius, Cory Kujawski.

Thank you to all my generous patrons and donors!

# Original model card: H2O's H2OGPT Research OASST1 LLaMa 65B

# h2oGPT Model Card

## Summary

H2O.ai's `h2ogpt-research-oasst1-llama-65b` is a 65 billion parameter instruction-following large language model (NOT licensed for commercial use).

- Base model: [decapoda-research/llama-65b-hf](https://huggingface.co/decapoda-research/llama-65b-hf)

- Fine-tuning dataset: [h2oai/openassistant_oasst1_h2ogpt_graded](https://huggingface.co/datasets/h2oai/openassistant_oasst1_h2ogpt_graded)

- Data-prep and fine-tuning code: [H2O.ai GitHub](https://github.com/h2oai/h2ogpt)

- Training logs: [zip](https://huggingface.co/h2oai/h2ogpt-research-oasst1-llama-65b/blob/main/llama-65b-hf.h2oaiopenassistant_oasst1_h2ogpt_graded.1_epochs.113510499324f0f007cbec9d9f1f8091441f2469.3.zip)

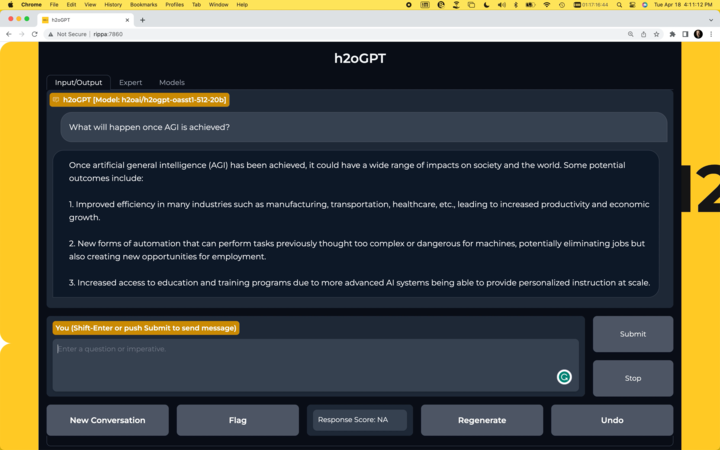

## Chatbot

- Run your own chatbot: [H2O.ai GitHub](https://github.com/h2oai/h2ogpt)


## Usage

To use the model with the `transformers` library on a machine with GPUs, first make sure you have the following libraries installed.

```bash
pip install transformers==4.29.2
pip install accelerate==0.19.0
pip install torch==2.0.1
pip install einops==0.6.1
```

```python
import torch
from transformers import pipeline, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("h2oai/h2ogpt-research-oasst1-llama-65b", padding_side="left")
generate_text = pipeline(model="h2oai/h2ogpt-research-oasst1-llama-65b", tokenizer=tokenizer, torch_dtype=torch.bfloat16, trust_remote_code=True, device_map="auto", prompt_type="human_bot")

res = generate_text("Why is drinking water so healthy?", max_new_tokens=100)
print(res[0]["generated_text"])
```

Alternatively, if you prefer not to use `trust_remote_code=True`, you can download [h2oai_pipeline.py](https://huggingface.co/h2oai/h2ogpt-research-oasst1-llama-65b/blob/main/h2oai_pipeline.py), store it alongside your notebook, and construct the pipeline yourself from the loaded model and tokenizer:

```python
import torch
from h2oai_pipeline import H2OTextGenerationPipeline
from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("h2oai/h2ogpt-research-oasst1-llama-65b", padding_side="left")
model = AutoModelForCausalLM.from_pretrained("h2oai/h2ogpt-research-oasst1-llama-65b", torch_dtype=torch.bfloat16, device_map="auto")
generate_text = H2OTextGenerationPipeline(model=model, tokenizer=tokenizer, prompt_type="human_bot")

res = generate_text("Why is drinking water so healthy?", max_new_tokens=100)
print(res[0]["generated_text"])
```

## Model Architecture

```
LlamaForCausalLM(
  (model): LlamaModel(
    (embed_tokens): Embedding(32000, 8192, padding_idx=31999)
    (layers): ModuleList(
      (0-79): 80 x LlamaDecoderLayer(
        (self_attn): LlamaAttention(
          (q_proj): Linear(in_features=8192, out_features=8192, bias=False)
          (k_proj): Linear(in_features=8192, out_features=8192, bias=False)
          (v_proj): Linear(in_features=8192, out_features=8192, bias=False)
          (o_proj): Linear(in_features=8192, out_features=8192, bias=False)
          (rotary_emb): LlamaRotaryEmbedding()
        )
        (mlp): LlamaMLP(
          (gate_proj): Linear(in_features=8192, out_features=22016, bias=False)
          (down_proj): Linear(in_features=22016, out_features=8192, bias=False)
          (up_proj): Linear(in_features=8192, out_features=22016, bias=False)
          (act_fn): SiLUActivation()
        )
        (input_layernorm): LlamaRMSNorm()
        (post_attention_layernorm): LlamaRMSNorm()
      )
    )
    (norm): LlamaRMSNorm()
  )
  (lm_head): Linear(in_features=8192, out_features=32000, bias=False)
)
```
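The layer shapes in this printout are enough to recover the parameter count and a rough weight-memory figure, which helps explain the VRAM savings from 4-bit quantisation. This is a back-of-the-envelope sketch, assuming bias-free linear layers (as printed), one weight vector per RMSNorm, and untied input/output embeddings (`tie_word_embeddings: false` in the configuration). It ignores quantisation scales and zeros, activations, and the attention cache; the helper names are illustrative.

```python
# Shapes taken from the LlamaForCausalLM printout
VOCAB = 32000
HIDDEN = 8192
INTERMEDIATE = 22016
LAYERS = 80


def count_parameters() -> int:
    embed = VOCAB * HIDDEN               # embed_tokens
    attn = 4 * HIDDEN * HIDDEN           # q/k/v/o projections, no bias
    mlp = 3 * HIDDEN * INTERMEDIATE      # gate/up/down projections, no bias
    norms = 2 * HIDDEN                   # input + post-attention RMSNorm weights
    per_layer = attn + mlp + norms
    final_norm = HIDDEN
    lm_head = HIDDEN * VOCAB             # separate from embed_tokens (untied)
    return embed + LAYERS * per_layer + final_norm + lm_head


def weight_gb(n_params: int, bits_per_weight: float) -> float:
    """Weight storage in gigabytes (1e9 bytes), ignoring quantisation metadata."""
    return n_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    n = count_parameters()
    print(f"{n:,} parameters")
    print(f"fp16 : {weight_gb(n, 16):.1f} GB")
    print(f"4-bit: {weight_gb(n, 4):.1f} GB")
```

Run as a script, it reports 65,285,660,672 parameters: roughly 130.6 GB of weights in fp16 versus about 32.6 GB at 4 bits per weight.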

## Model Configuration

```json
LlamaConfig {
  "_name_or_path": "h2oai/h2ogpt-research-oasst1-llama-65b",
  "architectures": [
    "LlamaForCausalLM"
  ],
  "bos_token_id": 0,
  "custom_pipelines": {
    "text-generation": {
      "impl": "h2oai_pipeline.H2OTextGenerationPipeline",
      "pt": "AutoModelForCausalLM"
    }
  },
  "eos_token_id": 1,
  "hidden_act": "silu",
  "hidden_size": 8192,
  "initializer_range": 0.02,
  "intermediate_size": 22016,
  "max_position_embeddings": 2048,
  "max_sequence_length": 2048,
  "model_type": "llama",
  "num_attention_heads": 64,
  "num_hidden_layers": 80,
  "pad_token_id": -1,
  "rms_norm_eps": 1e-05,
  "tie_word_embeddings": false,
  "torch_dtype": "float16",
  "transformers_version": "4.30.1",
  "use_cache": true,
  "vocab_size": 32000
}
```
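These configuration values also determine the size of the key/value cache that grows with context length during generation. A rough sketch, assuming an fp16 cache, batch size 1, and full multi-head attention (in the architecture printout, `k_proj` and `v_proj` both output all 8192 features, so keys and values are not shared across heads). The function name is illustrative.

```python
# Values taken from the LlamaConfig above
HIDDEN_SIZE = 8192
NUM_HEADS = 64
NUM_LAYERS = 80
MAX_POSITIONS = 2048

HEAD_DIM = HIDDEN_SIZE // NUM_HEADS  # 128 values per head


def kv_cache_bytes(seq_len: int, batch: int = 1, bytes_per_value: int = 2) -> int:
    """Bytes needed to cache keys and values for `seq_len` tokens.

    Each layer stores one key and one value vector of HIDDEN_SIZE values
    (NUM_HEADS heads x HEAD_DIM) per token; bytes_per_value=2 assumes fp16.
    """
    per_token_per_layer = 2 * NUM_HEADS * HEAD_DIM * bytes_per_value
    return per_token_per_layer * NUM_LAYERS * seq_len * batch


if __name__ == "__main__":
    print(f"per token: {kv_cache_bytes(1) / 2**20:.2f} MiB")
    print(f"full {MAX_POSITIONS}-token context: {kv_cache_bytes(MAX_POSITIONS) / 2**30:.2f} GiB")
```

Under these assumptions the cache costs 2.5 MiB per token, or 5 GiB for a full 2048-token context, in addition to the model weights.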

## Model Validation

Model validation results using [EleutherAI lm-evaluation-harness](https://github.com/EleutherAI/lm-evaluation-harness).

TBD

## Disclaimer

Please read this disclaimer carefully before using the large language model provided in this repository. Your use of the model signifies your agreement to the following terms and conditions.

- Biases and Offensiveness: The large language model is trained on a diverse range of internet text data, which may contain biased, racist, offensive, or otherwise inappropriate content. By using this model, you acknowledge and accept that the generated content may sometimes exhibit biases or produce content that is offensive or inappropriate. The developers of this repository do not endorse, support, or promote any such content or viewpoints.

- Limitations: The large language model is an AI-based tool and not a human. It may produce incorrect, nonsensical, or irrelevant responses. It is the user's responsibility to critically evaluate the generated content and use it at their discretion.

- Use at Your Own Risk: Users of this large language model must assume full responsibility for any consequences that may arise from their use of the tool. The developers and contributors of this repository shall not be held liable for any damages, losses, or harm resulting from the use or misuse of the provided model.

- Ethical Considerations: Users are encouraged to use the large language model responsibly and ethically. By using this model, you agree not to use it for purposes that promote hate speech, discrimination, harassment, or any form of illegal or harmful activities.

- Reporting Issues: If you encounter any biased, offensive, or otherwise inappropriate content generated by the large language model, please report it to the repository maintainers through the provided channels. Your feedback will help improve the model and mitigate potential issues.

- Changes to this Disclaimer: The developers of this repository reserve the right to modify or update this disclaimer at any time without prior notice. It is the user's responsibility to periodically review the disclaimer to stay informed about any changes.

By using the large language model provided in this repository, you agree to accept and comply with the terms and conditions outlined in this disclaimer. If you do not agree with any part of this disclaimer, you should refrain from using the model and any content generated by it.