zR

committed on

Commit

•

0588cb6

1

Parent(s):

fcc6d82

release

- README.md +121 -0

- README_zh.md +96 -0

README.md

ADDED


@@ -0,0 +1,121 @@


---
license: other
license_name: glm-4
license_link: https://huggingface.co/THUDM/glm-4-9b-chat-hf/blob/main/LICENSE
language:
- en
- zh
base_model:
- THUDM/glm-4-9b-chat-1m
new_version: THUDM/glm-4-9b-chat-1m-hf
pipeline_tag: text-generation
library_name: transformers
tags:
- chatglm
inference: false
---

# GLM-4-9B-Chat-1M

For the Chinese version, see [here](README_zh.md).

If you are using the weights from this repository, please update to

<span style="color:red; font-weight:bold;"> transformers>=4.46.0 </span>

These weights are **not compatible** with older versions of the transformers library.
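
One way to confirm the requirement above before loading the weights is to compare the installed version against the minimum. The following is a minimal sketch, not part of the official instructions: the helper names `parse_version`, `meets_minimum`, and `installed_transformers_version` are illustrative, and the parsing only looks at the leading numeric components, so a pre-release such as `4.46.0rc1` is treated the same as `4.46.0`.

```python
from importlib import metadata

MINIMUM_TRANSFORMERS = "4.46.0"  # taken from the notice above


def parse_version(text):
    """Return the leading numeric components of a version string as a tuple."""
    parts = []
    for piece in text.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break  # stop at the first non-numeric component, e.g. "dev0"
        parts.append(int(digits))
    return tuple(parts)


def meets_minimum(installed, minimum=MINIMUM_TRANSFORMERS):
    """True if `installed` is at least `minimum` (numeric components only)."""
    a, b = parse_version(installed), parse_version(minimum)
    width = max(len(a), len(b))
    # Pad with zeros so that "4.46" compares equal to "4.46.0".
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


def installed_transformers_version():
    """Return the installed transformers version, or None if it is not installed."""
    try:
        return metadata.version("transformers")
    except metadata.PackageNotFoundError:
        return None
```

A caller could check `meets_minimum(installed_transformers_version() or "0")` and stop with a clear message before attempting to load the model.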

## Model Introduction

GLM-4-9B is the open-source version of the latest generation of pre-trained models in the GLM-4 series launched by Zhipu
AI. In evaluations on datasets covering semantics, mathematics, reasoning, code, and knowledge, **GLM-4-9B**
and its human-preference-aligned version **GLM-4-9B-Chat** outperform Llama-3-8B. In
addition to multi-round conversations, GLM-4-9B-Chat also has advanced features such as web browsing, code execution,
custom tool calls (Function Call), and long-text
reasoning (supporting up to 128K context). This generation of models adds multilingual support for 26
languages, including Japanese, Korean, and German. We have also launched the **GLM-4-9B-Chat-1M** model, which supports a 1M
context length (about 2 million Chinese characters), and the multimodal model GLM-4V-9B, based on GLM-4-9B.
**GLM-4V-9B** supports dialogue in both Chinese and English at a high resolution of 1120×1120.
In various multimodal evaluations, including comprehensive abilities in Chinese and English, perception & reasoning,
text recognition, and chart understanding, GLM-4V-9B demonstrates superior performance compared to
GPT-4-turbo-2024-04-09, Gemini 1.0 Pro, Qwen-VL-Max, and Claude 3 Opus.

|

| 42 |

+

|

| 43 |

+

### Long Context

|

| 44 |

+

|

| 45 |

+

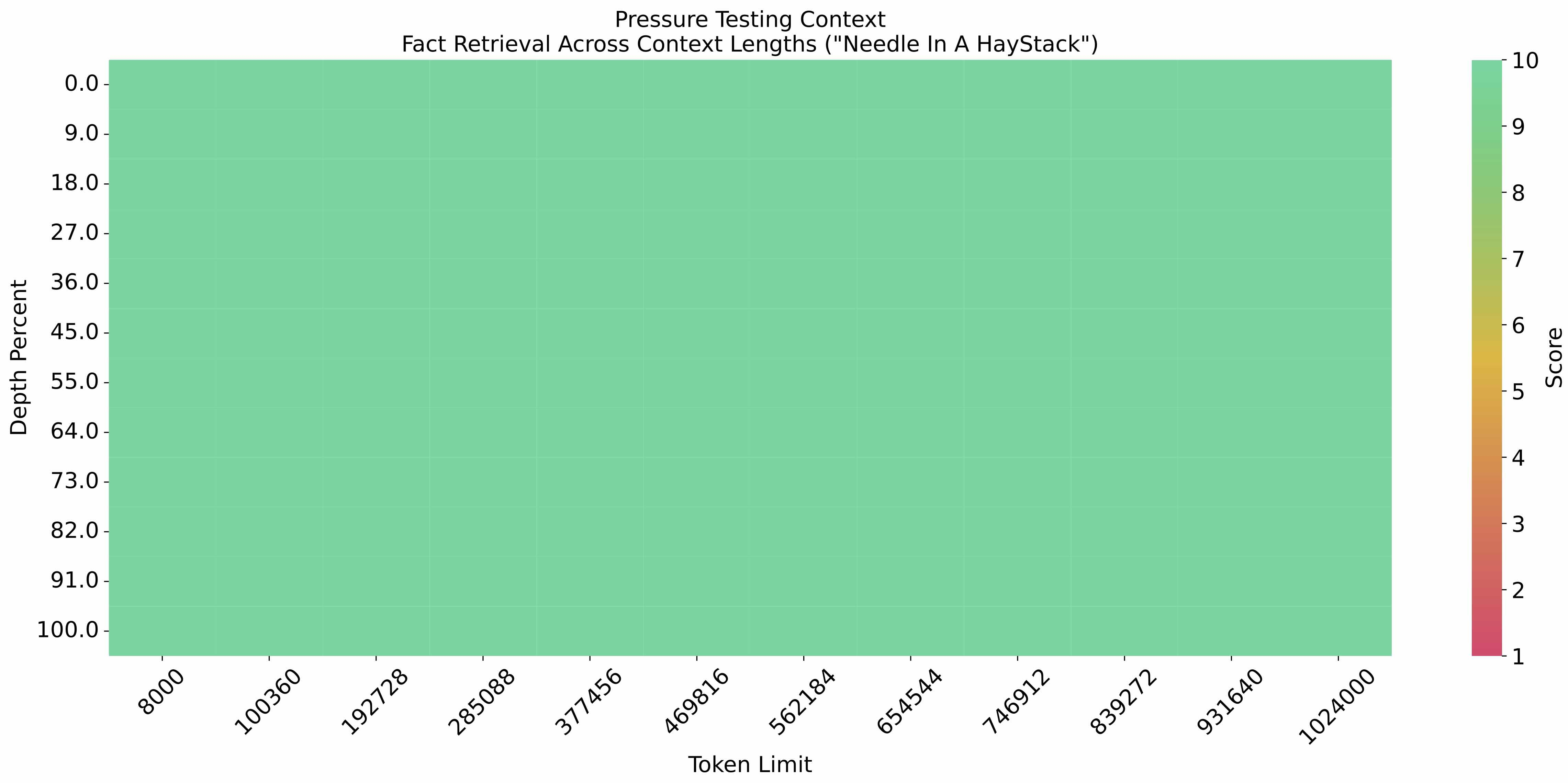

The [eval_needle experiment](https://github.com/LargeWorldModel/LWM/blob/main/scripts/eval_needle.py) was conducted with

|

| 46 |

+

a context length of 1M, and the results are as follows:

|

| 47 |

+

|

| 48 |

+

|

| 49 |

+

|

| 50 |

+

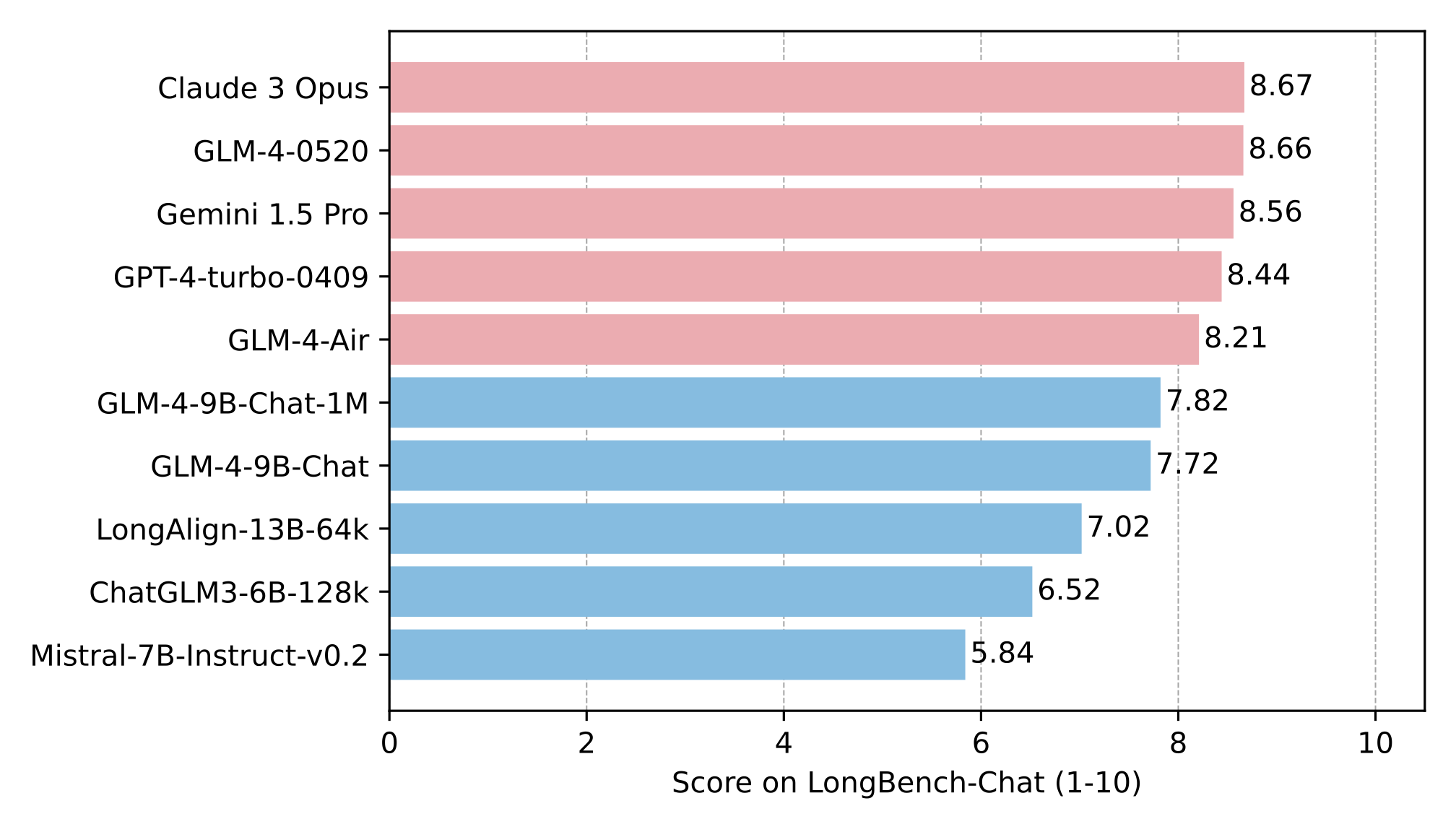

The long text capability was further evaluated on LongBench, and the results are as follows:

|

| 51 |

+

|

| 52 |

+

|

| 53 |

+

|

| 54 |

+

**This repository is the model repository of GLM-4-9B-Chat-1M, supporting `1M` context length.**
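
The introduction equates the 1M context with about 2 million Chinese characters, which implies roughly two characters per token for Chinese text. The sketch below turns that ratio into a rough pre-check for whether a document is likely to fit. It is an illustration only: the constants, the function names, and the reading of `1M` as 1,000,000 tokens are assumptions, and real token counts depend on the tokenizer and the text, so count tokens with the actual tokenizer when the margin is small.

```python
import math

CONTEXT_TOKENS = 1_000_000   # assumption: "1M" read as 10**6 tokens
CHARS_PER_TOKEN_ZH = 2.0     # implied by "about 2 million Chinese characters"


def estimated_tokens(num_chars, chars_per_token=CHARS_PER_TOKEN_ZH):
    """Rough token estimate for a text of `num_chars` characters."""
    return math.ceil(num_chars / chars_per_token)


def likely_fits(num_chars, max_new_tokens=128, context_tokens=CONTEXT_TOKENS):
    """True if the estimated prompt plus the generation budget fits the window."""
    return estimated_tokens(num_chars) + max_new_tokens <= context_tokens
```

The generation budget is included because the prompt and the newly generated tokens share the same window; `max_new_tokens=128` simply mirrors the value used in the Quick Start example.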

## Quick Start

**For more inference code and requirements, please visit our [GitHub page](https://github.com/THUDM/GLM-4).**

**Please install the [dependencies](https://github.com/THUDM/GLM-4/blob/main/basic_demo/requirements.txt) exactly as
listed; otherwise, the model will not run properly.**

### Use the following method to quickly call the GLM-4-9B-Chat-1M language model

Use the transformers backend for inference:

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

MODEL_PATH = 'ZhipuAI/glm-4-9b-chat-1m-hf'

tokenizer = AutoTokenizer.from_pretrained(MODEL_PATH)
model = AutoModelForCausalLM.from_pretrained(MODEL_PATH, device_map="auto")

message = [
    {
        "role": "system",
        "content": "Answer the following question."
    },
    {
        "role": "user",
        "content": "How many legs does a cat have?"
    }
]

# Render the chat template and tokenize it in one step.
inputs = tokenizer.apply_chat_template(
    message,
    return_tensors='pt',
    add_generation_prompt=True,
    return_dict=True,
).to(model.device)

# Remember the prompt length so that only newly generated tokens are decoded.
input_len = inputs['input_ids'].shape[1]
generate_kwargs = {
    "input_ids": inputs['input_ids'],
    "attention_mask": inputs['attention_mask'],
    "max_new_tokens": 128,
    "do_sample": False,  # greedy decoding
}
out = model.generate(**generate_kwargs)
print(tokenizer.decode(out[0][input_len:], skip_special_tokens=True))
```
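
In the example above, `out[0][input_len:]` drops the prompt tokens before decoding, because for decoder-only models `generate` returns the prompt followed by the newly generated tokens. The sketch below shows the same slicing on plain Python lists so that it runs without downloading the model. The token IDs and the helper name `strip_prompt` are made up for illustration.

```python
def strip_prompt(output_ids, input_len):
    """Return only the tokens that follow the first `input_len` prompt tokens."""
    if input_len < 0 or input_len > len(output_ids):
        raise ValueError("input_len must be between 0 and len(output_ids)")
    return output_ids[input_len:]


prompt_ids = [101, 7, 42, 9]           # hypothetical prompt token IDs
output_ids = prompt_ids + [55, 56, 2]  # prompt followed by hypothetical new tokens

new_tokens = strip_prompt(output_ids, len(prompt_ids))
print(new_tokens)  # [55, 56, 2]
```

Decoding the full `out[0]` instead would echo the system and user messages back in front of the answer.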

## LICENSE

The weights of the GLM-4 model are available under the terms of [LICENSE](LICENSE).

## Citations

If you find our work useful, please consider citing the following paper.

```
@misc{glm2024chatglm,
      title={ChatGLM: A Family of Large Language Models from GLM-130B to GLM-4 All Tools},
      author={Team GLM and Aohan Zeng and Bin Xu and Bowen Wang and Chenhui Zhang and Da Yin and Diego Rojas and Guanyu Feng and Hanlin Zhao and Hanyu Lai and Hao Yu and Hongning Wang and Jiadai Sun and Jiajie Zhang and Jiale Cheng and Jiayi Gui and Jie Tang and Jing Zhang and Juanzi Li and Lei Zhao and Lindong Wu and Lucen Zhong and Mingdao Liu and Minlie Huang and Peng Zhang and Qinkai Zheng and Rui Lu and Shuaiqi Duan and Shudan Zhang and Shulin Cao and Shuxun Yang and Weng Lam Tam and Wenyi Zhao and Xiao Liu and Xiao Xia and Xiaohan Zhang and Xiaotao Gu and Xin Lv and Xinghan Liu and Xinyi Liu and Xinyue Yang and Xixuan Song and Xunkai Zhang and Yifan An and Yifan Xu and Yilin Niu and Yuantao Yang and Yueyan Li and Yushi Bai and Yuxiao Dong and Zehan Qi and Zhaoyu Wang and Zhen Yang and Zhengxiao Du and Zhenyu Hou and Zihan Wang},
      year={2024},
      eprint={2406.12793},
      archivePrefix={arXiv},
      primaryClass={cs.CL}
}
```

README_zh.md

ADDED


@@ -0,0 +1,96 @@


# GLM-4-9B-Chat-1M

Read this in [English](README.md).

If you are using the weights from this repository, please update to

<span style="color:red; font-weight:bold;"> transformers>=4.46.0 </span>

These weights are **not compatible** with older versions of the transformers library.

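## Model Introduction

GLM-4-9B is the open-source version of the latest generation of pre-trained models in the GLM-4 series launched by Zhipu AI.
In evaluations on datasets covering semantics, mathematics, reasoning, code, and knowledge, GLM-4-9B and its human-preference-aligned version GLM-4-9B-Chat both show strong performance.
In addition to multi-round conversations, GLM-4-9B-Chat also has advanced features such as web browsing, code execution, custom tool calls (Function Call), and long-text reasoning (supporting up to 128K
context).
This generation of models adds multilingual support for 26 languages, including Japanese, Korean, and German. We have also launched a model that supports a 1M context length (about 2 million Chinese characters).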

|

| 18 |

+

|

| 19 |

+

## 评测结果

|

| 20 |

+

|

| 21 |

+

### 长文本

|

| 22 |

+

|

| 23 |

+

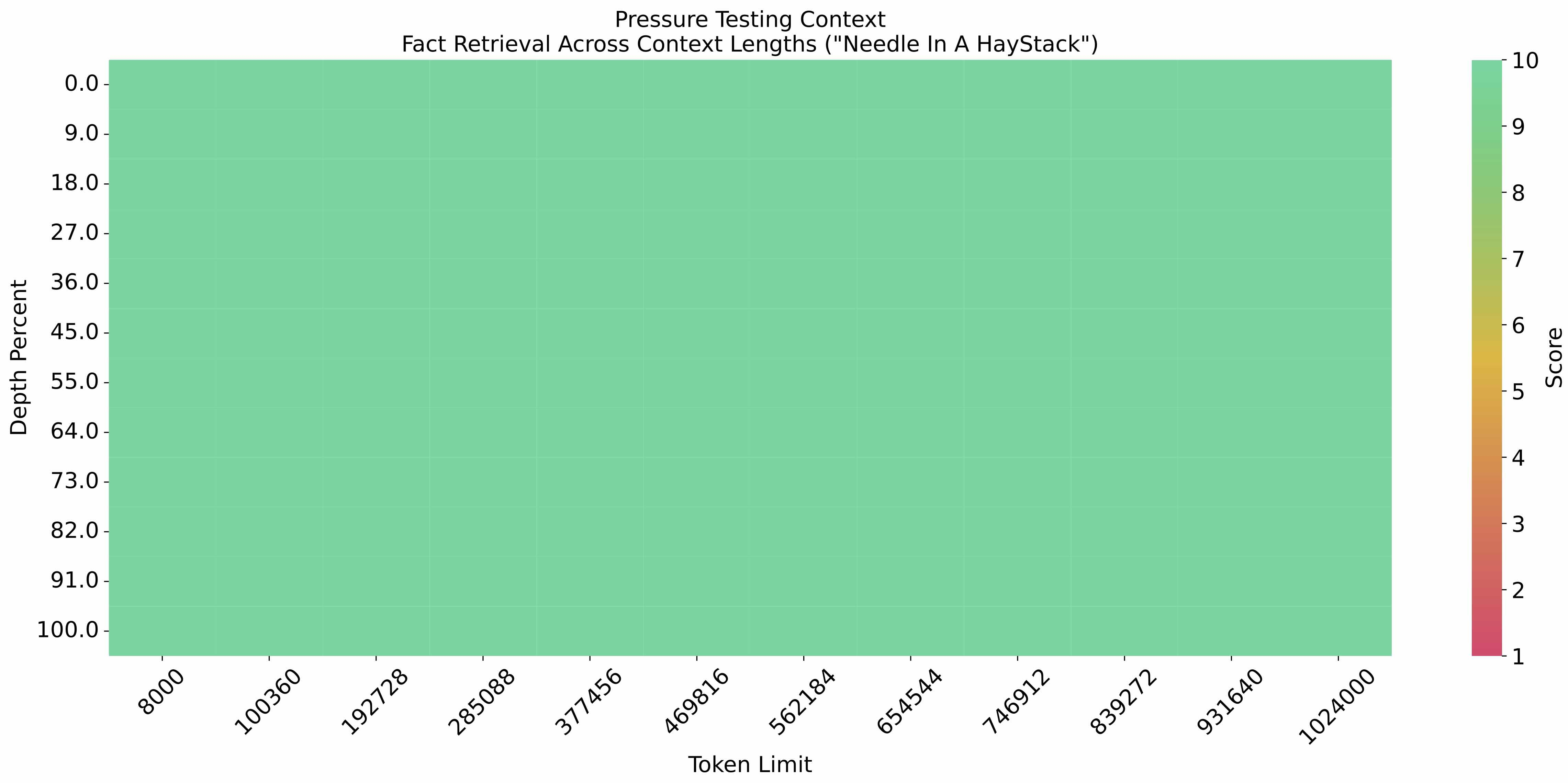

在 1M 的上下文长度下进行[大海捞针实验](https://github.com/LargeWorldModel/LWM/blob/main/scripts/eval_needle.py),结果如下:

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

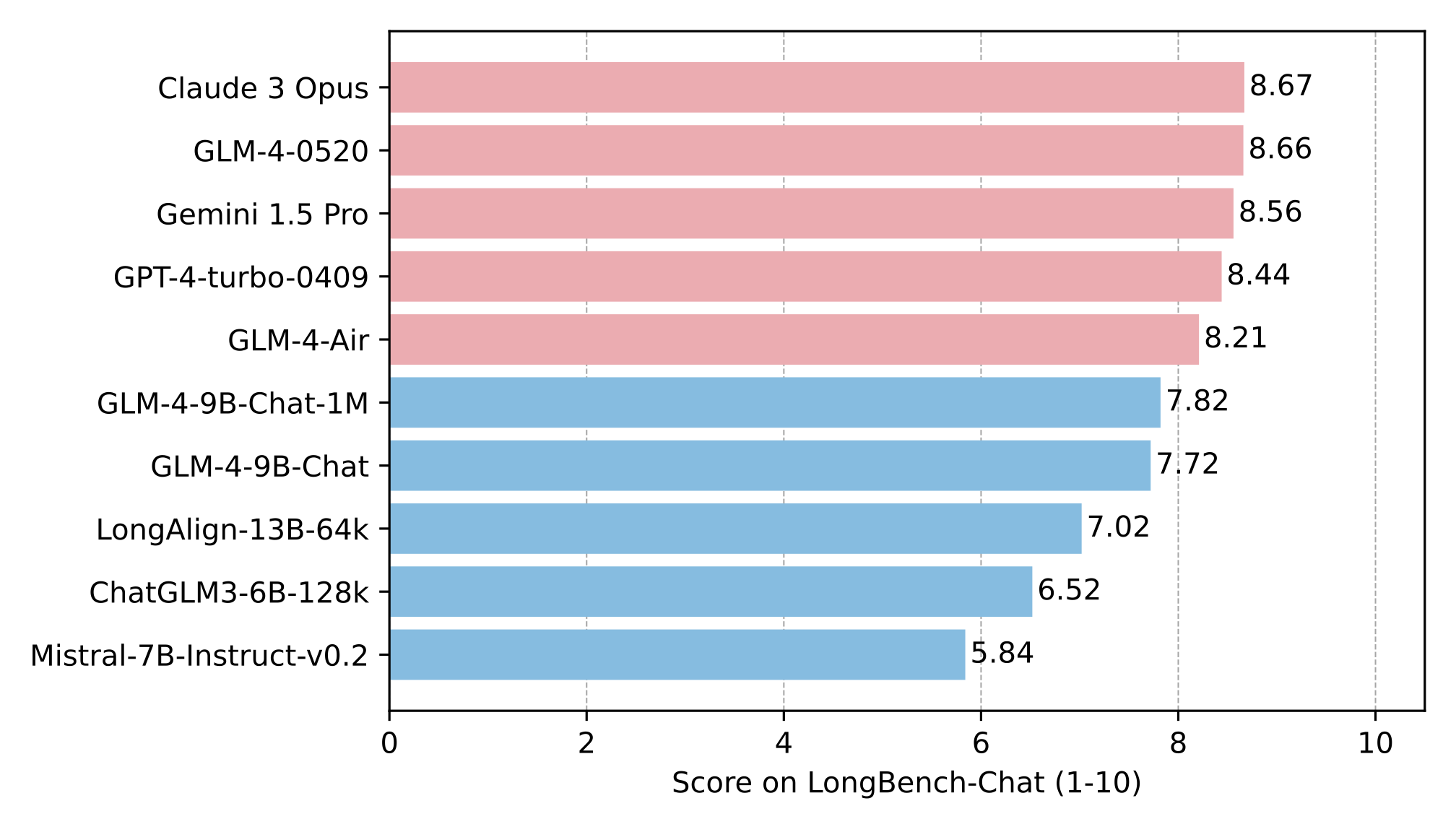

在 LongBench-Chat 上对长文本能力进行了进一步评测,结果如下:

|

| 28 |

+

|

| 29 |

+

|

| 30 |

+

|

| 31 |

+

**本仓库是 GLM-4-9B-Chat-1M 的模型仓库,支持`1M`上下文长度。**

## Running the Model

**For more inference code and dependency information, please visit our [GitHub](https://github.com/THUDM/GLM-4).**

**Please install the [dependencies](https://github.com/THUDM/GLM-4/blob/main/basic_demo/requirements.txt) exactly as listed; otherwise, the model will not run properly.**

Use the transformers backend for inference:

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

MODEL_PATH = 'ZhipuAI/glm-4-9b-chat-1m-hf'

tokenizer = AutoTokenizer.from_pretrained(MODEL_PATH)
model = AutoModelForCausalLM.from_pretrained(MODEL_PATH, device_map="auto")

message = [
    {
        "role": "system",
        "content": "Answer the following question."
    },
    {
        "role": "user",
        "content": "How many legs does a cat have?"
    }
]

# Render the chat template and tokenize it in one step.
inputs = tokenizer.apply_chat_template(
    message,
    return_tensors='pt',
    add_generation_prompt=True,
    return_dict=True,
).to(model.device)

# Remember the prompt length so that only newly generated tokens are decoded.
input_len = inputs['input_ids'].shape[1]
generate_kwargs = {
    "input_ids": inputs['input_ids'],
    "attention_mask": inputs['attention_mask'],
    "max_new_tokens": 128,
    "do_sample": False,  # greedy decoding
}
out = model.generate(**generate_kwargs)
print(tokenizer.decode(out[0][input_len:], skip_special_tokens=True))
```

## License

Use of the GLM-4 model weights must comply with the [LICENSE](LICENSE).

## Citations

If you find our work useful, please consider citing the following paper.

```
@misc{glm2024chatglm,
      title={ChatGLM: A Family of Large Language Models from GLM-130B to GLM-4 All Tools},
      author={Team GLM and Aohan Zeng and Bin Xu and Bowen Wang and Chenhui Zhang and Da Yin and Diego Rojas and Guanyu Feng and Hanlin Zhao and Hanyu Lai and Hao Yu and Hongning Wang and Jiadai Sun and Jiajie Zhang and Jiale Cheng and Jiayi Gui and Jie Tang and Jing Zhang and Juanzi Li and Lei Zhao and Lindong Wu and Lucen Zhong and Mingdao Liu and Minlie Huang and Peng Zhang and Qinkai Zheng and Rui Lu and Shuaiqi Duan and Shudan Zhang and Shulin Cao and Shuxun Yang and Weng Lam Tam and Wenyi Zhao and Xiao Liu and Xiao Xia and Xiaohan Zhang and Xiaotao Gu and Xin Lv and Xinghan Liu and Xinyi Liu and Xinyue Yang and Xixuan Song and Xunkai Zhang and Yifan An and Yifan Xu and Yilin Niu and Yuantao Yang and Yueyan Li and Yushi Bai and Yuxiao Dong and Zehan Qi and Zhaoyu Wang and Zhen Yang and Zhengxiao Du and Zhenyu Hou and Zihan Wang},
      year={2024},
      eprint={2406.12793},
      archivePrefix={arXiv},
      primaryClass={cs.CL}
}
```