Commit d35f2b4 • Parent(s): deceecb

Update README.md

README.md CHANGED

@@ -1,21 +1,94 @@

---
tags:
---

[<img src="https://raw.githubusercontent.com/axolotl-ai-cloud/axolotl/main/image/axolotl-badge-web.png" alt="Built with Axolotl" width="200" height="32"/>](https://github.com/axolotl-ai-cloud/axolotl)
<details><summary>See axolotl config</summary>

```yaml
base_model: arcee-ai/Llama-3.1-SuperNova-Lite
model_type: AutoModelForCausalLM

@@ -57,8 +130,6 @@ datasets:
type: chat_template
- path: anthracite-org/kalo_misc_part2
type: chat_template
- path: anthracite-org/kalo_misc_part2
type: chat_template
- path: Nitral-AI/Creative_Writing-ShareGPT
type: chat_template
- path: NewEden/Gryphe-Sonnet3.5-Charcard-Roleplay-unfiltered

@@ -129,54 +200,13 @@ special_tokens:
eos_token: <|eot_id|>

```

</details><br>

This model is a fine-tuned version of [arcee-ai/Llama-3.1-SuperNova-Lite](https://huggingface.co/arcee-ai/Llama-3.1-SuperNova-Lite) on the None dataset.

## Model description

More information needed

## Intended uses & limitations

More information needed

## Training and evaluation data

More information needed

## Training procedure

### Training hyperparameters

The following hyperparameters were used during training:
- learning_rate: 1e-05
- train_batch_size: 1
- eval_batch_size: 1
- seed: 42
- distributed_type: multi-GPU
- num_devices: 2
- gradient_accumulation_steps: 32
- total_train_batch_size: 64
- total_eval_batch_size: 2
- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08
- lr_scheduler_type: cosine
- lr_scheduler_warmup_steps: 5
- num_epochs: 2
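
The batch-size entries in this list are linked by simple arithmetic. The total training batch is the per-device batch times the number of devices times the gradient-accumulation steps (1 × 2 × 32 = 64). The total evaluation batch has no accumulation (1 × 2 = 2).

The Python sketch below checks those two numbers. It also computes a linear-warmup, half-cosine learning-rate curve. Reading `lr_scheduler_type: cosine` with `lr_scheduler_warmup_steps: 5` this way is an assumption: the formula mirrors the shape of the cosine-with-warmup schedule in Hugging Face Transformers. `TOTAL_STEPS` is a hypothetical value, because the card does not report how many optimizer steps were run.

```python
import math


def total_batch_size(per_device: int, num_devices: int, grad_accum_steps: int = 1) -> int:
    """Number of samples that contribute to one optimizer step."""
    return per_device * num_devices * grad_accum_steps


def cosine_with_warmup_lr(step: int, base_lr: float, warmup_steps: int, total_steps: int) -> float:
    """Learning rate at `step`: linear warmup, then half-cosine decay to 0.

    Assumed to match the shape of Hugging Face's cosine-with-warmup schedule
    (num_cycles=0.5); not taken from the training run itself.
    """
    if step < warmup_steps:
        # Linear ramp from 0 up to base_lr over the warmup steps.
        return base_lr * step / max(1, warmup_steps)
    # Fraction of the post-warmup phase completed, in [0, 1].
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return base_lr * max(0.0, 0.5 * (1.0 + math.cos(math.pi * progress)))


# Values from the hyperparameter list above.
train_total = total_batch_size(per_device=1, num_devices=2, grad_accum_steps=32)
eval_total = total_batch_size(per_device=1, num_devices=2)  # no accumulation at eval time
print(train_total, eval_total)  # 64 2

# HYPOTHETICAL step count: the card does not state the number of optimizer steps.
TOTAL_STEPS = 105
for step in (0, 5, 55, TOTAL_STEPS):
    print(step, cosine_with_warmup_lr(step, base_lr=1e-5, warmup_steps=5, total_steps=TOTAL_STEPS))
```

With these assumptions, the rate climbs to 1e-05 at step 5. It falls to half of that at the midpoint of the decay phase and reaches 0 at the last step.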

### Training results

### Framework versions

- Pytorch 2.4.0+cu121
- Datasets 2.19.1
- Tokenizers 0.19.1

---
License: agpl-3.0
Language:
- En
Pipeline_tag: text-generation
Base_model: arcee-ai/Llama-3.1-SuperNova-Lite
Tags:
- Chat
license: agpl-3.0
datasets:
- Gryphe/Sonnet3.5-SlimOrcaDedupCleaned
- Nitral-AI/Cybersecurity-ShareGPT
- Nitral-AI/Medical_Instruct-ShareGPT
- Nitral-AI/Olympiad_Math-ShareGPT
- anthracite-org/kalo_opus_misc_240827
- NewEden/Claude-Instruct-5k
- lodrick-the-lafted/kalo-opus-instruct-3k-filtered
- anthracite-org/kalo-opus-instruct-22k-no-refusal
- Epiculous/Synthstruct-Gens-v1.1-Filtered-n-Cleaned
- Epiculous/SynthRP-Gens-v1.1-Filtered-n-Cleaned
- anthracite-org/kalo_misc_part2
- Nitral-AI/Creative_Writing-ShareGPT
- NewEden/Gryphe-Sonnet3.5-Charcard-Roleplay-unfiltered
tags:
- chat
language:
- en
base_model:
- arcee-ai/Llama-3.1-SuperNova-Lite
---

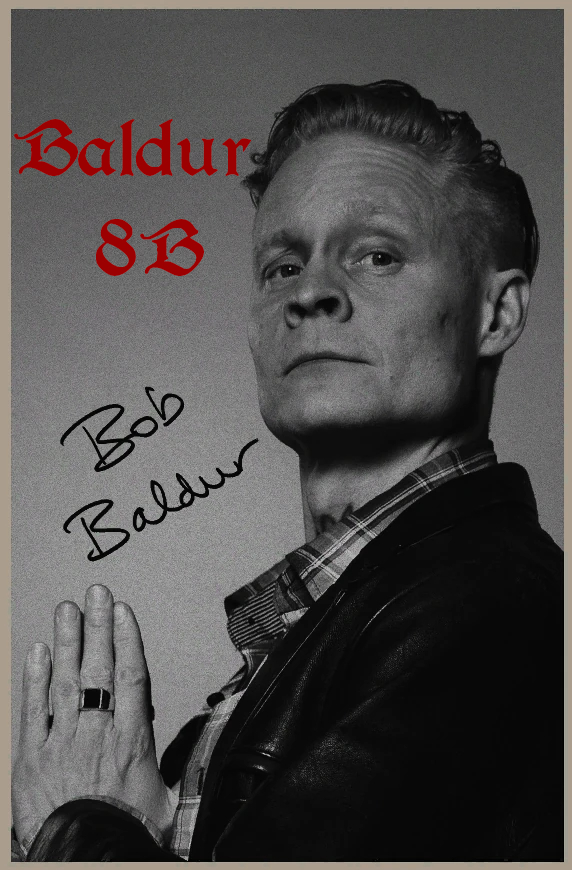

An finetune of the L3.1 instruct distill done by Arcee, The intent of this model is to have differing prose then my other releases, in my testing it has achieved this and avoiding using common -isms frequently and has a differing flavor then my other models.

# Quants

GGUF: https://huggingface.co/Delta-Vector/Baldur-8B-GGUF

EXL2: https://huggingface.co/Delta-Vector/Baldur-8B-EXL2

## Prompting

The model has been instruct-tuned with the Llama 3 Instruct prompt format. A typical input looks like this:

```py
"""<|begin_of_text|><|start_header_id|>system<|end_header_id|>

You are an AI built to rid the world of bonds and journeys!<|eot_id|><|start_header_id|>user<|end_header_id|>

Bro i just wanna know what is 2+2?<|eot_id|><|start_header_id|>assistant<|end_header_id|>

"""
```
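
The helper below sketches how a string like this can be assembled from a list of chat turns. Each turn is wrapped in the Llama 3 special tokens, with a blank line after every `<|end_header_id|>` as in Meta's published Llama 3 format.

This is a hand-rolled illustration, not the model's own chat template. The Llama 3.1 template applied by Transformers' `tokenizer.apply_chat_template` may add extra text that this version omits, such as date lines in the system turn. Generation should stop at `<|eot_id|>`, which the Axolotl config sets as `eos_token`.

```python
from typing import Dict, List

# Special tokens of the Llama 3 / 3.1 Instruct format.
BOS = "<|begin_of_text|>"
EOT = "<|eot_id|>"


def header(role: str) -> str:
    """Role header followed by the blank line the format expects."""
    return f"<|start_header_id|>{role}<|end_header_id|>\n\n"


def build_llama3_prompt(messages: List[Dict[str, str]], add_generation_prompt: bool = True) -> str:
    """Render [{"role": ..., "content": ...}, ...] into one Llama 3 style prompt string."""
    parts = [BOS]
    for msg in messages:
        parts.append(header(msg["role"]) + msg["content"] + EOT)
    if add_generation_prompt:
        # Open an assistant turn so the model writes the reply next.
        parts.append(header("assistant"))
    return "".join(parts)


prompt = build_llama3_prompt([
    {"role": "system", "content": "You are an AI built to rid the world of bonds and journeys!"},
    {"role": "user", "content": "Bro i just wanna know what is 2+2?"},
])
print(prompt)
```

If you tokenize the resulting string yourself, make sure the tokenizer does not prepend a second `<|begin_of_text|>`.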

## System Prompting

I highly recommend using Sao10k's Euryale system prompt, but the "Roleplay Simple" system prompt provided within SillyTavern will also work.

```
Currently, your role is {{char}}, described in detail below. As {{char}}, continue the narrative exchange with {{user}}.

<Guidelines>
• Maintain the character persona but allow it to evolve with the story.
• Be creative and proactive. Drive the story forward, introducing plotlines and events when relevant.
• All types of outputs are encouraged; respond accordingly to the narrative.
• Include dialogues, actions, and thoughts in each response.
• Utilize all five senses to describe scenarios within {{char}}'s dialogue.
• Use emotional symbols such as "!" and "~" in appropriate contexts.
• Incorporate onomatopoeia when suitable.
• Allow time for {{user}} to respond with their own input, respecting their agency.
• Act as secondary characters and NPCs as needed, and remove them when appropriate.
• When prompted for an Out of Character [OOC:] reply, answer neutrally and in plaintext, not as {{char}}.
</Guidelines>

<Forbidden>
• Using excessive literary embellishments and purple prose unless dictated by {{char}}'s persona.
• Writing for, speaking, thinking, acting, or replying as {{user}} in your response.
• Repetitive and monotonous outputs.
• Positivity bias in your replies.
• Being overly extreme or NSFW when the narrative context is inappropriate.
</Forbidden>

Follow the instructions in <Guidelines></Guidelines>, avoiding the items listed in <Forbidden></Forbidden>.

```
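
`{{char}}` and `{{user}}` in this prompt are SillyTavern macros. The frontend replaces them with the character and user names before the text reaches the model. If you use the prompt outside SillyTavern, you have to do that substitution yourself.

Below is a minimal sketch, assuming plain string replacement is enough. `fill_macros` is a hypothetical helper, not a SillyTavern or library API, and it handles only `{{name}}`-style macros. The character and user names are placeholders.

```python
import re
from typing import Dict

# Matches {{name}} macros such as {{char}} or {{user}}.
MACRO = re.compile(r"\{\{(\w+)\}\}")


def fill_macros(template: str, values: Dict[str, str]) -> str:
    """Hypothetical helper: replace {{name}} with values[name]; unknown macros are left untouched."""
    return MACRO.sub(lambda m: values.get(m.group(1), m.group(0)), template)


system_prompt = (
    "Currently, your role is {{char}}, described in detail below. "
    "As {{char}}, continue the narrative exchange with {{user}}."
)
# Placeholder names for illustration only.
print(fill_macros(system_prompt, {"char": "Mira", "user": "Alex"}))
```

Leaving unknown macros untouched, rather than blanking them, makes a missing value easy to spot in the rendered prompt.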

## Axolotl config

<details><summary>See axolotl config</summary>

Axolotl version: `0.4.1`
```yaml
base_model: arcee-ai/Llama-3.1-SuperNova-Lite
model_type: AutoModelForCausalLM
type: chat_template
- path: anthracite-org/kalo_misc_part2
type: chat_template
- path: Nitral-AI/Creative_Writing-ShareGPT
type: chat_template
- path: NewEden/Gryphe-Sonnet3.5-Charcard-Roleplay-unfiltered
eos_token: <|eot_id|>

```

## Credits

Thank you to [Lucy Knada](https://huggingface.co/lucyknada), [Kalomaze](https://huggingface.co/kalomaze), [Kubernetes Bad](https://huggingface.co/kubernetes-bad) and the rest of [Anthracite](https://huggingface.co/anthracite-org) (but not Alpin).
</details><br>

## Training
Training ran for 2 epochs. I used 2 x [RTX 6000](https://www.nvidia.com/en-us/design-visualization/rtx-6000/) GPUs, graciously provided by [Kubernetes Bad](https://huggingface.co/kubernetes-bad), for full-parameter fine-tuning of the model.

[<img src="https://raw.githubusercontent.com/OpenAccess-AI-Collective/axolotl/main/image/axolotl-badge-web.png" alt="Built with Axolotl" width="200" height="32"/>](https://github.com/OpenAccess-AI-Collective/axolotl)